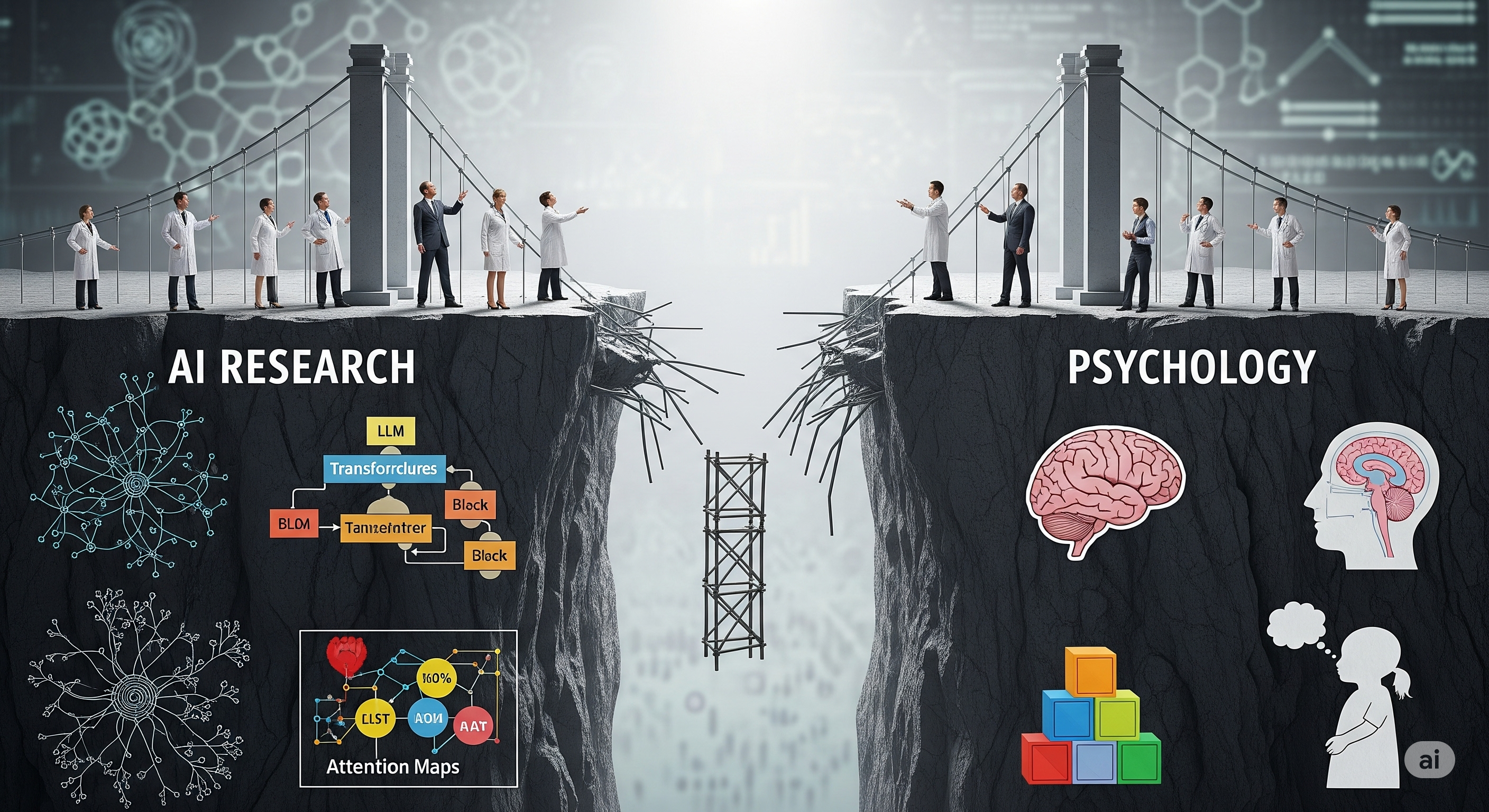

Mind the Gap: How AI Papers Misuse Psychology

It has become fashionable for AI researchers to pepper their papers with references to psychology: System 1 and System 2 thinking, Theory of Mind, memory systems, even empathy. But according to a recent meta-analysis titled “The Incomplete Bridge: How AI Research (Mis)Engages with Psychology”, these references are often little more than conceptual garnish. The authors analyze 88 AI papers from NeurIPS and ACL (2022-2023) that cite psychological concepts. Their verdict is sobering: while 78% use psychology as inspiration, only 6% attempt to empirically validate or challenge psychological theories. Most papers cite psychology in passing, using it as window dressing to make AI behaviors sound more human-like. ...