Boxed In, Cashed Out: Deep Gradient Flows for Fast American Option Pricing

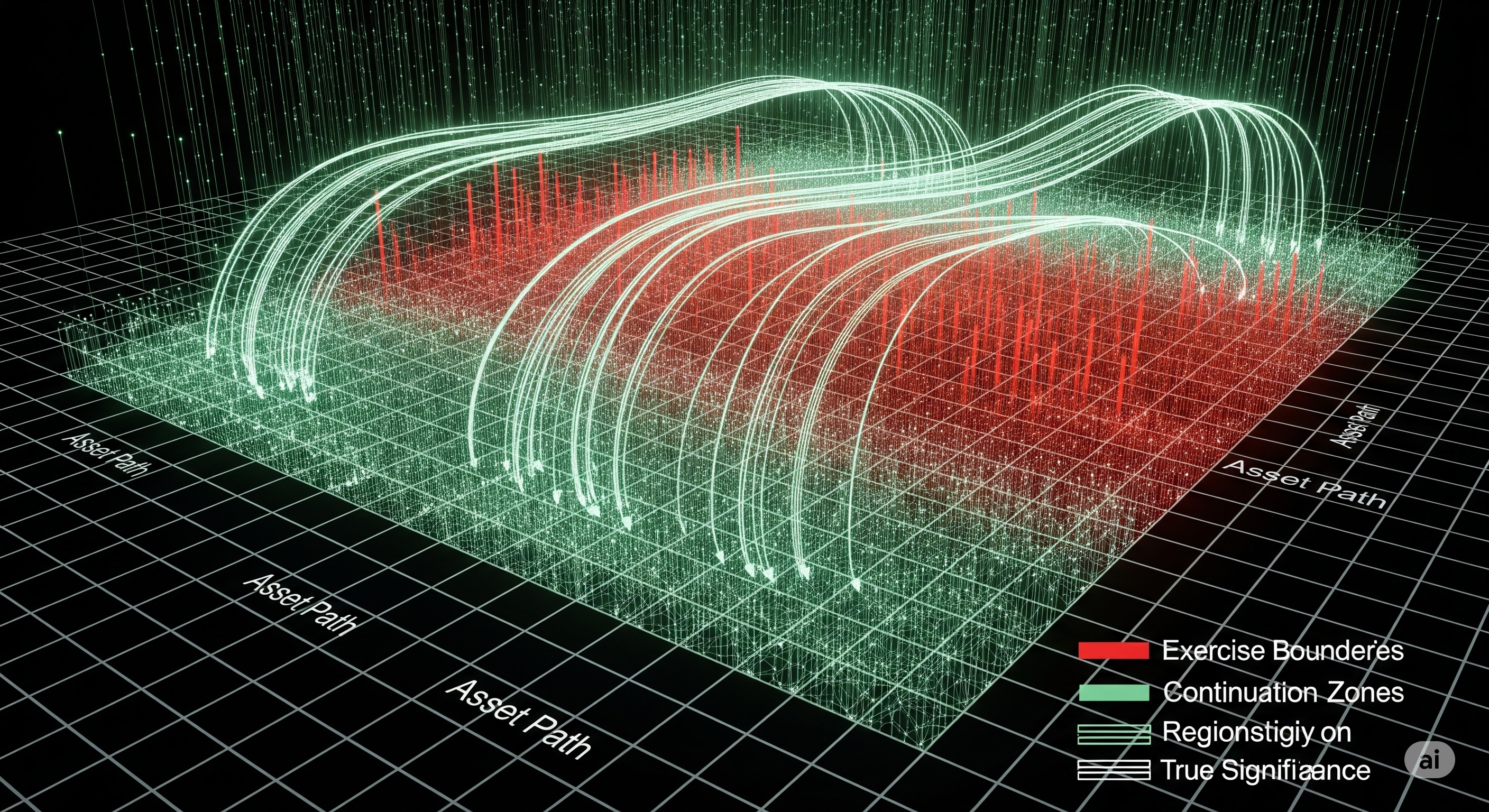

Pricing American options has long been the Achilles’ heel of quantitative finance, particularly in high dimensions. Unlike European options, American-style derivatives introduce a free-boundary problem through their early exercise feature, which makes analytical solutions elusive and most numerical methods inefficient beyond two or three assets. But a recent paper by Jasper Rou introduces a promising technique, the Time Deep Gradient Flow (TDGF), that sidesteps several of these barriers with a fresh take on deep learning design, optimization, and sampling. ...