From Graph to Grit: Diagnosing Warehouse Bottlenecks with LLMs and Knowledge Graphs

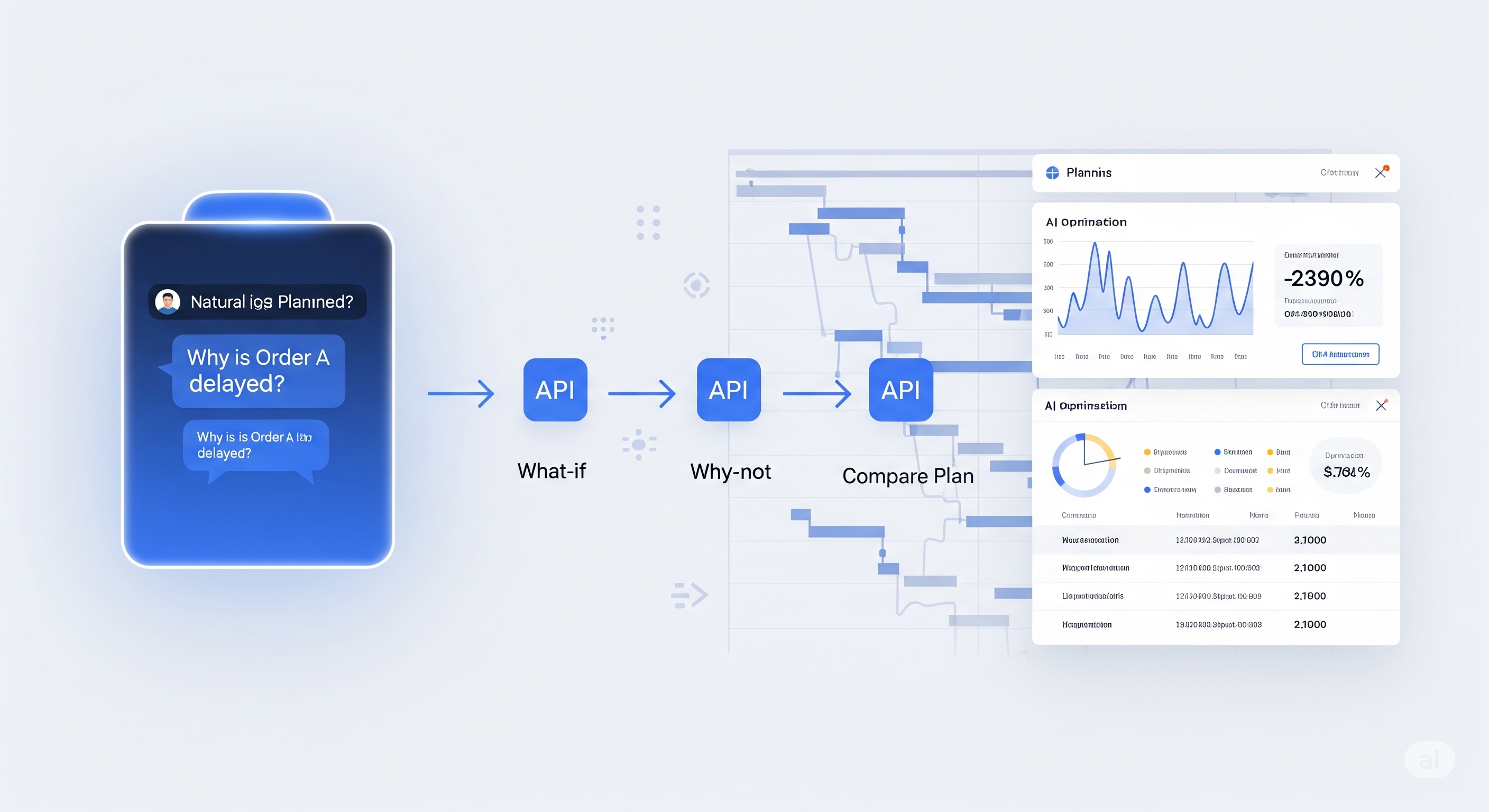

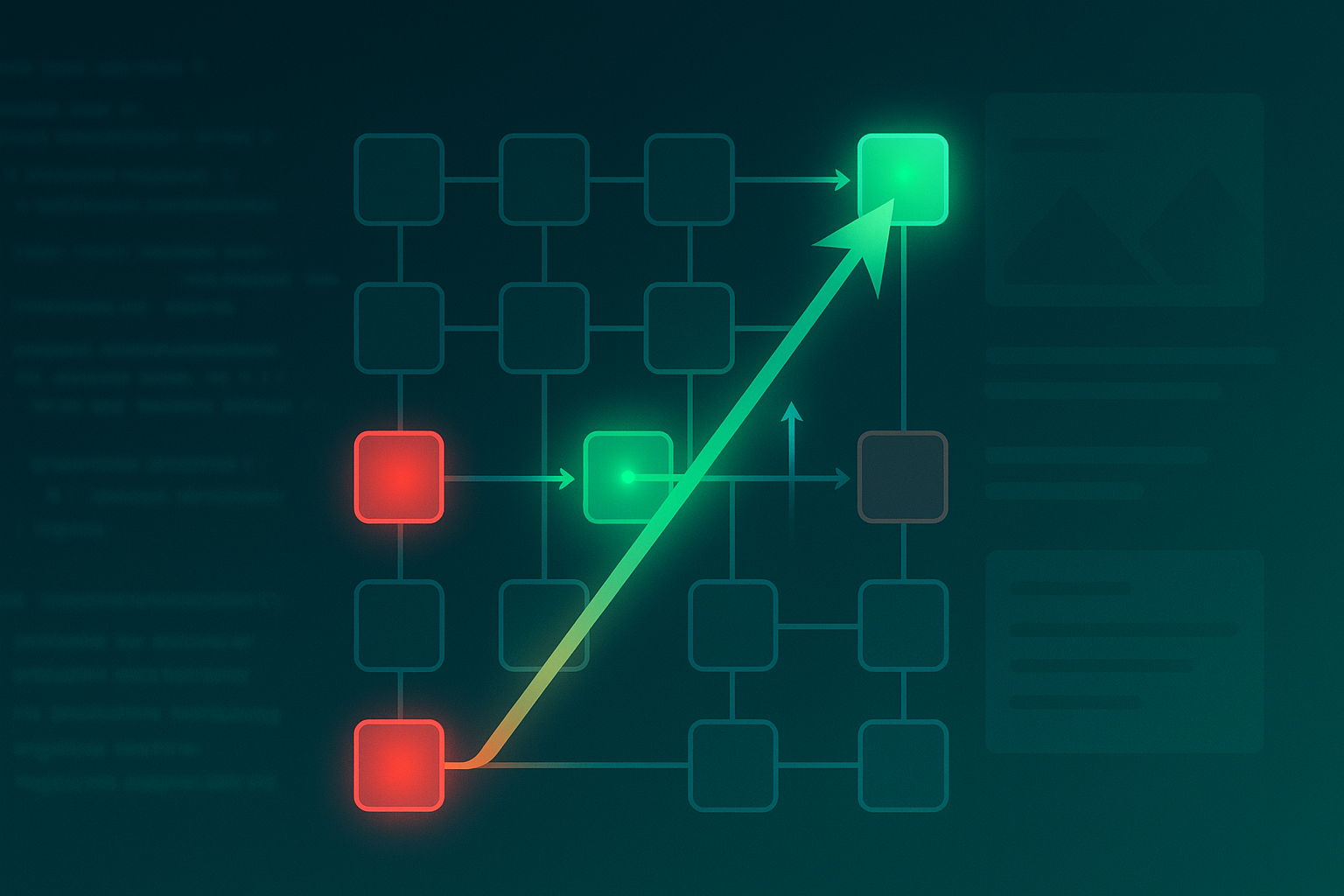

In the age of Digital Twins and hyper-automated warehouses, simulations are everywhere, but insights are not. Discrete Event Simulations (DES) generate rich, micro-level data on logistics flows, delays, and resource utilization, yet interpreting these data remains a manual, fragile, and siloed process. This paper from Quantiphi introduces a compelling solution: transforming raw simulation outputs into a Knowledge Graph (KG) and querying it via an LLM agent that mimics human investigative reasoning. It's a shift from spreadsheet-style summaries to an interactive AI assistant that explains why something is slow, where the bottleneck is, and what needs attention. ...
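To make the idea concrete, here is a minimal Python sketch of the first half of that pipeline: turning a DES event log into a small graph and asking it where packages wait longest. Everything in it is an assumption made for illustration, not the paper's schema or code: the event fields (`package`, `station`, `arrive`, `start`, `end`), the `PROCESSED_AT` relationship, and the helper names are all hypothetical. The LLM agent is left out; `find_bottleneck` stands in for the kind of graph query such an agent might issue.

```python
from collections import defaultdict

# Hypothetical DES event log: one record per package visit to a station.
# Times are in arbitrary simulation units.
EVENTS = [
    {"package": "P1", "station": "unload", "arrive": 0, "start": 0, "end": 4},
    {"package": "P1", "station": "sort", "arrive": 4, "start": 4, "end": 10},
    {"package": "P2", "station": "unload", "arrive": 1, "start": 4, "end": 8},
    {"package": "P2", "station": "sort", "arrive": 8, "start": 10, "end": 16},
    {"package": "P3", "station": "unload", "arrive": 2, "start": 8, "end": 12},
    {"package": "P3", "station": "sort", "arrive": 12, "start": 16, "end": 22},
]


def build_graph(events):
    """Return (nodes, edges) for a tiny property graph.

    nodes maps a node id to its label; edges is a list of
    (source, relationship, target, properties) tuples.
    """
    nodes, edges = {}, []
    for e in events:
        nodes[e["package"]] = "Package"
        nodes[e["station"]] = "Station"
        props = {
            "wait": e["start"] - e["arrive"],   # time spent queueing
            "service": e["end"] - e["start"],   # time spent being processed
        }
        edges.append((e["package"], "PROCESSED_AT", e["station"], props))
    return nodes, edges


def mean_wait_by_station(edges):
    """Average queueing time per station over all PROCESSED_AT edges."""
    waits = defaultdict(list)
    for _src, rel, dst, props in edges:
        if rel == "PROCESSED_AT":
            waits[dst].append(props["wait"])
    return {station: sum(w) / len(w) for station, w in waits.items()}


def find_bottleneck(edges):
    """Return the station with the highest mean queueing time."""
    means = mean_wait_by_station(edges)
    return max(means, key=means.get)


if __name__ == "__main__":
    nodes, edges = build_graph(EVENTS)
    print(mean_wait_by_station(edges))
    print("Bottleneck:", find_bottleneck(edges))
```

In a fuller system the graph would likely live in a graph database, and the agent would compose queries like this step by step rather than call one fixed function. The sketch only shows why a graph is a convenient target: waits and service times become edge properties that can be aggregated per station.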