Agents in a Sandbox: Securing the Next Layer of AI Autonomy

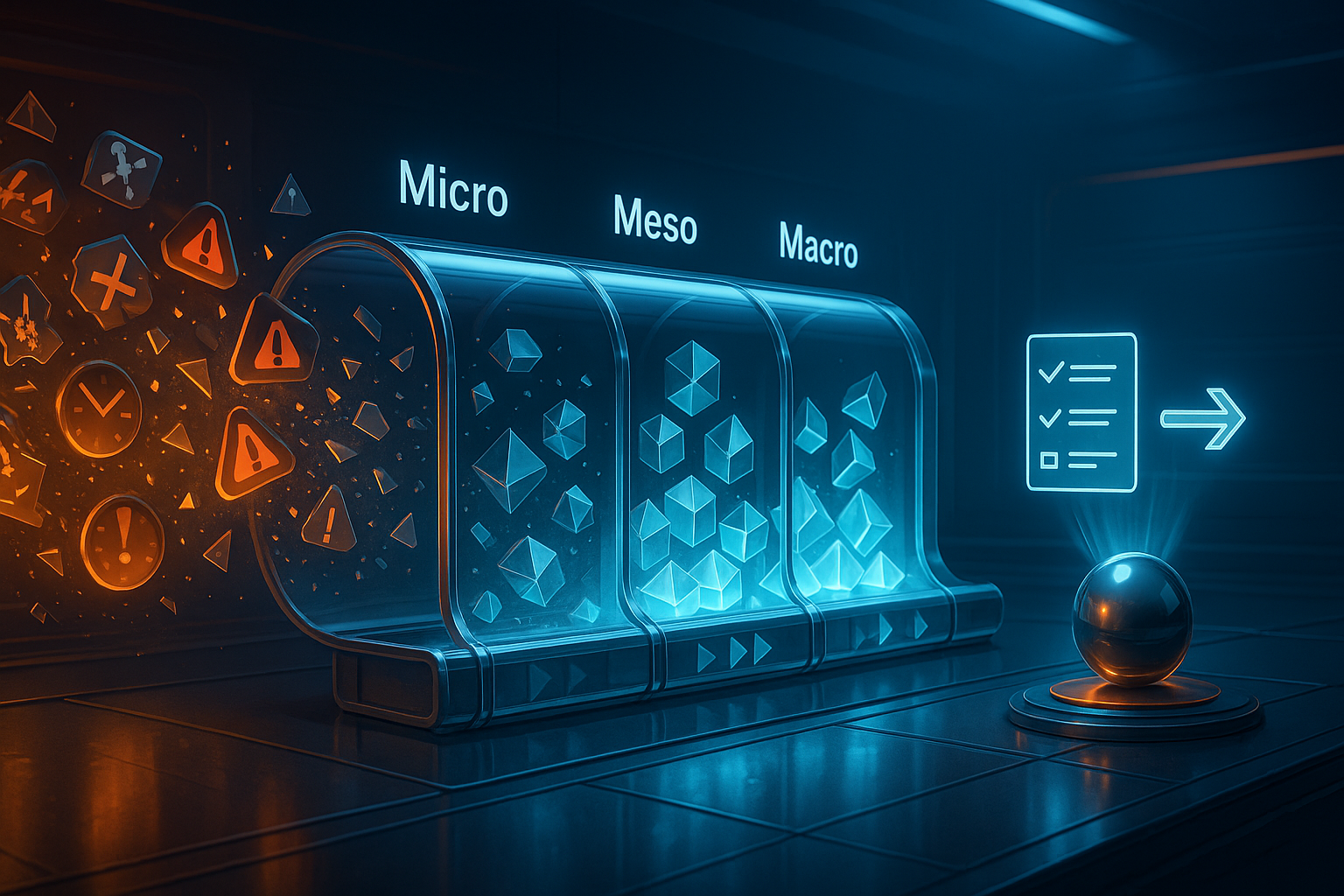

The rise of AI agents, large language models (LLMs) equipped with tool use, file access, and code execution, has been breathtaking. But that power has come with a blind spot: security. If a model can read your local files, fetch data online, and run code, what prevents it from being hijacked? Until now, not much. A new paper, *Securing AI Agent Execution* (Bühler et al., 2025), introduces AgentBound, a framework designed to give AI agents what every other computing platform already has: permissions, isolation, and accountability. Think of it as the Android permission model for the Model Context Protocol (MCP), the standard interface that allows agents to interact with external servers, APIs, and data. ...