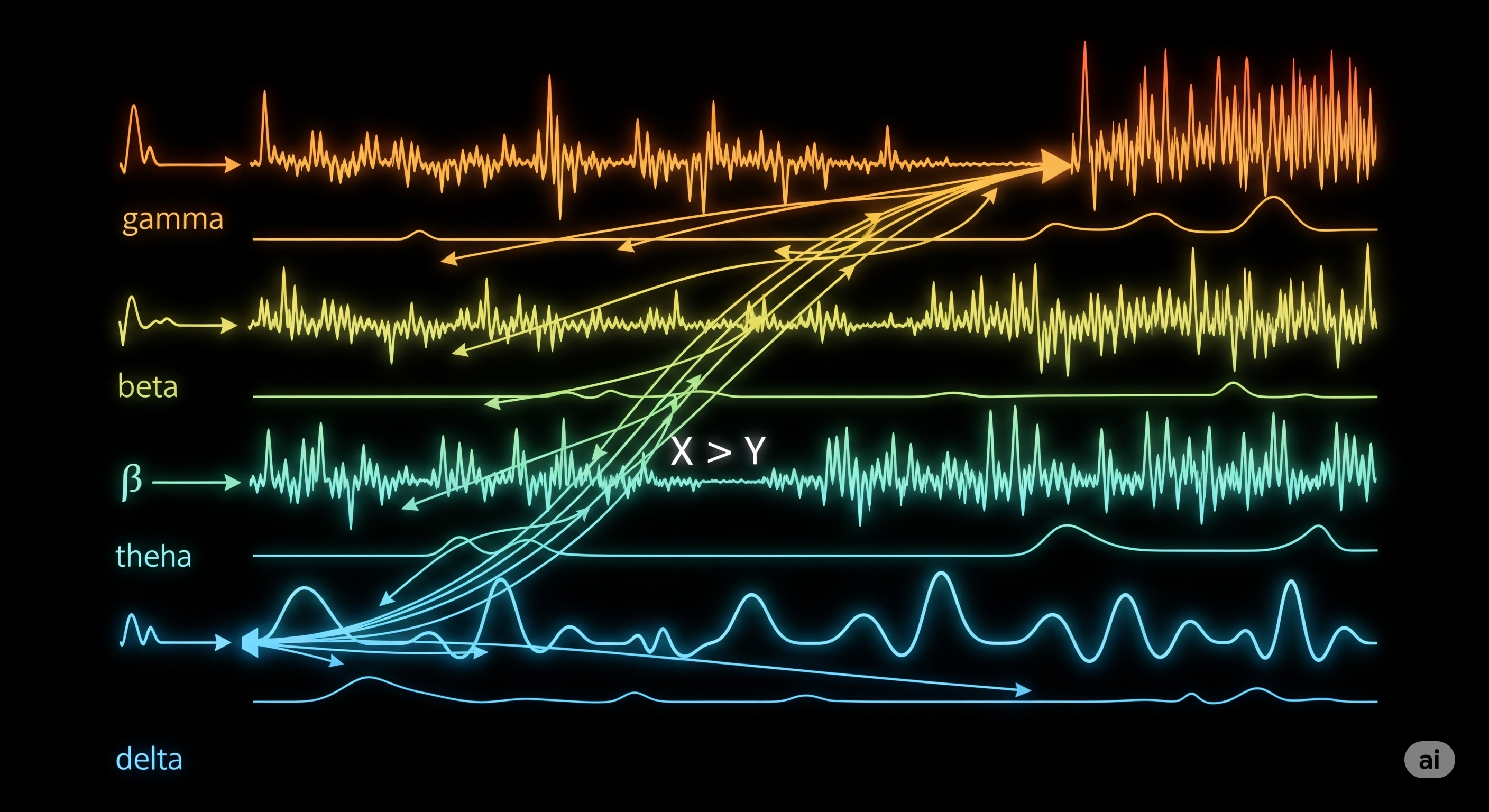

Causality in Stereo: How Multi-Band Granger Unveils Frequency-Specific Influence

Causality is rarely one-size-fits-all, especially in time series data. Whether you’re analyzing brainwaves, financial markets, or industrial processes, both the timing of an influence and the frequency at which it occurs matter. Traditional Granger causality assumes a fixed time delay between cause and effect, while Variable-Lag Granger Causality (VLGC) relaxes that assumption by letting the delay vary through dynamic time alignment. But even VLGC does not capture frequency-specific causal dynamics, which are common in complex systems. ...