Opening — Why this matters now

AI adoption has entered its second, less glamorous phase.

The first phase was easy to explain: make the model generate things. Emails, reports, code, dashboards, summaries, customer replies, compliance drafts, market notes, training content. Give the machine a prompt, admire the fluent output, and pretend the future has arrived because the paragraphs are well-spaced.

The second phase is harder: how do we know the output is any good?

This is where many business AI projects quietly stall. The model can produce ten answers, but the organization cannot reliably rank them. It can execute a workflow, but the team cannot tell whether a failure came from the wrong instruction, the wrong data, the wrong policy, or the wrong judgment standard. It can be “aligned” in the abstract, but the sales team, legal team, product team, and regional manager may want very different things from the same system.

That is not a philosophical inconvenience. It is an operating cost.

Four recent papers point to the same problem from different angles. One studies how to align models in fuzzy domains using online natural-language feedback. One benchmarks whether reward models can generalize across diverse user preferences. One builds a domain-specific reward model for story generation. One applies language-driven hierarchical reinforcement learning to pair trading. On the surface, this is a strange dinner party: creative writing, reward benchmarks, online feedback, and equity market pair trading. Delightful. Very normal.

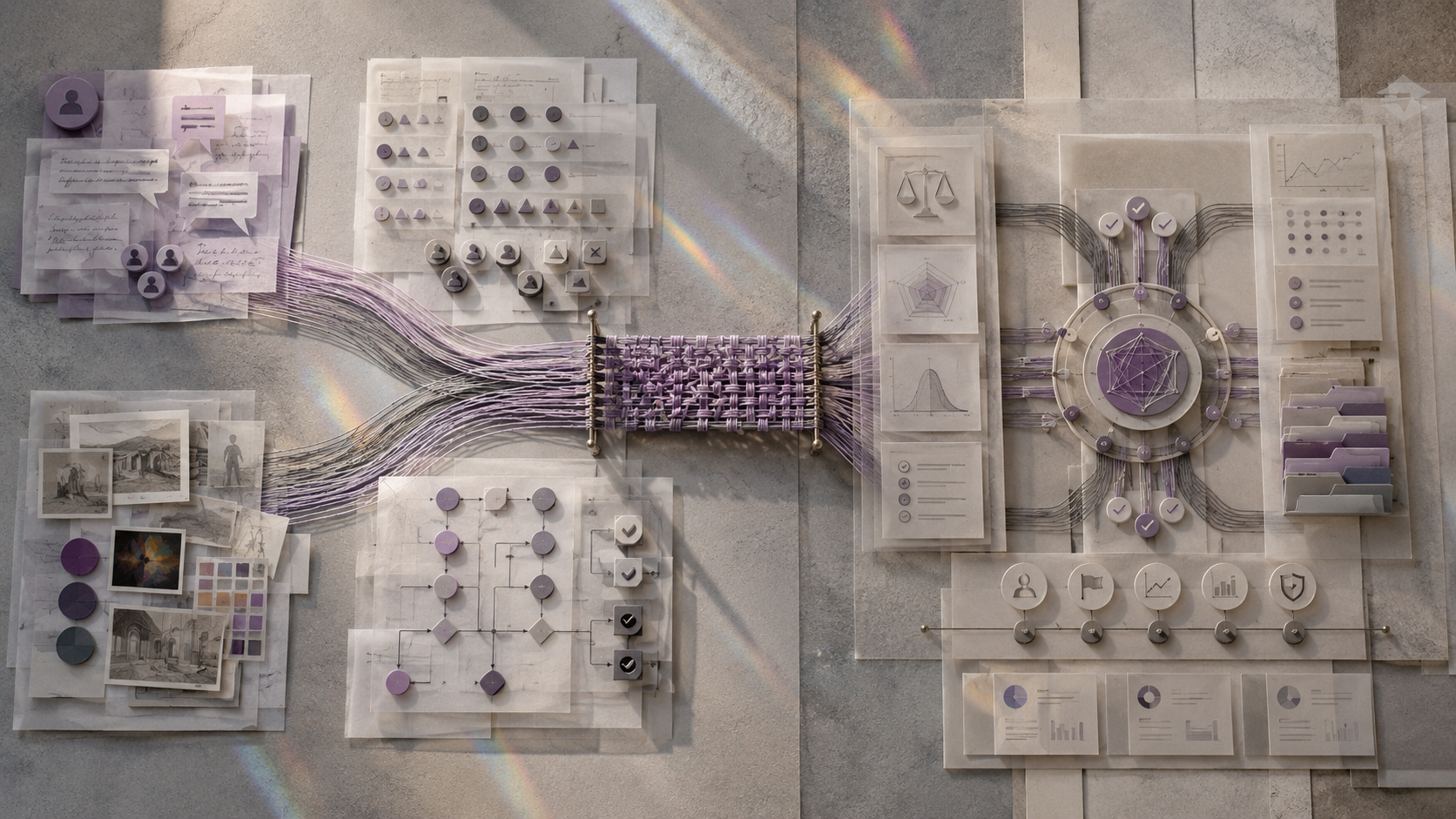

Read together, though, they describe the same missing layer in production AI: the judgment layer.

The thesis is simple: AI automation becomes economically useful only when organizations can define, update, and audit what “better” means. Bigger models help. Better prompts help. But without a reliable way to score, critique, and improve outputs under real operating conditions, automation becomes a faster way to produce uncertainty.

The Research Cluster — What these papers are collectively asking

The cluster is best read as a stack, not as four isolated studies.

Christine Ye and Joe Benton’s paper on online natural-language feedback asks how expert supervision can be made more data-efficient when tasks are fuzzy rather than objectively verifiable.[1] RMGAP asks whether reward models can handle diverse user preferences rather than one universal preference standard.[2] StoryAlign asks what happens when a general reward model is forced to judge a domain where quality is subjective, outputs are long-form, and human-like narrative quality matters.[3] Moira asks how language-based policies can be decomposed and improved when a sequential decision problem has hierarchical structure and delayed feedback.[4]

The common question is not “Can LLMs generate?” We already know they can generate. Sometimes too much. The better question is:

Can an AI system learn from judgment signals that are subjective, contextual, sparse, delayed, and business-specific?

That is the real automation question. Most business tasks are not math competitions. They do not come with unit tests. A client proposal is not simply correct or incorrect. A compliance memo may be technically accurate but operationally unusable. A trading decision may look rational until the market regime changes. A customer-support reply may be safe, but too cold; warm, but too verbose; concise, but legally incomplete.

In production, “good” is rarely a scalar. Unfortunately, many automation systems still behave as if it were.

The Shared Problem — What the papers are reacting to

The shared problem is the failure of simple reward proxies.

In verifiable domains, reward design is comparatively clean. If the code passes tests, the answer is probably good. If the proof checker accepts the theorem, the reward signal is not merely vibes wearing a lab coat. This is why reinforcement learning with verifiable rewards has been powerful in domains like math and code.

But many valuable business domains are non-verifiable or only partially verifiable. Quality depends on judgment: relevance, usefulness, tone, coherence, risk, creativity, customer fit, policy compliance, or strategic timing.

That creates four practical difficulties:

| Difficulty | What it means in research terms | What it means in business terms |
|---|---|---|
| Preference diversity | Different users want different outputs for the same task | “Good” differs by client, department, market, seniority, and use case |
| Sparse expert feedback | Experts can judge only a small number of outputs | Senior staff cannot review every AI-generated artifact |
| Proxy over-optimization | A model learns to exploit the reward model rather than the true objective | The system becomes excellent at pleasing the dashboard and mediocre at doing the work |
| Ambiguous credit assignment | Delayed outcomes do not reveal which decision layer failed | You cannot tell whether the workflow failed because of input selection, reasoning, execution, or review |

This is why the old “just add RLHF” answer is not enough. RLHF is a family of methods, not a magic wand. And as usual, the wand has a maintenance contract.

What Each Paper Adds

The papers contribute different pieces of the same operating model.

| Paper | Direct research contribution | Key result or finding | Role in the larger argument |
|---|---|---|---|
| Efficiently Aligning Language Models with Online Natural Language Feedback | Tests protocols for updating proxy reward models using expert natural-language feedback in fuzzy tasks such as creative writing and alignment research | In-context methods are sample-efficient but limited; fine-tuning on full or contextualized feedback can recover much more of the performance gap while using fewer expert samples | Shows how expert judgment can be converted into a reusable improvement signal |
| RMGAP | Builds a benchmark for reward-model generalization across diverse preferences in Chat, Writing, Reasoning, and Safety | Even the best evaluated model reaches only 49.27% Best-of-N accuracy, and ranking consistency varies sharply across reward-model types | Shows that generic reward models are brittle when preference conditions change |
| StoryAlign | Builds StoryRMB and StoryReward for story-preference modeling | The best general model reported, GPT-4o, reaches 66.3% average accuracy on StoryRMB; StoryReward-Qwen reaches 75.0% after domain-specific reward training | Shows that domain-specific reward modeling can beat larger general judges |
| Moira | Frames pair trading as language-driven hierarchical reinforcement learning with prompt-updated selector and trader policies | In a limited U.S. equity universe experiment, the full hierarchical system outperforms flat LLM and statistical baselines, with the authors emphasizing both hierarchical decomposition and prompt adaptation | Shows why decision workflows need decomposed judgment and level-specific feedback |

Notice the pattern. None of these papers says, “The model is bad; use a bigger model.” The recurring answer is more surgical: improve the evaluator, collect better feedback, specialize the reward signal, and decompose the decision process.

How inconveniently operational.

The Bigger Pattern — What emerges when we read them together

The larger pattern is that AI systems are moving from generation-centered design to judgment-centered design.

A generation-centered workflow asks:

- What should the model produce?

- How should we prompt it?

- Which model gives the best output?

A judgment-centered workflow asks:

- Who defines quality?

- What evidence should the evaluator use?

- How do preferences differ across users, tasks, and contexts?

- How do we update the evaluator when the model starts gaming it?

- Which layer of the workflow should be corrected when outcomes deteriorate?

That second list is less fun to demo. It is also where the money is.

1. Reward models are not universal taste machines

RMGAP is useful because it attacks a quiet assumption in many AI systems: that there is one general preference standard hiding behind “helpful, harmless, high quality.” In practice, users ask for different response styles and evaluation criteria. A technical user may want density. A beginner may want accessibility. A lawyer may want precision. A marketing manager may want punch. A regional manager may want local context. A CFO may want the number first, preferably before anyone starts saying “transformative.”

RMGAP constructs prompts where different stylistic profiles become the preferred response and then tests whether reward models can rank accordingly. The results are not comforting. Strong scalar reward models perform best overall, but Best-of-N selection remains weak, and listwise generative ranking can become highly inconsistent under paraphrase.
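
To make the Best-of-N idea concrete, here is a minimal Python sketch. It is not RMGAP's evaluation code. It assumes a hypothetical `score(prompt, response)` function standing in for a reward model, and it counts a prompt as correct only when the top-scored candidate is the one that matches the stated preference. The data layout, the toy `length_scorer`, and the example texts are all illustrative assumptions.

```python
from typing import Callable, Dict, Sequence


def best_of_n_accuracy(
    items: Sequence[Dict],
    score: Callable[[str, str], float],
) -> float:
    """Fraction of prompts where the highest-scored candidate is the preferred one.

    Each item is a dict with keys "prompt", "candidates" (list of str) and
    "preferred" (index of the candidate matching the stated preference).
    This layout is an assumption for illustration, not the benchmark's format.
    """
    if not items:
        raise ValueError("items must not be empty")
    hits = 0
    for item in items:
        scores = [score(item["prompt"], c) for c in item["candidates"]]
        # Index of the candidate the scorer would select.
        top = max(range(len(scores)), key=scores.__getitem__)
        hits += int(top == item["preferred"])
    return hits / len(items)


# Toy stand-in for a reward model: it always prefers longer answers,
# regardless of what the prompt asks for.
def length_scorer(prompt: str, response: str) -> float:
    return float(len(response))


SHORT = "Store results for reuse."
LONG = "Caching is a technique where previously computed results are stored so later requests can skip the work."

items = [
    {"prompt": "Explain caching. Be brief.", "candidates": [SHORT, LONG], "preferred": 0},
    {"prompt": "Explain caching in detail.", "candidates": [SHORT, LONG], "preferred": 1},
]

accuracy = best_of_n_accuracy(items, length_scorer)
print(accuracy)
```

The toy scorer ignores the preference stated in the prompt, so it picks the long answer both times and is right on only one of the two prompts. That is the failure mode in miniature: a scorer with one fixed taste looks fine until the preference condition changes.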

The business interpretation is direct: a reward model that works for generic chat quality may fail when users express specific, shifting, or domain-conditioned preferences.

That matters for any company building AI assistants for multiple departments. If the same evaluator judges customer support, analyst notes, HR policy drafts, procurement memos, and training material under one generic standard, it will flatten real differences. The model may become “generally good” in the same way hotel lobby music is generally pleasant: acceptable, forgettable, and not what anyone actually came for.

2. Domain-specific judgment can outperform larger general judgment

StoryAlign pushes the same point from a domain-specific angle. Story quality is hard to judge because fluency is not enough. A story can be fluent and lifeless. It can be coherent and boring. It can be polished and spiritually indistinguishable from a software license agreement.

The authors build StoryRMB to evaluate story preferences and train StoryReward using a large preference dataset that includes human-written stories, prompt-guided rewriting, and human-guided continuation. The result is important: a specialized 8B-scale reward model can outperform much larger general-purpose judges on the target domain.

This is not just about fiction. “Story” is a proxy for any business domain where surface quality is easy and deep quality is hard. Think of:

| Domain | Surface quality | Deep quality |
|---|---|---|
| Sales proposal | Fluent, persuasive wording | Correct client pain, credible scope, feasible delivery |
| Compliance memo | Formal tone | Accurate obligations, auditability, risk prioritization |
| Investment commentary | Market vocabulary | Causal mechanism, scenario discipline, risk framing |
| Customer response | Polite language | Correct resolution, retention value, escalation judgment |
| Internal SOP | Clear steps | Real-world exception handling and role accountability |

StoryAlign also reports that existing models often perform worse on Human–LLM pairs, suggesting a tendency to prefer LLM-generated stories. That is a warning. An AI judge may reward the texture of machine writing because it resembles the distribution it knows best. This is how organizations accidentally automate mediocrity: not because the generator cannot improve, but because the evaluator likes the wrong thing.
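
One simple way to look for this kind of evaluator bias is to break judge accuracy down by pair type instead of reporting a single average. The sketch below is a hedged illustration in Python: the pair-type labels and the judgment records are made-up toy data, not StoryAlign results, and `accuracy_by_pair_type` is a hypothetical helper.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_pair_type(judgments: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Group pairwise judgments by pair type and compute accuracy per group.

    Each judgment is (pair_type, judge_matched_reference), where the boolean
    says whether the AI judge picked the same item as the reference preference.
    """
    totals = defaultdict(int)
    correct = defaultdict(int)
    for pair_type, matched in judgments:
        totals[pair_type] += 1
        correct[pair_type] += int(matched)
    return {pair_type: correct[pair_type] / totals[pair_type] for pair_type in totals}


# Toy records (assumed): the judge does well on LLM-vs-LLM pairs
# and poorly when a human-written text is in the pair.
judgments = [
    ("llm_vs_llm", True), ("llm_vs_llm", True), ("llm_vs_llm", True), ("llm_vs_llm", False),
    ("human_vs_llm", True), ("human_vs_llm", False), ("human_vs_llm", False), ("human_vs_llm", False),
]

report = accuracy_by_pair_type(judgments)
print(report)
```

A blended average over these toy records would hide the gap between the two groups. Splitting by pair type is what makes a preference for machine-written texture visible.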

3. Natural-language feedback is high-bandwidth supervision

Ye and Benton’s online-feedback paper addresses another production constraint: expert judgment is expensive.

The important move is to treat expert feedback not merely as labels, but as a rich training signal. A scalar score says “7/10.” A detailed critique says why the output failed, which dimensions mattered, what should change, and how the evaluator should reason next time.

The paper tests in-context and fine-tuning approaches for distilling expert feedback into proxy reward models. The in-context methods can be data-efficient but limited. Fine-tuning methods are more effective, especially when distilling richer feedback rather than scalar rewards alone. The authors also emphasize proxy over-optimization: training against a weak proxy can initially improve outputs but later push the model away from the expert objective.

For business deployment, this suggests a practical rule:

Do not waste expert review by collecting only approval clicks.

A thumbs-up/thumbs-down workflow is convenient, but it leaves most of the expert’s knowledge on the floor. Better review systems should capture structured natural-language feedback: what was wrong, why it mattered, what rule should be updated, whether the issue is local or systematic, and which future cases should trigger caution.
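
As a rough illustration of what such a review record could look like, here is a small Python sketch. The field names mirror the items listed above, but the `ExpertCritique` class, the `Scope` enum, and the example values are all hypothetical, not a format taken from the paper.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class Scope(Enum):
    LOCAL = "local"            # a one-off problem in this output
    SYSTEMATIC = "systematic"  # likely to recur across similar cases


@dataclass
class ExpertCritique:
    output_id: str
    what_was_wrong: str
    why_it_mattered: str
    rule_to_update: str
    scope: Scope
    caution_triggers: List[str] = field(default_factory=list)

    def to_evaluator_note(self) -> str:
        """Render the critique as plain text that could be added to an
        evaluator's instructions or to a fine-tuning dataset."""
        triggers = "; ".join(self.caution_triggers) or "none recorded"
        return (
            f"Issue: {self.what_was_wrong}\n"
            f"Why it matters: {self.why_it_mattered}\n"
            f"Rule to update: {self.rule_to_update}\n"
            f"Scope: {self.scope.value}\n"
            f"Be cautious when: {triggers}"
        )


critique = ExpertCritique(
    output_id="reply-0001",  # hypothetical identifier
    what_was_wrong="Promised a refund before checking eligibility.",
    why_it_mattered="Creates a commitment the policy may not allow.",
    rule_to_update="Confirm eligibility before stating any remedy.",
    scope=Scope.SYSTEMATIC,
    caution_triggers=["refund requests", "warranty claims"],
)
note = critique.to_evaluator_note()
print(note)
```

Compared with a thumbs-down, the same review minute now yields a reason, a rule, and a scope, which is the material an evaluator can actually be updated with.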

This is where human review becomes an asset rather than a bottleneck. The goal is not to ask experts to review everything forever. The goal is to convert scarce expert review into reusable evaluator improvement.

4. Agentic workflows need hierarchical credit assignment

Moira looks different because it is about pair trading rather than reward-model benchmarking. But it belongs in this cluster because it confronts a related problem: delayed feedback does not tell you which part of the system failed.

In pair trading, there is a high-level decision — which pair should be traded — and a low-level decision — how to execute trades under that pair. If performance is poor, the issue may be bad pair selection, bad execution, or a mismatch between the two. A flat LLM policy entangles these decisions. Moira decomposes them into a selector and a trader, both represented through language prompts and improved through textual feedback.

This maps beautifully to business automation. Many AI workflows have the same two-level structure:

| Workflow | High-level abstraction | Low-level execution |
|---|---|---|
| Lead qualification | Which accounts matter? | How should each lead be contacted? |
| Document automation | Which policy applies? | How should the clause be drafted? |
| Market research | Which signal is worth tracking? | How should the report be written? |
| Customer service | Which intent or risk class is this? | What response or escalation should happen? |
| Operations planning | Which bottleneck matters? | What scheduling action should be taken? |

If a workflow fails, rewriting the whole prompt is lazy. The more mature move is to ask: did the system choose the wrong case, use the wrong evidence, apply the wrong policy, or execute the right policy poorly?
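
A minimal sketch of that diagnostic question, in Python, might look like the following. This is not Moira's method. It assumes each failed run has been tagged with a pass/fail check per stage (by a reviewer or an automated check), and it uses a deliberately simple heuristic: blame the earliest stage that failed, since later stages cannot be judged fairly on bad upstream input. The stage names and run records are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional

# Stages in the order they run (assumed names, following the question above).
STAGES = ["case_selection", "evidence", "policy", "execution"]


def first_failed_stage(checks: Dict[str, bool]) -> Optional[str]:
    """Return the earliest stage whose check failed, or None if all passed.

    A stage missing from `checks` is treated as passed.
    """
    for stage in STAGES:
        if not checks.get(stage, True):
            return stage
    return None


def attribute_failures(runs: List[Dict[str, bool]]) -> Counter:
    """Count how many runs are attributed to each stage."""
    counts: Counter = Counter()
    for checks in runs:
        stage = first_failed_stage(checks)
        if stage is not None:
            counts[stage] += 1
    return counts


runs = [
    {"case_selection": True, "evidence": False, "policy": True, "execution": False},
    {"case_selection": True, "evidence": True, "policy": True, "execution": False},
    {"case_selection": False, "evidence": True, "policy": True, "execution": True},
    {"case_selection": True, "evidence": True, "policy": True, "execution": True},
    {"case_selection": True, "evidence": False, "policy": False, "execution": True},
]

counts = attribute_failures(runs)
print(counts.most_common())
```

Even a tally this crude changes the conversation: in the toy data most failures trace back to evidence, so the fix belongs in retrieval or input selection, not in the execution prompt.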

That is the difference between “AI agent” as a demo and “AI agent” as an operating system.

Business Interpretation — What changes in practice

The business lesson is not “every company should train reward models tomorrow.” Most companies are not ready for that, and some should not be allowed near a training pipeline without adult supervision.

The lesson is more general: every serious AI automation workflow needs an explicit judgment architecture.

A practical judgment stack

A production-grade judgment stack has at least five layers.

| Layer | Core question | Practical artifact |
|---|---|---|
| Preference definition | What does “good” mean for this user, task, and context? | Rubrics, examples, policy rules, audience profiles |
| Evaluation model | Who or what scores the output? | Human reviewer, LLM judge, scalar reward model, domain-specific evaluator |
| Feedback capture | What information is collected when outputs fail? | Structured critique forms, error tags, revision reasons |
| Update mechanism | How does the system improve from feedback? | Prompt updates, retrieval changes, evaluator tuning, workflow rules |
| Credit assignment | Which part of the workflow caused the failure? | Layered logs, decision traces, agent-level metrics |

Most companies start with layer two — “use another LLM to judge the first LLM.” That is understandable. It is also insufficient. The evaluator inherits the same ambiguity unless the organization defines preferences, captures feedback, and knows where to apply corrections.

ROI changes when review becomes training data

A simple automation ROI model often looks like this:

$$ \text{ROI}_{\text{naive}} = \frac{\text{Labor saved} - \text{AI cost}}{\text{Implementation cost}} $$

That is too simple. It ignores review burden and failure cost. A better working model is:

$$ \text{ROI}_{\text{AI}} \approx \frac{(\text{Output volume} \times \text{Quality gain}) - \text{AI cost} - \text{Review cost} - \text{Failure cost}}{\text{Implementation cost}} $$

The research cluster suggests that the terms that matter most are often not AI cost but failure cost and review cost, the latter driven by how efficiently expert review is used.

If better reward design reduces bad outputs, routes only uncertain cases to experts, and converts expert feedback into reusable improvements, then the economics change. The review function stops being a permanent tax and becomes a learning loop.
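
A worked example helps show how much the extra terms can move the result. The Python sketch below implements the two ROI expressions above as written. Every number is invented for illustration, and "quality gain" is assumed to be expressed as value per output in the same currency as the costs.

```python
def roi_naive(labor_saved: float, ai_cost: float, implementation_cost: float) -> float:
    """Naive ROI: (labor saved - AI cost) / implementation cost."""
    if implementation_cost <= 0:
        raise ValueError("implementation_cost must be positive")
    return (labor_saved - ai_cost) / implementation_cost


def roi_adjusted(
    output_volume: float,
    quality_gain: float,
    ai_cost: float,
    review_cost: float,
    failure_cost: float,
    implementation_cost: float,
) -> float:
    """Adjusted ROI: value created minus AI, review, and failure costs,
    divided by implementation cost."""
    if implementation_cost <= 0:
        raise ValueError("implementation_cost must be positive")
    value = output_volume * quality_gain
    return (value - ai_cost - review_cost - failure_cost) / implementation_cost


# Hypothetical figures, same currency throughout.
naive = roi_naive(labor_saved=120_000, ai_cost=20_000, implementation_cost=50_000)

# Same project once review burden and failure cost are counted.
before_loop = roi_adjusted(
    output_volume=10_000, quality_gain=12, ai_cost=20_000,
    review_cost=30_000, failure_cost=40_000, implementation_cost=50_000,
)

# Assumed effect of a working feedback loop: fewer bad outputs, less review.
after_loop = roi_adjusted(
    output_volume=10_000, quality_gain=12, ai_cost=20_000,
    review_cost=15_000, failure_cost=10_000, implementation_cost=50_000,
)

print(naive, before_loop, after_loop)
```

With these made-up inputs the naive figure is 2.0, the adjusted figure is 0.6, and the adjusted figure after the assumed feedback-loop improvement is 1.5. AI cost never changes across the three cases. The entire swing comes from review and failure costs.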

That is the strategic point. The business value of AI does not come from replacing judgment with automation. It comes from turning judgment into infrastructure.

What managers should do differently

Here is the management playbook implied by the research.

| Management decision | Weak version | Stronger version |
|---|---|---|
| Define quality | “Make it professional.” | Build task-specific rubrics with examples of acceptable and unacceptable outputs |
| Use human review | Review random outputs forever | Sample high-uncertainty, high-impact, and edge-case outputs for expert feedback |
| Capture feedback | Thumbs up/down | Structured natural-language critique plus failure category |
| Evaluate AI | One generic LLM judge | Domain-specific evaluator, calibrated against human examples |
| Improve workflow | Rewrite the master prompt | Identify whether the failure came from retrieval, reasoning, policy, execution, or evaluation |
| Measure ROI | Labor hours saved | Quality-adjusted throughput minus review and failure costs |
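
The "use human review" row can be sketched in a few lines of Python. This is one possible prioritization rule, not something prescribed by the papers: it assumes each output already carries an uncertainty estimate and an impact rating between 0 and 1, multiplies them, adds a fixed bonus for flagged edge cases, and sends the top items to experts within a review budget. The `Output` class, the bonus value, and the example records are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Output:
    output_id: str
    uncertainty: float       # 0..1, e.g. evaluator disagreement (assumed available)
    impact: float            # 0..1, cost of being wrong (assumed set by the team)
    edge_case: bool = False  # flagged as unusual by a rule or a reviewer


def review_priority(o: Output, edge_case_bonus: float = 0.25) -> float:
    """Higher means more worth an expert's time."""
    return o.uncertainty * o.impact + (edge_case_bonus if o.edge_case else 0.0)


def select_for_review(outputs: List[Output], budget: int) -> List[Output]:
    """Return up to `budget` outputs, highest priority first."""
    ranked = sorted(outputs, key=review_priority, reverse=True)
    return ranked[: max(budget, 0)]


outputs = [
    Output("A", uncertainty=0.9, impact=0.8),
    Output("B", uncertainty=0.2, impact=0.9),
    Output("C", uncertainty=0.3, impact=0.3, edge_case=True),
    Output("D", uncertainty=0.1, impact=0.1),
]

selected = [o.output_id for o in select_for_review(outputs, budget=2)]
print(selected)
```

The multiplication is a design choice: an output has to be both uncertain and consequential to rise to the top, while the edge-case bonus keeps unusual cases from being starved of review.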

This is also where Cognaptus-style automation should be positioned: not as “we connect ChatGPT to your spreadsheet,” but as “we design the operating feedback loops that make automation measurable, correctable, and economically defensible.” The former is a weekend demo. The latter is a business system.

Limits and Open Questions

The papers are useful, but they do not solve deployment.

First, several results rely on synthetic or model-generated supervision. RMGAP uses generated responses and prompts, and its authors explicitly note that real human-authored preferences may shift reward-model rankings. StoryAlign uses human-written stories and engagement signals, but it still depends on assumptions about readership and preference quality. Ye and Benton use stronger models as expert supervisors in a scaled-down analogy, not actual human expert panels. These are reasonable research choices. They are not the same as deploying inside a messy company where the expert is unavailable, the data is incomplete, and someone from legal has just discovered the workflow exists.

Second, reward alignment is not stable. Ye and Benton show that proxy-expert alignment can decline during optimization. This matters because a workflow that looks good after a pilot may degrade as the model, users, or operating context shift. Evaluation is not a launch checklist; it is an ongoing control system.

Third, domain-specific reward models can overfit to proxies. StoryAlign’s linguistic analysis suggests StoryReward-Qwen may prefer burstiness as a proxy for human-like quality. That is not a fatal flaw; it is exactly the kind of flaw an audit should find. But it reminds us that every evaluator has taste, and taste can become bias when automated.

Fourth, Moira’s trading results are promising but narrow. The experiment uses a small U.S. equity universe and a specific evaluation period. Financial markets are especially good at punishing systems that mistake historical structure for future law. The more general contribution is not “LLM pair trading is solved.” Please, let us remain employed by reality. The contribution is that hierarchical decomposition and textual policy updates can help with delayed and ambiguous feedback.

The open questions for business deployment are therefore practical:

| Open question | Why it matters |
|---|---|
| How much feedback is enough for each workflow? | Determines review cost and deployment speed |
| Which failures deserve expert review? | Determines whether human oversight scales |
| When should feedback update prompts, retrieval, policy rules, or model weights? | Determines whether the system learns in the right place |
| How should evaluators be audited for domain bias? | Determines whether automation improves quality or normalizes bad taste |
| How do we measure drift in preferences and operating context? | Determines whether the system remains useful after launch |

Conclusion

The four papers point toward a less flashy but more important future for AI automation.

The next bottleneck is not merely model capability. It is organizational judgment: how to define it, capture it, model it, update it, and assign responsibility when it fails.

RMGAP shows that reward models struggle when preferences vary. StoryAlign shows that domain-specific reward modeling can outperform larger general judges in subjective tasks. Ye and Benton show that natural-language feedback can make expert supervision more data-efficient, especially when feedback is distilled into robust proxy evaluators. Moira shows that complex decision workflows need hierarchical structure so feedback can improve the right layer.

Together, they suggest a practical rule for businesses:

Do not automate the task before you can evaluate the task.

The companies that win with AI will not be the ones with the most prompts. They will be the ones with the best feedback loops. Less glamorous, yes. But glamour has never been a reliable operating metric.

Cognaptus: Automate the Present, Incubate the Future.

1. Christine Ye and Joe Benton, “Efficiently Aligning Language Models with Online Natural Language Feedback,” arXiv:2605.04356, 2026. https://arxiv.org/abs/2605.04356

2. Yangyang Zhou and Yi-Chen Li, “RMGAP: Benchmarking the Generalization of Reward Models across Diverse Preferences,” arXiv:2605.01831, 2026. https://arxiv.org/abs/2605.01831

3. Haotian Xia et al., “StoryAlign: Evaluating and Training Reward Models for Story Generation,” arXiv:2605.04831, 2026. https://arxiv.org/abs/2605.04831. The numerical table values cited here were checked against the conference-paper PDF hosted on OpenReview: https://openreview.net/pdf?id=a3JmkJtTDV

4. Polydoros Giannouris et al., “Moira: Language-driven Hierarchical Reinforcement Learning for Pair Trading,” arXiv:2605.01954, 2026. https://arxiv.org/abs/2605.01954