Context Is the New Attack Surface

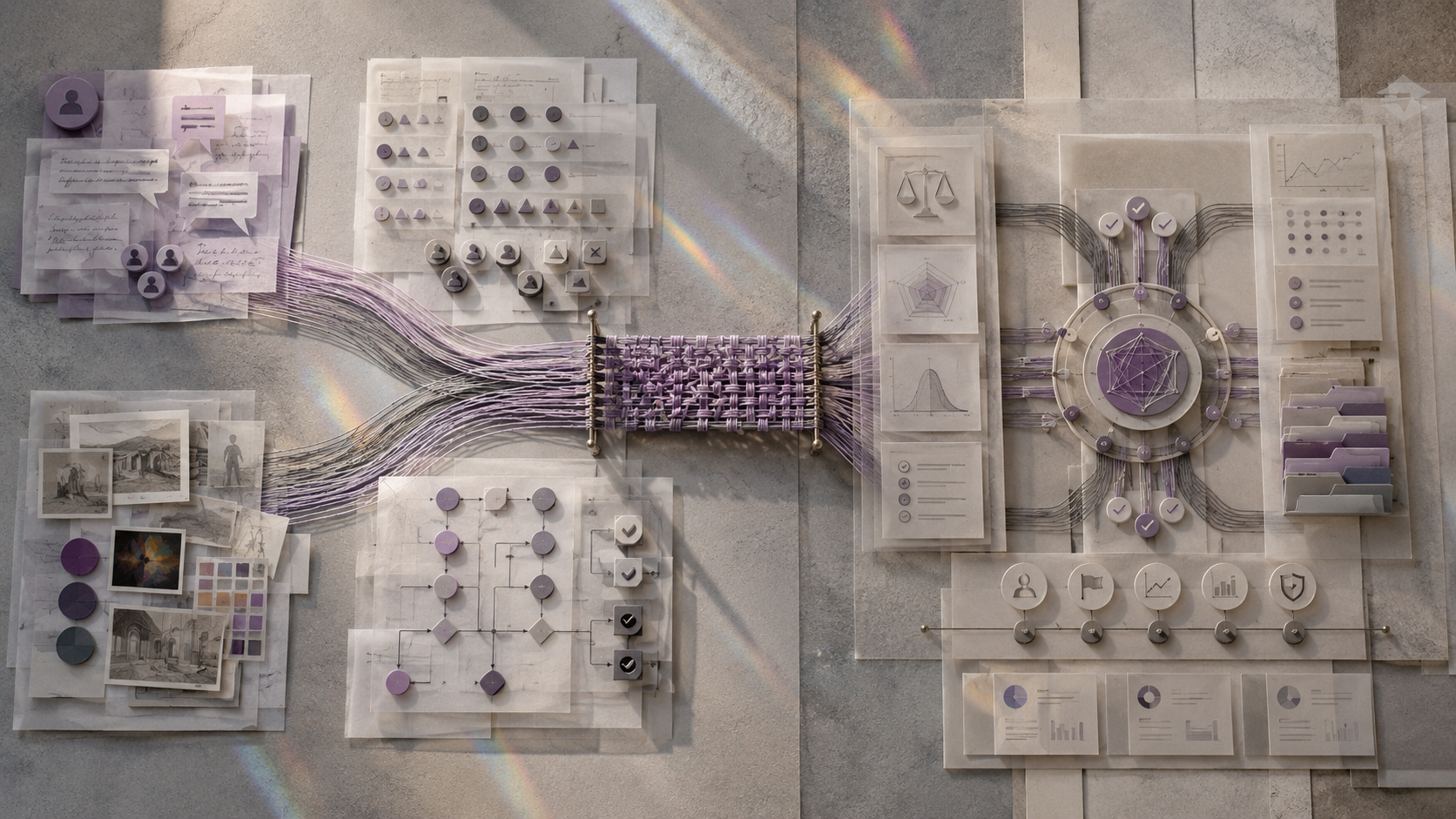

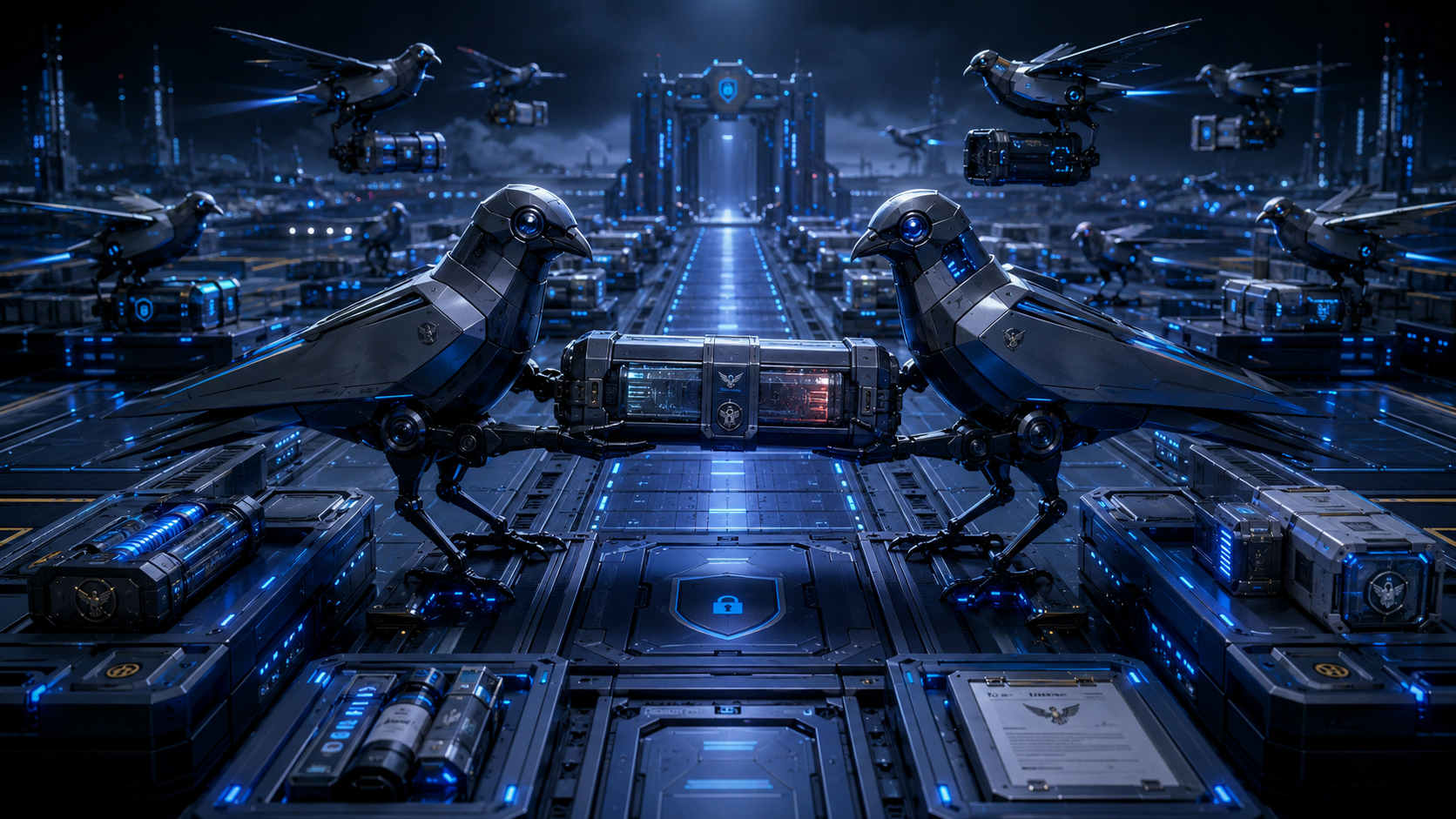

A policy can block a sentence. It has a harder time blocking a story.

That is the uncomfortable lesson from Jailbreak Mimicry, a recent arXiv paper by Pavlos Ntais on automated discovery of narrative-based jailbreaks for large language models.1 The paper trains a compact attacker model to transform harmful goals into plausible narrative or functional contexts, then tests whether larger models still produce harmful output. The headline number is easy to quote: the trained attacker reaches 81.0% attack success against GPT-OSS-20B on a held-out 200-item test set. The business lesson is less flashy and more useful: safety failures may not live in the forbidden content alone. They often live in the surrounding work story that makes the request look legitimate. ...