Opening — Why this matters now

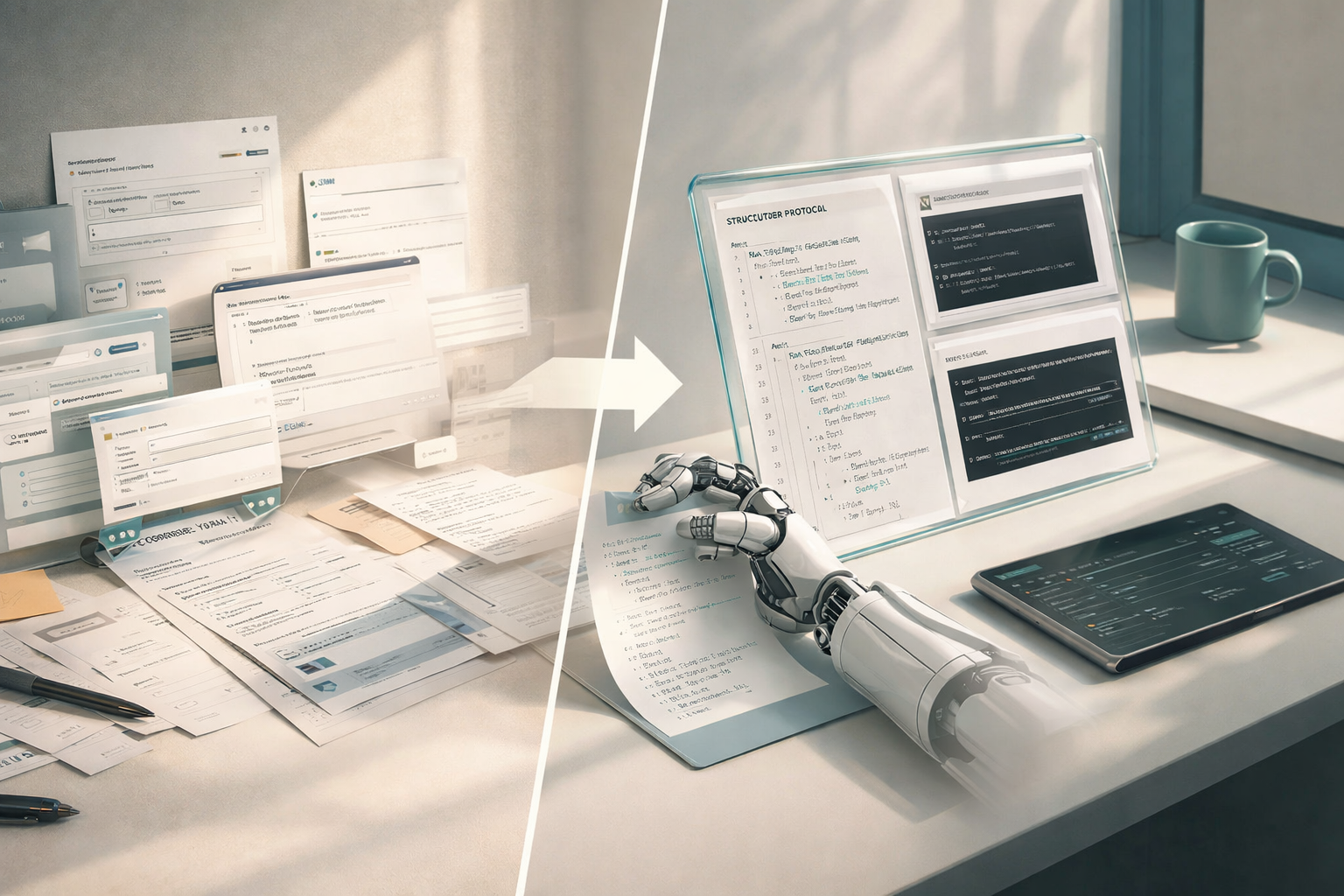

The industry is currently obsessed with what agents can do. The more uncomfortable question is how they do it.

Most AI agent systems today operate like clever improvisers—stringing together prompts, APIs, and UI hacks into something that works most of the time. That’s acceptable for demos. It is not acceptable for production systems handling money, identity, or operations.

The paper introduces ANX (AI Native eX)—a protocol-first approach that reframes agents not as prompt-driven improvisers, but as participants in a structured, governed interaction system. It is less glamorous than new model releases. It is also far more consequential.

Background — The quiet fragmentation problem

Agent systems currently fall into three imperfect camps:

| Approach | Strength | Structural Weakness |
|---|---|---|
| GUI Automation | Works everywhere | Token-heavy, fragile, insecure |
| MCP / Tool Calling | Structured | Pre-installed, static, limited |
| Skill Systems | Reusable | Fragmented, UI-dependent |

As noted in the paper, none of these solve all four critical dimensions simultaneously: efficiency, discovery, security, and collaboration.

The deeper issue is architectural: these systems are tool-first, not protocol-first.

Which means:

- Each tool defines its own interaction logic

- Agents must adapt constantly

- Security is bolted on, not designed in

In other words, the industry has been optimizing interfaces, not interaction models.

Analysis — What ANX actually changes

ANX introduces two core ideas that matter more than anything else in the paper:

- Structured semantics (ANX Markup)

- 3EX architecture (Expression–Exchange–Execution)

Let’s unpack both—because this is where the paper stops being theoretical and starts being useful.

1. From prompts to protocols

ANX replaces natural language instructions with a structured format called ANX Markup.

This is not just about readability. It changes execution reliability.

| Property | Natural Language | ANX Markup |
|---|---|---|
| Ambiguity | High | Minimal |
| Token Cost | High | Low |
| Machine Executability | Indirect | Direct |
| SOP Reliability | Fragile | Deterministic |

The paper explicitly argues that natural language—even with techniques like Chain-of-Thought—cannot eliminate ambiguity for multi-step workflows.

ANX’s response is simple: stop relying on language where structure is required.

Subtle, but decisive.
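To make the contrast concrete, here is a minimal Python sketch of what "structure instead of language" buys. It does not reproduce ANX Markup: the `Step` shape, the `ALLOWED` table, and the action names are hypothetical and chosen only for illustration. The point is that a structured step can be checked mechanically before anything runs, which a free-text instruction cannot.

```python
from dataclasses import dataclass, field

# Hypothetical schema: action names and parameters are illustrative,
# not taken from the ANX Markup specification.
ALLOWED = {
    "fill_field": {"name", "value"},
    "submit_form": set(),
}

@dataclass
class Step:
    action: str                                  # command the executor should run
    params: dict = field(default_factory=dict)   # named arguments for that command

def validate(step: Step) -> list[str]:
    """Return a list of problems; an empty list means the step is well-formed."""
    if step.action not in ALLOWED:
        return [f"unknown action: {step.action}"]
    expected = ALLOWED[step.action]
    given = set(step.params)
    missing = sorted(expected - given)
    extra = sorted(given - expected)
    return ([f"missing param: {p}" for p in missing]
            + [f"unexpected param: {p}" for p in extra])

if __name__ == "__main__":
    print(validate(Step("fill_field", {"name": "email"})))
```

A prompt such as "put the email in the form" leaves the field, the value, and the next action open to interpretation; the structured step either validates or is rejected before execution.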

2. The 3EX architecture: separating concerns (finally)

Most agent systems mix everything together:

- Task definition

- Tool discovery

- Execution

ANX splits them into three layers:

| Layer | Function | Business Meaning |
|---|---|---|
| Expression | Define task (ANX Markup) | Standardized intent |
| Exchange | Discover tools (ANXHub) | Dynamic marketplace |
| Execution | Run commands (Core + CLI) | Reliable operations |

This decoupling does two things:

- Reduces token overhead (only relevant information flows)

- Lowers agent cognitive load (no mixed responsibilities)

Or, more bluntly: it removes unnecessary thinking from the agent.
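The separation can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the ANX implementation: the `task` dictionary stands in for an Expression-layer document, `REGISTRY` stands in for ANXHub, and the capability name `text.upper` is invented.

```python
from typing import Callable

Handler = Callable[[dict], dict]

# Expression: a declarative task (hypothetical shape, not real ANX Markup).
task = {"capability": "text.upper", "input": {"text": "hello"}}

# Exchange: maps capability names to handlers; a stand-in for ANXHub.
REGISTRY: dict[str, Handler] = {
    "text.upper": lambda args: {"text": args["text"].upper()},
}

def discover(capability: str) -> Handler:
    """Exchange layer: resolve a capability name to a handler, or fail loudly."""
    try:
        return REGISTRY[capability]
    except KeyError:
        raise LookupError(f"no provider for {capability!r}") from None

def execute(task: dict) -> dict:
    """Execution layer: run the resolved handler on the task input."""
    handler = discover(task["capability"])
    return handler(task["input"])

if __name__ == "__main__":
    print(execute(task))
```

Each function touches only its own concern: the task never names a concrete tool, discovery never runs anything, and execution never interprets intent.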

3. Security as architecture, not policy

This is where ANX quietly outclasses most current systems.

Instead of encrypting data or adding confirmation prompts, ANX redesigns the interaction path itself:

- Sensitive inputs go UI → Core, bypassing the LLM entirely

- The agent only receives reference tokens, not raw data

- Critical actions require human-only confirmation with no programmatic bypass

This is not a patch. It is a constraint baked into the system.

| Security Model | LLM Sees Sensitive Data? | Bypass Risk |
|---|---|---|
| Typical Agents | Yes | High |
| Encrypted Agents | Yes (before encryption) | Medium |
| ANX | No | Structurally prevented |

If you are building anything financial, regulated, or identity-related, this difference is not optional.
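The data flow behind the three rules above can be sketched as follows. The `Core` class and its method names are hypothetical, and a single Python process cannot actually enforce the trust boundary; in a real deployment the UI-facing methods would live on a channel the agent has no way to call. The sketch only shows who sees what.

```python
import secrets

class Core:
    """Hypothetical trusted component that keeps secrets out of the LLM context."""

    def __init__(self) -> None:
        self._vault: dict[str, str] = {}    # reference token -> raw value
        self._confirmed: set[str] = set()   # references a human has approved

    def store_from_ui(self, value: str) -> str:
        """UI-only path: keep the raw value and hand back an opaque reference."""
        ref = f"ref_{secrets.token_hex(8)}"
        self._vault[ref] = value
        return ref

    def confirm_from_ui(self, ref: str) -> None:
        """UI-only path: record that a human approved one action for this reference."""
        self._confirmed.add(ref)

    def execute(self, command: str, ref: str) -> str:
        """Agent-facing path: the raw value is resolved here and never returned."""
        if ref not in self._confirmed:
            raise PermissionError("human confirmation required")
        self._confirmed.discard(ref)        # one confirmation covers one action
        value = self._vault[ref]
        # A real Core would pass `value` to the downstream system at this point.
        return f"{command} executed for {ref} ({len(value)} characters submitted)"
```

The agent only ever handles the string returned by `store_from_ui`, so nothing it logs, summarizes, or sends to a model can contain the raw value.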

4. SOPs become executable systems

One of the more underrated contributions is machine-executable SOPs.

Instead of describing workflows in documents or prompts, ANX encodes them directly:

- Explicit dependencies (`sources`)

- Conditional routing (`targets`)

- Deterministic execution

The result:

| Feature | Traditional SOP | ANX SOP |
|---|---|---|
| Interpretation | Human / LLM | System |
| Determinism | Low | High |
| Multi-agent support | Ad-hoc | Native |
| Auditability | Weak | Built-in |

The resume-screening case in the paper shows how agents and humans coordinate under a single workflow without ambiguity (pages 14–15).

This is not automation.

This is operational infrastructure.
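A rough sketch of how `sources` and `targets` could drive execution is shown below. The semantics are assumed for illustration (`sources` as upstream steps whose outputs a step needs, `targets` as a function choosing the next step), and the step names, the scoring rule, and the sample data are invented; none of it is taken from the paper's resume-screening workflow.

```python
from typing import Any

# Hypothetical SOP: each step declares its dependencies and its routing rule.
SOP: dict[str, dict[str, Any]] = {
    "parse": {
        "sources": [],
        "run": lambda inputs: {"years": 6},   # invented sample data
        "targets": lambda out: "score",
    },
    "score": {
        "sources": ["parse"],
        "run": lambda inputs: min(inputs["parse"]["years"] * 10, 100),
        "targets": lambda out: "advance" if out >= 50 else "human_review",
    },
    "advance": {
        "sources": ["score"],
        "run": lambda inputs: "advanced",
        "targets": lambda out: None,
    },
    "human_review": {
        "sources": ["score"],
        "run": lambda inputs: "queued for reviewer",
        "targets": lambda out: None,
    },
}

def run_sop(sop: dict[str, dict[str, Any]], start: str) -> tuple[list[str], dict[str, Any]]:
    """Run steps in routed order; return the audit trace and every step output."""
    outputs: dict[str, Any] = {}
    trace: list[str] = []
    step = start
    while step is not None:
        if step in outputs:
            raise RuntimeError(f"cycle detected at {step}")
        node = sop[step]
        missing = [s for s in node["sources"] if s not in outputs]
        if missing:
            raise RuntimeError(f"{step} is missing sources: {missing}")
        outputs[step] = node["run"]({s: outputs[s] for s in node["sources"]})
        trace.append(step)
        step = node["targets"](outputs[step])
    return trace, outputs
```

Because routing is a function of recorded outputs rather than of a model's reading of a document, the same inputs always produce the same trace, and the trace doubles as an audit log.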

Findings — What actually improves

The paper provides empirical results on a controlled form-filling task.

Efficiency gains

| Method | Task Tokens (GPT-4o) | Execution Time (s) |
|---|---|---|
| GUI | 8.3k | 48.2 |
| MCP | 6.3k | 39.0 |
| ANX | 2.8k | 16.5 |

Relative improvements

| Metric | Improvement vs MCP | Improvement vs GUI |
|---|---|---|
| Token Reduction | ~55.6% | ~66.3% |
| Time Reduction | ~57.7% | ~65.8% |
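The relative figures follow directly from the efficiency table: each is (baseline − ANX) / baseline. A short Python check, using only the numbers reported above:

```python
# (task tokens in thousands, execution time in seconds) from the efficiency table
measurements = {
    "GUI": (8.3, 48.2),
    "MCP": (6.3, 39.0),
    "ANX": (2.8, 16.5),
}

def reduction(baseline: float, new: float) -> float:
    """Percentage reduction of `new` relative to `baseline`, to one decimal place."""
    return round((baseline - new) / baseline * 100, 1)

anx_tokens, anx_time = measurements["ANX"]
for name in ("MCP", "GUI"):
    base_tokens, base_time = measurements[name]
    print(f"vs {name}: tokens -{reduction(base_tokens, anx_tokens)}%, "
          f"time -{reduction(base_time, anx_time)}%")
```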

The reason is not model optimization—it’s architectural.

ANX moves work out of the LLM context and into the execution layer.

Less thinking. More doing.

Predictably faster.

Implications — Where this actually matters

This paper is not about building better demos.

It is about making agents viable in environments that care about:

1. Cost

Token efficiency directly translates to operational cost.

If your agent runs millions of tasks, cutting tokens per task by roughly 55–66% is not an incremental improvement; it is a different business model.
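A back-of-the-envelope calculation shows the scale. The token counts come from the form-filling benchmark above; the price of 5 USD per million tokens and the volume of one million tasks are assumptions picked for illustration, and a single blended rate ignores any difference between input and output pricing.

```python
# Tokens per task from the benchmark table (GPT-4o).
TOKENS_PER_TASK = {"GUI": 8_300, "MCP": 6_300, "ANX": 2_800}

def total_cost(tokens_per_task: int, tasks: int, usd_per_million_tokens: float) -> float:
    """Total spend in USD at a single blended token price."""
    return tasks * tokens_per_task / 1_000_000 * usd_per_million_tokens

if __name__ == "__main__":
    TASKS = 1_000_000   # assumed volume
    PRICE = 5.0         # assumed USD per million tokens, not a quoted rate
    for method, tokens in TOKENS_PER_TASK.items():
        print(f"{method}: ${total_cost(tokens, TASKS, PRICE):,.0f}")
```

Under these assumptions the spread between MCP and ANX is 17,500 USD per million tasks, and it scales linearly with both volume and price.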

2. Compliance

ANX’s LLM-blind sensitive data handling aligns naturally with:

- Financial regulations

- Data privacy laws

- Enterprise security policies

Most current agent frameworks do not.

3. Scalability

The ANXHub concept—a dynamic, zero-install marketplace—points toward a future where:

- Agents do not “install tools”

- They discover and compose capabilities in real time

This is closer to how the internet works than how software works today.

4. Human control (without friction)

ANX does something rare: it keeps humans in the loop only where it matters.

- High-confidence tasks → automated

- Boundary cases → human validation

Not everything needs a human. But the important things do.
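The routing rule can be written down in a few lines. This is a sketch under assumptions: the paper's excerpted material here does not specify how confidence is measured or where the boundary sits, so the `0.9` threshold and the `critical` flag are placeholders.

```python
def route(confidence: float, critical: bool = False, threshold: float = 0.9) -> str:
    """Decide whether a task runs automatically or waits for a person.

    `threshold` is an assumed cut-off; critical actions always go to a human,
    regardless of how confident the agent is.
    """
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    if critical or confidence < threshold:
        return "human_validation"
    return "automated"

if __name__ == "__main__":
    print(route(0.97))
    print(route(0.62))
    print(route(0.99, critical=True))
```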

Conclusion — The shift you probably underestimated

The industry keeps asking:

“How do we make agents smarter?”

ANX quietly answers a different question:

“How do we make agents reliable systems?”

Protocol-first design is not exciting. It does not trend on X.

But it is how messy, probabilistic intelligence becomes something businesses can actually deploy.

And once that shift happens, the bottleneck is no longer model capability.

It is architecture.

Cognaptus: Automate the Present, Incubate the Future.