Opening — Why This Matters Now

Medical AI has entered its confident phase. Vision-language models can now look at a chest X-ray and produce impressively fluent explanations. The problem? Fluency is not fidelity.

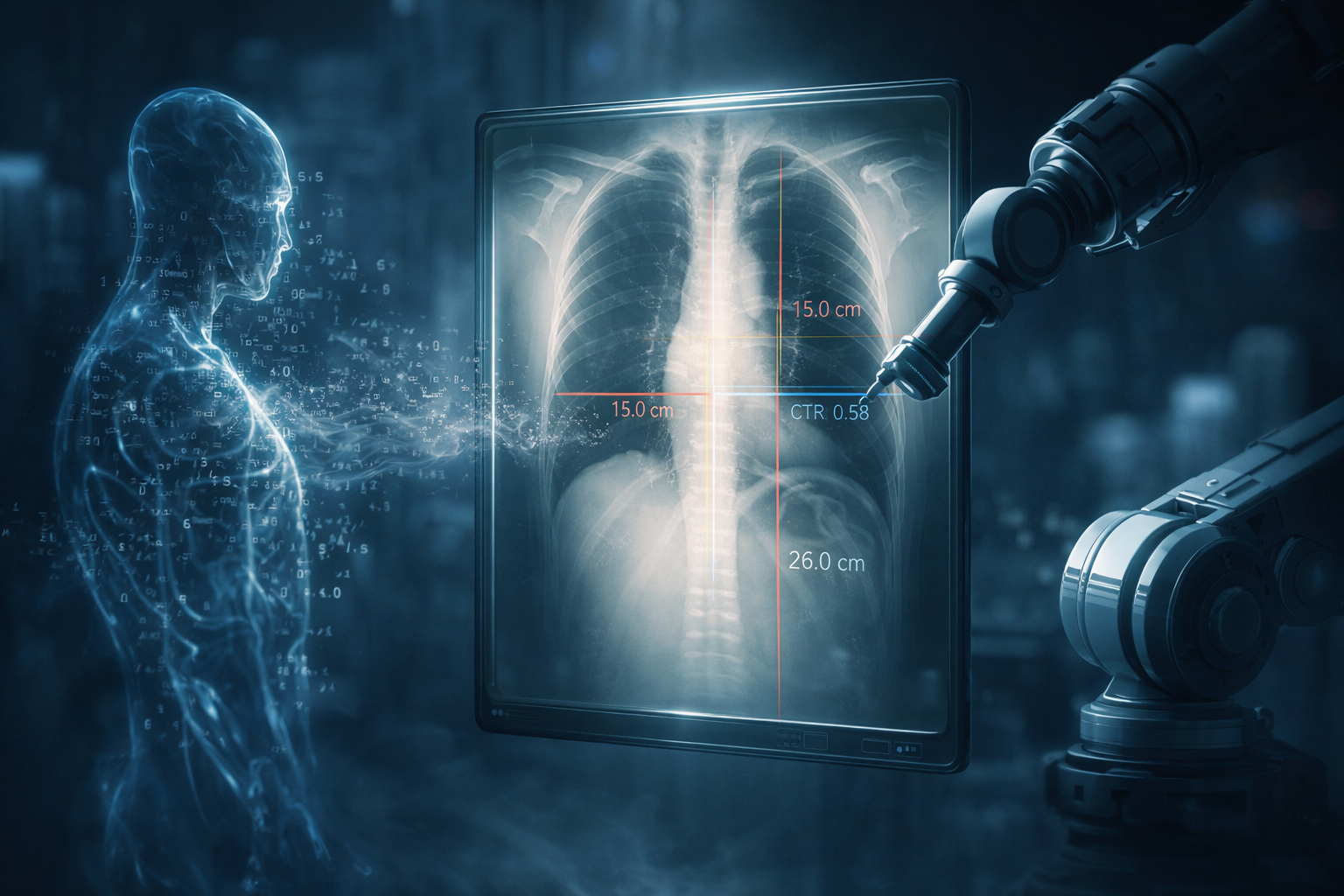

In safety-critical domains like radiology, sounding correct is not the same as being correct — and it certainly isn’t the same as being verifiable. When an AI claims cardiomegaly, clinicians don’t want poetry. They want the cardiothoracic ratio (CTR), the measurement boundaries, and ideally, the overlay drawn directly on the image.

The recent work on CXReasonAgent reframes the conversation: instead of scaling models to hallucinate more persuasively, integrate clinically grounded tools so the reasoning is anchored in extractable, deterministic evidence.

This is not about bigger models. It is about accountable reasoning.

Background — The Limits of LVLM Confidence

Large Vision-Language Models (LVLMs) have demonstrated strong performance in multimodal reasoning. Yet multiple studies show recurring weaknesses in medical contexts:

- Responses appear plausible but are not grounded in image-derived evidence.

- Explanations are textual only, without verifiable measurement overlays.

- Extending to new diagnostic tasks requires retraining or fine-tuning.

In clinical imaging, diagnostic reasoning is inherently multi-step:

- Identify anatomical regions.

- Extract quantitative measurements or spatial observations.

- Apply diagnostic criteria.

- Produce a conclusion.

Most LVLM pipelines collapse this into a single generative step.

The result? High coverage, low faithfulness.

Which is a polite way of saying: it sounds right, but you cannot audit it.

Analysis — What CXReasonAgent Actually Does Differently

CXReasonAgent integrates a large language model with clinically grounded diagnostic tools. The architecture has three stages:

1. Query Interpretation & Tool Planning

The agent classifies each user request into one of two types:

- Diagnostic Evidence Request (e.g., “What is the cardiothoracic ratio?”)

- Visual Evidence Request (e.g., “Can you show the measurement overlay?”)

It then selects the appropriate diagnostic tool.
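The planning stage can be pictured with a small sketch. This is an illustration only, not the authors' implementation: in CXReasonAgent the classification is done by the LLM, whereas the keyword rules, the `RequestType` enum, and the tool names below are all hypothetical stand-ins.

```python
from enum import Enum
from typing import Optional, Tuple


class RequestType(Enum):
    DIAGNOSTIC_EVIDENCE = "diagnostic_evidence"
    VISUAL_EVIDENCE = "visual_evidence"


# Hypothetical cue words that signal a request for an overlay or annotation.
VISUAL_CUES = ("show", "overlay", "draw", "highlight", "visualize")

# Hypothetical mapping from task keywords to tool names.
TASK_TO_TOOL = {
    "cardiothoracic ratio": "ctr_tool",
    "cardiomegaly": "ctr_tool",
    "trachea": "trachea_alignment_tool",
}


def classify_request(query: str) -> RequestType:
    """Keyword stand-in for the LLM's request classification."""
    text = query.lower()
    if any(cue in text for cue in VISUAL_CUES):
        return RequestType.VISUAL_EVIDENCE
    return RequestType.DIAGNOSTIC_EVIDENCE


def plan(query: str) -> Tuple[RequestType, Optional[str]]:
    """Return the request type and the selected tool (None if no task matches)."""
    text = query.lower()
    request_type = classify_request(query)
    for keyword, tool_name in TASK_TO_TOOL.items():
        if keyword in text:
            return request_type, tool_name
    return request_type, None


if __name__ == "__main__":
    print(plan("What is the cardiothoracic ratio?"))
    print(plan("Can you show the measurement overlay?"))
```

The second query matches no task keyword, so the sketch returns no tool. A real agent would presumably resolve the task from earlier dialogue turns, which this toy version does not attempt.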

2. Clinically Grounded Tool Execution

The diagnostic tool (based on CheXStruct) performs deterministic, rule-based geometric computations derived from radiologist-defined criteria.

Outputs include:

| Evidence Type | Example Output |
|---|---|
| Quantitative measurement | CTR = 0.42 |
| Spatial observation | Trachea midline alignment |
| Diagnostic criterion | Cardiomegaly threshold = 0.50 |
| Visual evidence | Annotated image with boundary overlays |

Crucially, the extraction is deterministic. Given the same image, the evidence is reproducible.
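To make "deterministic, rule-based geometric computation" concrete, here is a minimal sketch of a CTR calculation. It uses the standard definition of the cardiothoracic ratio (cardiac width divided by thoracic width) and the 0.50 threshold from the table above. The function name, the input format (boundary x-coordinates in pixels), and the strict `>` comparison are assumptions for illustration; how CheXStruct actually locates the boundaries is not described here.

```python
from dataclasses import dataclass

CARDIOMEGALY_THRESHOLD = 0.50  # criterion value quoted in the table above


@dataclass(frozen=True)
class CtrEvidence:
    cardiac_width_px: float
    thoracic_width_px: float
    ctr: float
    threshold: float
    exceeds_threshold: bool


def compute_ctr(
    heart_left_x: float,
    heart_right_x: float,
    thorax_left_x: float,
    thorax_right_x: float,
) -> CtrEvidence:
    """Compute CTR from horizontal boundary coordinates (assumed input format)."""
    cardiac_width = heart_right_x - heart_left_x
    thoracic_width = thorax_right_x - thorax_left_x
    if cardiac_width <= 0 or thoracic_width <= 0:
        raise ValueError("right boundary must lie to the right of the left boundary")
    ctr = cardiac_width / thoracic_width
    return CtrEvidence(
        cardiac_width_px=cardiac_width,
        thoracic_width_px=thoracic_width,
        ctr=round(ctr, 2),
        threshold=CARDIOMEGALY_THRESHOLD,
        # Assumption: the criterion is applied as a strict "greater than".
        exceeds_threshold=ctr > CARDIOMEGALY_THRESHOLD,
    )


if __name__ == "__main__":
    evidence = compute_ctr(300, 720, 100, 1100)
    print(evidence.ctr, evidence.exceeds_threshold)  # 0.42 False
```

Because the function has no randomness and no learned parameters, calling it twice on the same boundaries returns identical evidence, which is the reproducibility property the text describes.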

3. Evidence-Grounded Response Generation

The LLM does not access the raw image at response time.

It must generate its answer solely from structured diagnostic evidence returned by tools.

This design enforces grounding by construction.

No evidence, no conclusion.
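The "no evidence, no conclusion" rule can be sketched as a response function that only ever sees a structured evidence record, never the image. In the actual system an LLM writes the response; the fixed template, the function name, and the evidence keys below are hypothetical placeholders used to show the constraint.

```python
from typing import Mapping, Optional

# Hypothetical evidence keys that a cardiomegaly answer depends on.
REQUIRED_FIELDS = ("ctr", "threshold")


def answer_cardiomegaly(evidence: Optional[Mapping[str, float]]) -> str:
    """Build an answer strictly from tool evidence; decline if any is missing."""
    if not evidence or any(field not in evidence for field in REQUIRED_FIELDS):
        return "No conclusion: the required diagnostic evidence is missing."
    ctr = evidence["ctr"]
    threshold = evidence["threshold"]
    # Assumption: the criterion is applied as a strict "greater than".
    if ctr > threshold:
        finding = "consistent with cardiomegaly"
    else:
        finding = "not consistent with cardiomegaly"
    return f"CTR = {ctr:.2f} against a threshold of {threshold:.2f}: {finding}."


if __name__ == "__main__":
    print(answer_cardiomegaly({"ctr": 0.42, "threshold": 0.50}))
    print(answer_cardiomegaly(None))
```

Every number in the output can be traced back to a field in the evidence record, so a reviewer can audit the answer without re-reading the image.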

CXReasonDial — Measuring Grounded Dialogue

To evaluate multi-turn reasoning, the authors introduce CXReasonDial, a benchmark with 1,946 dialogues across 12 diagnostic tasks.

Dialogue structures vary:

- Single-task

- Multi-task

- Global-to-task exploration

The dialogues also follow three questioning flows:

- Top-down (conclusion → evidence)

- Bottom-up (evidence → conclusion)

- Random

Dialogue Statistics

| Statistic | Value |
|---|---|
| Total Dialogues | 1,946 |
| Single-task | 1,200 |
| Multi-task | 660 |
| Global-to-task | 86 |
| Avg. Turns per Dialogue | 10.87 |

This matters because evidence-grounded reasoning must remain coherent across turns — especially when users challenge or request verification.

Findings — Faithfulness Beats Fluency

The results are revealing.

Turn-Level Performance (Dynamic User Setting)

| Model | Faithfulness ↑ | Hallucination ↓ | Strict Dialogue Success ↑ |
|---|---|---|---|
| CXReasonAgent (GPT-5 mini) | 99.8% | 0.2% | 85.8% |
| CXReasonAgent (Gemini-3-Flash) | 99.9% | 0.1% | 75.7% |
| LVLM (Gemini-3-Flash baseline) | 46.3% | 52.3% | 9.1% |
| LVLM (Pixtral-Large) | 48.2% | 50.3% | 7.7% |

Two observations stand out:

- LVLMs maintain high coverage but collapse on faithfulness.

- Even small backbones (e.g., Qwen 4B/8B) outperform all LVLM baselines when embedded in the tool-grounded agent framework.

The architectural choice matters more than model scale.

This is an uncomfortable truth for the “just scale it” camp.

Robustness Across Evaluation Settings

The study evaluates three settings:

- Without Ground Truth History — model errors propagate.

- With Ground Truth History — upper-bound scenario.

- Dynamic User Simulator — adaptive user queries.

CXReasonAgent maintains strong grounding even when interacting dynamically.

LVLMs, in contrast, improve dramatically when given corrected dialogue history — suggesting they opportunistically reuse prior text rather than consistently grounding in image evidence.

In real deployments, you don’t get a ground-truth safety net.
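The difference between the first two settings comes down to what gets written into the dialogue history. The sketch below is a toy illustration under assumed names (`run_dialogue`, `echo_model`, `use_gold_history`), not the paper's evaluation harness, and it does not cover the dynamic user simulator. The toy model simply repeats the last answer it sees in the history, an exaggerated version of the "reuse prior text" behavior described above.

```python
from typing import Callable, List, Sequence, Tuple

Turn = Tuple[str, str]  # (question, answer recorded in the history)
AnswerFn = Callable[[str, List[Turn]], str]


def run_dialogue(
    questions: Sequence[str],
    gold_answers: Sequence[str],
    answer_fn: AnswerFn,
    use_gold_history: bool,
) -> List[str]:
    """Answer each question in order, recording gold or predicted answers."""
    history: List[Turn] = []
    predictions: List[str] = []
    for question, gold in zip(questions, gold_answers):
        prediction = answer_fn(question, history)
        predictions.append(prediction)
        # With gold history, earlier mistakes are overwritten by correct answers.
        history.append((question, gold if use_gold_history else prediction))
    return predictions


def echo_model(question: str, history: List[Turn]) -> str:
    """Toy model: repeat the last recorded answer, or guess if there is none."""
    return history[-1][1] if history else "unsupported guess"


def accuracy(predictions: Sequence[str], gold_answers: Sequence[str]) -> float:
    correct = sum(p == g for p, g in zip(predictions, gold_answers))
    return correct / len(gold_answers)


if __name__ == "__main__":
    questions = ["What is the CTR?", "Can you confirm the CTR?", "And the CTR again?"]
    gold = ["CTR = 0.42"] * 3
    for use_gold in (False, True):
        preds = run_dialogue(questions, gold, echo_model, use_gold_history=use_gold)
        print(use_gold, preds, accuracy(preds, gold))
```

With its own history, the toy model's first wrong guess is repeated on every later turn. With gold history, it looks accurate from the second turn onward without ever consulting any evidence.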

Implications — Beyond Radiology

The broader message is strategic:

1. Agent Design > Model Size

Evidence-grounded architecture delivers larger gains than parameter scaling.

For enterprises, this means:

- Lower compute costs

- Modular task expansion

- Clear audit trails

2. Deterministic Tools Enable Governance

In regulated environments (healthcare, finance, compliance), deterministic intermediate steps are not optional.

They are infrastructure.

3. Multi-Turn Grounding Is the Real Test

Single-shot benchmarks overestimate capability.

If your AI cannot maintain evidence consistency across dialogue turns, it will eventually contradict itself — or worse, mislead confidently.

4. Scalable Extensibility

Adding new diagnostic tasks does not require retraining the backbone. Instead, new tools are integrated alongside the existing ones.

This is a software engineering advantage, not just a modeling trick.
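One way to picture this extensibility is a tool registry: a new diagnostic task becomes a new registered function, and nothing about the language model changes. The registry, the decorator, the tool names, and the trachea tolerance value below are all hypothetical and carry no clinical meaning; they only illustrate the pattern.

```python
from typing import Callable, Dict

ToolFn = Callable[[dict], dict]
TOOL_REGISTRY: Dict[str, ToolFn] = {}


def register_tool(task: str) -> Callable[[ToolFn], ToolFn]:
    """Decorator that registers a deterministic tool under a task name."""
    def decorator(fn: ToolFn) -> ToolFn:
        if task in TOOL_REGISTRY:
            raise ValueError(f"a tool is already registered for task '{task}'")
        TOOL_REGISTRY[task] = fn
        return fn
    return decorator


def run_tool(task: str, measurements: dict) -> dict:
    """Dispatch to the registered tool; fail loudly if the task is unknown."""
    if task not in TOOL_REGISTRY:
        raise KeyError(f"no tool registered for task '{task}'")
    return TOOL_REGISTRY[task](measurements)


@register_tool("cardiomegaly")
def ctr_tool(measurements: dict) -> dict:
    ctr = measurements["cardiac_width"] / measurements["thoracic_width"]
    return {"ctr": round(ctr, 2), "threshold": 0.50}


# Adding a task later is one more registered function, with no model retraining.
@register_tool("trachea_alignment")
def trachea_tool(measurements: dict) -> dict:
    tolerance_px = 10  # placeholder value, not a clinical criterion
    offset = measurements["trachea_x"] - measurements["midline_x"]
    return {"offset_px": offset, "midline_aligned": abs(offset) <= tolerance_px}


if __name__ == "__main__":
    print(run_tool("cardiomegaly", {"cardiac_width": 420, "thoracic_width": 1000}))
    print(run_tool("trachea_alignment", {"trachea_x": 512, "midline_x": 508}))
```

The planner only needs to know the task names in the registry, so the set of supported tasks can grow as ordinary software releases.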

A Conceptual Shift: From Generative to Accountable AI

CXReasonAgent embodies a subtle but critical shift:

Stop asking models to be radiologists. Ask them to orchestrate radiology tools.

The LLM becomes:

- Planner

- Interpreter

- Dialogue coordinator

Not the measurement engine.

This separation of concerns is what enables reliability.

In safety-critical systems, accountability scales better than eloquence.

Conclusion — The Future Is Tool-Grounded

CXReasonAgent demonstrates that trustworthy medical AI does not require larger vision-language models.

It requires:

- Deterministic evidence extraction

- Structured intermediate outputs

- Explicit visual grounding

- Multi-turn reasoning consistency

In other words, it requires architectural humility.

As AI systems move deeper into regulated domains, the winning designs will not be those that sound the smartest — but those that can show their work.

And in radiology, showing your work means drawing the line on the image.
