Parallel Minds, Shorter Time: ParaThinker’s Native Thought Width

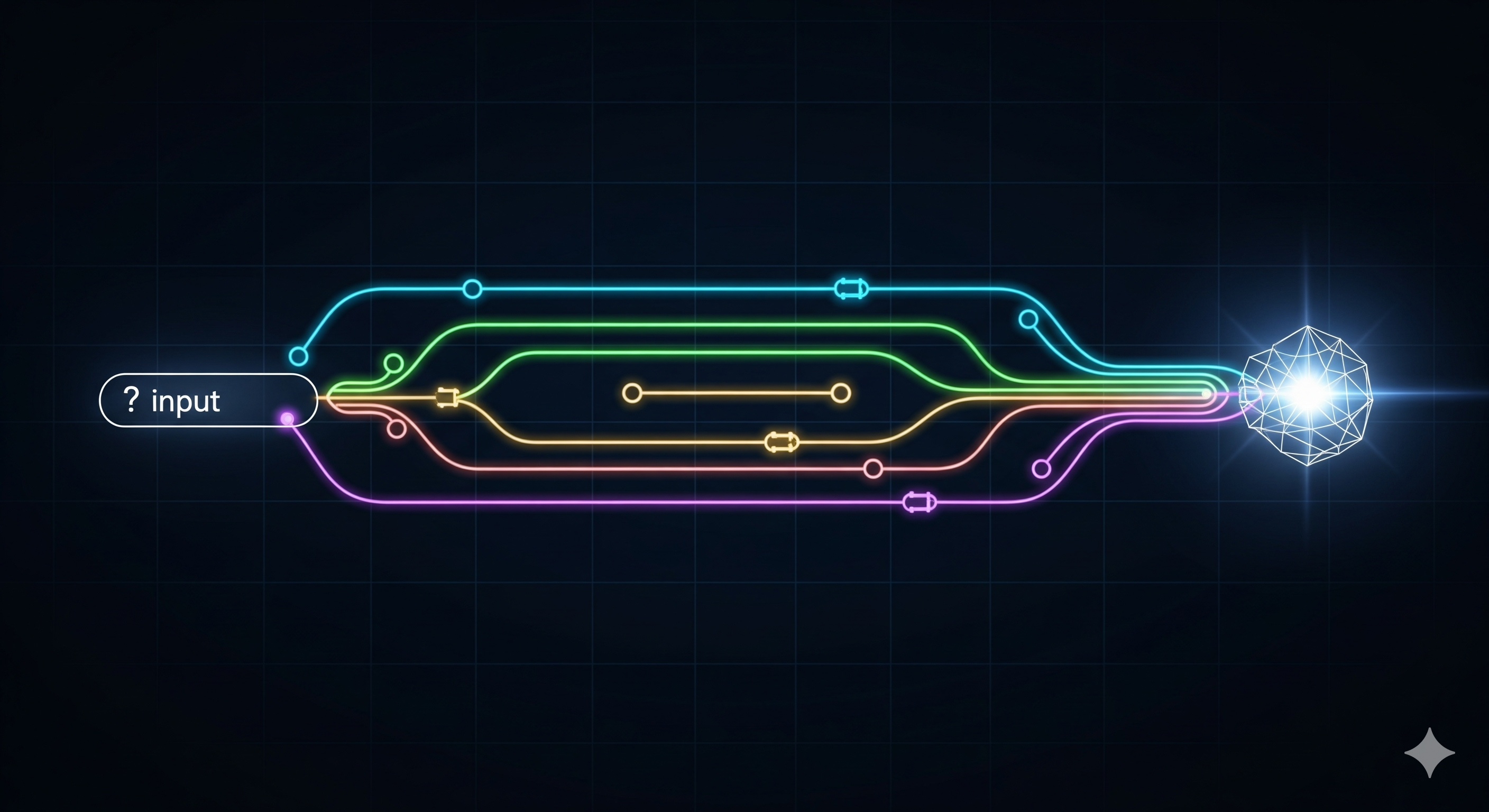

The pitch: We’ve stretched LLM “depth” by making models think longer. ParaThinker flips the axis, training models to think wider: spawn several independent lines of thought in parallel and then fuse them. The result is higher accuracy than single-path “long thinking” at roughly the same wall-clock time, and it scales.

TL;DR for operators

- What it is: An end-to-end framework that natively generates multiple reasoning paths with special control tokens, then summarizes using cached context.
- Why it matters: It tackles the test-time scaling bottleneck (aka Tunnel Vision) where early tokens lock a model into a suboptimal path.
- Business takeaway: You can trade a bit of GPU memory for more stable, higher-quality answers at nearly the same latency, especially on math/logic-heavy tasks and agentic workflows.

The problem: “Think longer” hits a wall

Sequential test-time scaling (à la o1 / R1-style longer CoT) delivers diminishing returns. After a point, more tokens don’t help; they reinforce early mistakes. ParaThinker names this failure mode Tunnel Vision: the first few tokens bias the entire trajectory. If depth traps us, width can free us.

...
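To make the "several paths, then fuse" flow from the TL;DR concrete, here is a toy sketch. It is not ParaThinker's implementation. The control-token strings, `toy_generate_path`, and `think_wide` are hypothetical names invented for illustration, the "model" is a stub, and the fusion step is a plain majority vote standing in for the learned summarization over cached context that the article describes.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor
from typing import List, Tuple

# Hypothetical control tokens; the article only says "special control tokens".
PATH_OPEN = "<think {i}>"
PATH_CLOSE = "</think {i}>"


def toy_generate_path(prompt: str, path_id: int) -> Tuple[str, str]:
    """Stub for one decoded reasoning path. Returns (trace, answer).

    Assumption for the toy: path 0 locks onto a bad early step
    (Tunnel Vision) and lands on a wrong answer; the others do not.
    """
    answer = "41" if path_id == 0 else "42"
    trace = (
        PATH_OPEN.format(i=path_id)
        + f" reasoning about {prompt!r}, answer {answer} "
        + PATH_CLOSE.format(i=path_id)
    )
    return trace, answer


def think_wide(prompt: str, width: int = 4) -> Tuple[List[str], str]:
    """Run `width` independent paths concurrently, then fuse their answers."""
    with ThreadPoolExecutor(max_workers=width) as pool:
        results = list(pool.map(lambda i: toy_generate_path(prompt, i), range(width)))
    traces = [trace for trace, _ in results]
    answers = [answer for _, answer in results]
    # Stand-in fusion: majority vote, NOT the learned summary step.
    fused = Counter(answers).most_common(1)[0][0]
    return traces, fused


if __name__ == "__main__":
    for width in (1, 4):
        _, fused = think_wide("6 * 7", width=width)
        print(f"width={width} fused answer={fused}")
```

With `width=1` the only path is the one stuck on its early mistake, so the wrong answer survives; with `width=4` the other paths outvote it. That is the intuition behind trading some memory for width, under the toy assumption that paths fail independently.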