When Models Remember Too Much: The Hidden Cost of Memorization

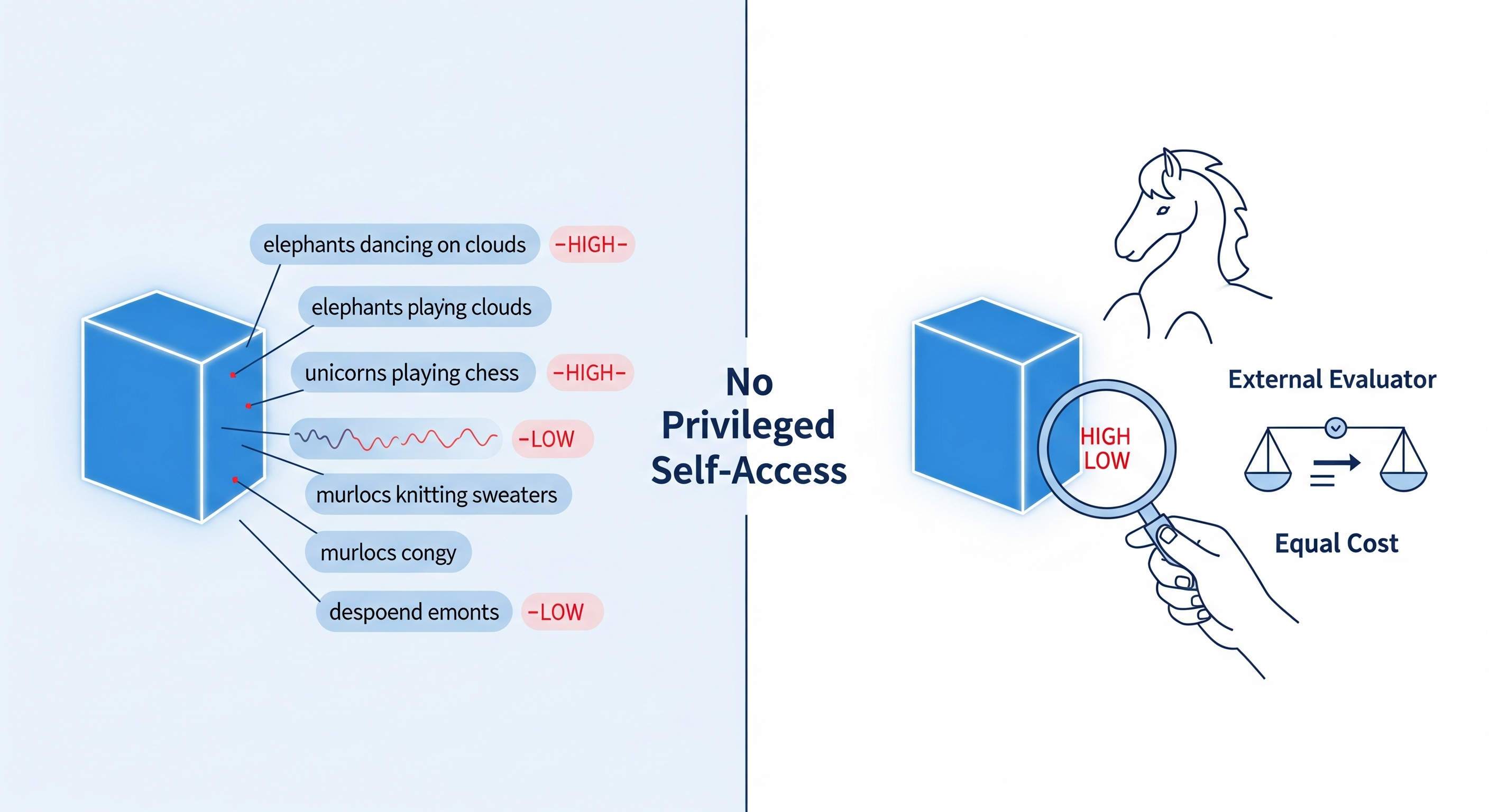

Opening: Why this matters now

The industry loves to talk about generalization. We celebrate models that extrapolate, reason, and improvise. But lurking beneath this narrative is a less glamorous behavior: memorization. Not the benign kind that helps a model recall arithmetic, but the silent absorption of training data, verbatim, brittle, and sometimes legally radioactive. The paper behind this article asks a pointed question the AI industry has mostly tiptoed around: where, exactly, does memorization happen inside large language models, and how can it be isolated from genuine learning? ...