When 100% Sensitivity Isn’t Safety: How LLMs Fail in Real Clinical Work

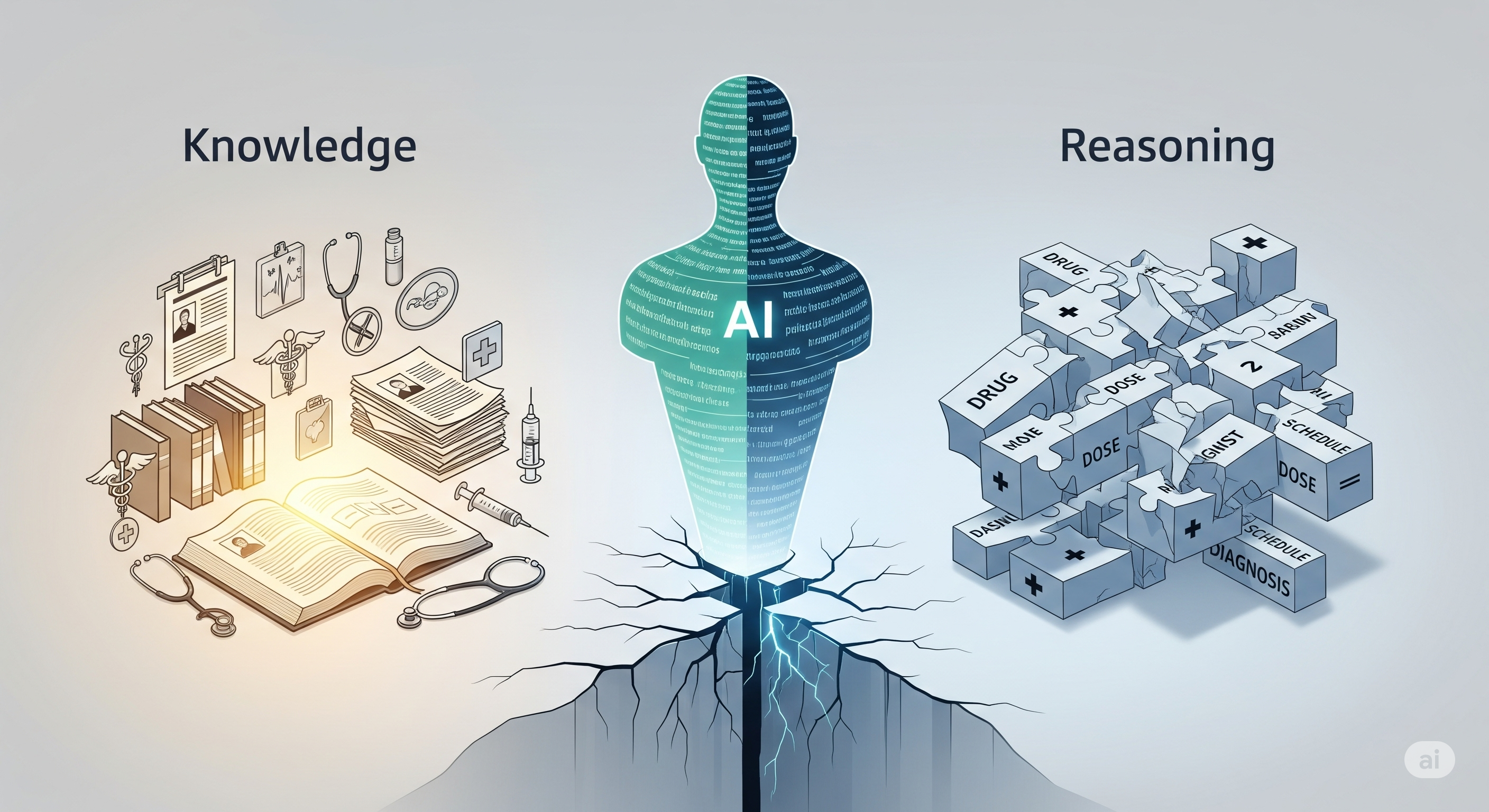

Opening: Why this matters now

Healthcare AI has entered its most dangerous phase: the era where models look good enough to trust. Clinician-level benchmark scores are routinely advertised, pilots are quietly expanding, and decision-support tools are inching closer to unsupervised use. Yet beneath the reassuring metrics lies an uncomfortable truth: high accuracy does not equal safe reasoning. ...