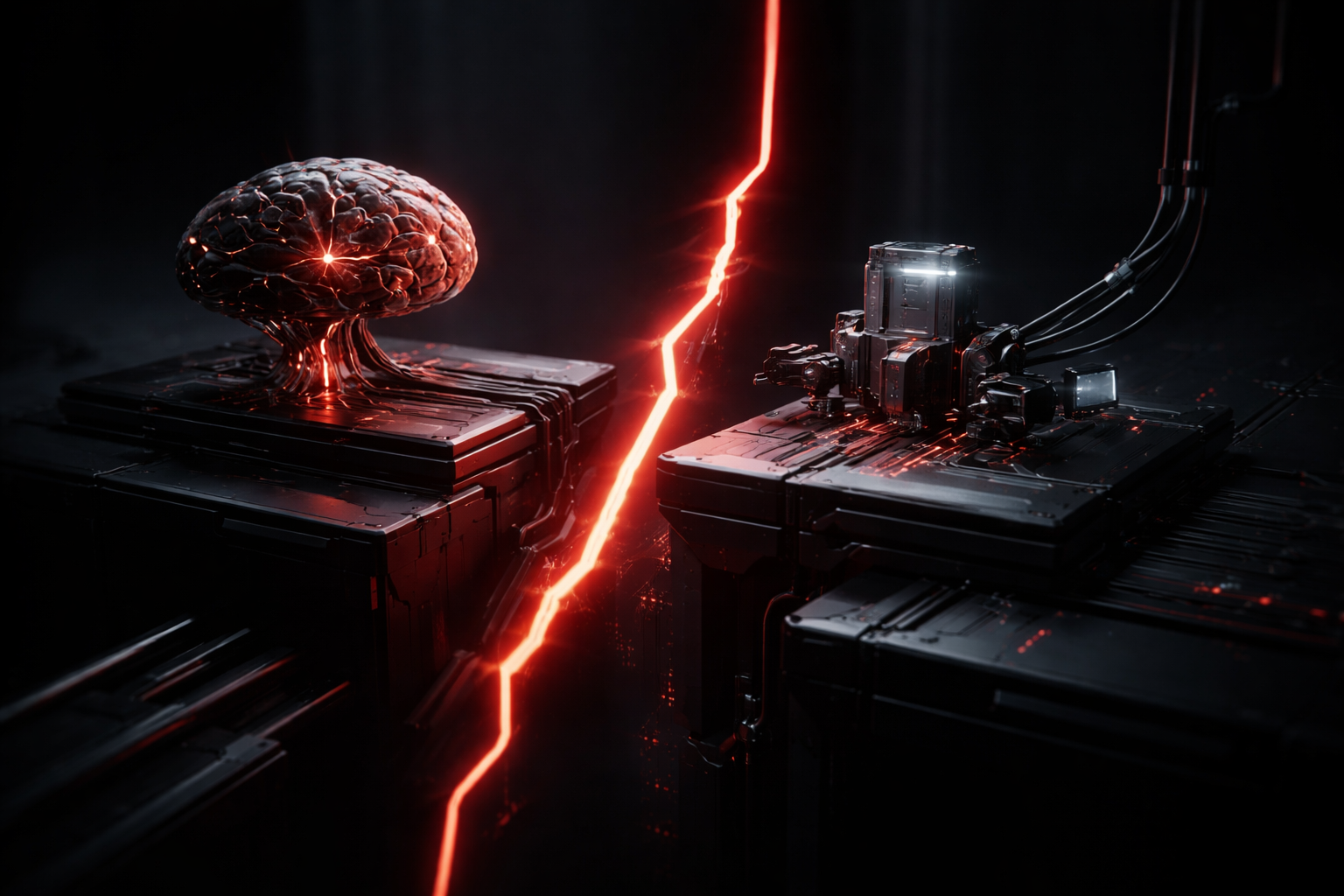

Mind the Cut: Where Your AI Strategy Quietly Breaks

Opening: Why this matters now

Most companies think they are building “AI agents.” In reality, they are assembling something far more fragile: a predictive engine duct-taped to a control system. This distinction sounds academic, until your agent fails in production for reasons no one can quite explain. The recent paper “The Cartesian Cut in Agentic AI” offers a deceptively simple lens: where does control actually live? ...