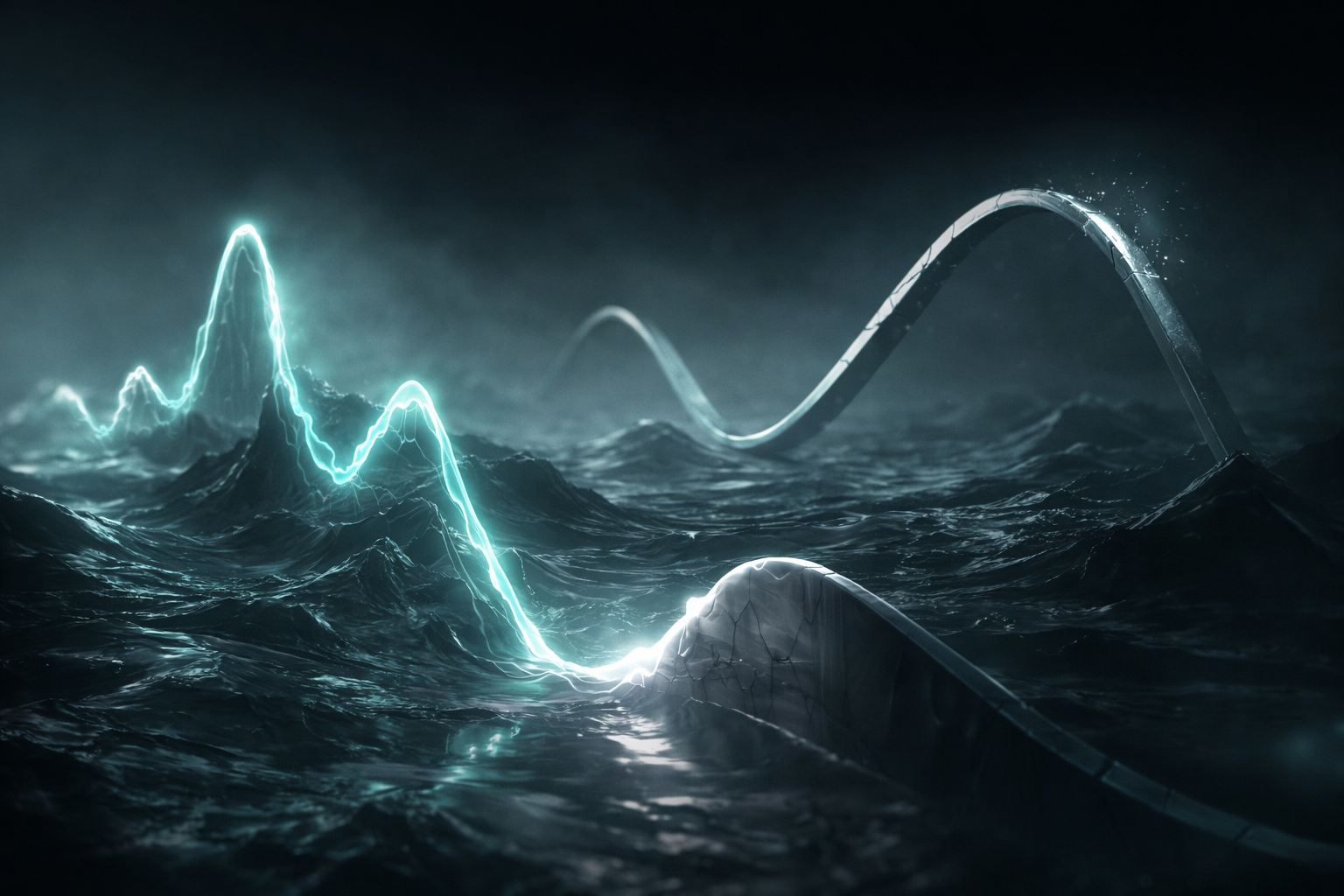

When Tokens Remember: Graphing the Ghosts in LLM Reasoning

Opening: Why this matters now

Large language models don’t think, but they do accumulate influence. And that accumulation is exactly where most explainability methods quietly give up. As LLMs move from single-shot text generators into multi-step reasoners, agents, and decision-making systems, we increasingly care why an answer emerged, not just which token attended to which prompt word. Yet most attribution tools still behave as if each generation step lives in isolation. That assumption is no longer just naïve; it is actively misleading. ...