Opening — Why this matters now

The AI industry has developed a charmingly expensive habit: when models struggle with long documents, we buy them larger windows and pretend the problem has been solved. It has not.

Long-context LLMs are useful, but longer context is not the same as better context. A model can accept a very large input and still miss the crucial paragraph buried in the middle, over-attend to duplicated evidence, or lose the argumentative spine of a document. The result is familiar to anyone building AI tools for legal review, finance research, policy analysis, procurement, consulting, compliance, or enterprise knowledge work: the model has “read” everything, yet somehow understands the wrong thing. Very modern. Very expensive.

The arXiv paper “From Similarity to Structure: Training-free LLM Context Compression with Hybrid Graph Priors” tackles this problem from a practical angle. Instead of training yet another compressor model, the authors propose a training-free, model-agnostic sentence selection framework. Its argument is simple but important: compression should not only keep sentences that are semantically similar to the query; it should preserve the structure of the document — topics, transitions, bridges, and local reasoning loops.

That distinction matters for business deployment. In real workflows, a compressed context is not a poetic summary. It is evidence passed into an AI system that may produce a memo, risk assessment, recommendation, or decision support output. Losing one bridge sentence can be enough to turn a careful analysis into confident nonsense. And confidence, as the enterprise AI market keeps demonstrating, is not a control mechanism.

Background — Context and prior art

Context compression exists because long-context LLMs have three persistent constraints.

First, long inputs are computationally costly. Transformer attention is not a charity; more tokens mean more compute, more latency, and usually more money. Second, longer context windows do not guarantee reliable use of evidence. Models can degrade when relevant information is placed in hard-to-attend regions of a long prompt. Third, enterprise documents are not flat bags of sentences. They have sections, transitions, definitions, qualifications, exceptions, and sometimes a regrettable number of appendices.

The paper positions itself against three broad families of prior work:

| Prior approach | What it tries to do | Practical limitation |
|---|---|---|
| Long-context architectures | Make the model accept more tokens | Larger capacity does not guarantee better evidence use |
| Learned compressors | Train a model to remove or transform redundant text | Adds training cost, domain adaptation burden, and opacity |
| Similarity-based extractive methods | Select sentences that look relevant or central | Often misses discourse bridges and cross-section reasoning |

Classic graph-based extractive summarization methods, such as TextRank-style sentence graphs, already showed that structure can guide selection. But many such methods rely mainly on sentence similarity: if two sentences are close in embedding space, connect them; then select central ones. That helps, but it is not enough for long documents.

A long report is not just a similarity map. It is also a sequence. A sentence may be important not because it is the most semantically representative, but because it connects one topic to another, introduces an exception, or closes a reasoning loop. Similarity alone sees “same topic.” Structure sees “this is where the argument turns.” The latter is usually where the money is.

Analysis or Implementation — What the paper does

The proposed method compresses a document by selecting a subset of sentences under a token budget. The selected sentences are then returned in their original order, making the compressed context readable and directly usable by downstream LLMs.

The pipeline has six main steps.

1. Split the document into sentences and embed them

The document is represented as a sentence sequence. Each sentence is encoded with a frozen embedding model, and embeddings are normalized for cosine similarity. The authors cap each sentence at 512 tokens during embedding computation to control cost and avoid pathological formatting cases.

In business language: every sentence becomes a node with a semantic fingerprint. No fine-tuning. No training stage. No “please wait six weeks while we build a domain compressor.”

2. Build a hybrid sentence graph

The key design choice is the graph. The method combines two edge types:

| Edge type | What it captures | Why it matters |
|---|---|---|
| Mutual $k$-nearest-neighbor semantic edges | Sentences that are mutually close in embedding space | Preserves topical relevance and semantic neighborhoods |
| Sequential edges | Sentences near each other in the original document | Preserves local discourse flow and narrative continuity |

The semantic graph uses mutual $k$-NN, meaning sentence $i$ connects to sentence $j$ only if each appears in the other’s nearest-neighbor list. This keeps the graph sparse and reduces noisy one-way links.

The sequential edges encode the old-fashioned idea that documents are written in order for a reason. Radical, I know.

The two signals are fused through a weighted edge score:

$$ \lambda_{ij} = \alpha \cdot w^{sem}_{ij} + \beta \cdot w^{seq}_{ij} $$

Here, $\alpha$ controls trust in semantic similarity, while $\beta$ controls trust in local document order. The paper’s experiments suggest that the best setting is neither semantic-only nor sequence-only, but a hybrid that pairs a strong sequential component with a semantic backbone.
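To make the fusion concrete, here is a minimal sketch of a hybrid graph built from mutual $k$-NN and sequential edges. It is an illustration, not the authors' code: the function names, the toy embeddings, the `window` parameter, the binary form of the sequential weight, and the default values of `k`, `alpha`, and `beta` are all assumptions chosen for readability.

```python
import math

def cosine(u, v):
    """Cosine similarity of two vectors given as plain lists of floats."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

def hybrid_graph(embeddings, k=2, window=1, alpha=0.5, beta=0.5):
    """Return {(i, j): weight} for i < j, fusing mutual k-NN and sequential edges."""
    n = len(embeddings)
    sim = [[cosine(embeddings[i], embeddings[j]) for j in range(n)] for i in range(n)]
    # Each sentence's k nearest neighbours by cosine similarity, excluding itself.
    knn = []
    for i in range(n):
        others = sorted((j for j in range(n) if j != i),
                        key=lambda j: sim[i][j], reverse=True)
        knn.append(set(others[:k]))
    edges = {}
    for i in range(n):
        for j in range(i + 1, n):
            # Semantic weight counts only if the neighbour relation is mutual.
            w_sem = sim[i][j] if (j in knn[i] and i in knn[j]) else 0.0
            # Sequential weight (assumed form): 1 if within `window` positions.
            w_seq = 1.0 if j - i <= window else 0.0
            weight = alpha * w_sem + beta * w_seq
            if weight > 0:
                edges[(i, j)] = weight
    return edges

# Toy document: sentences 0 and 1 share a topic, as do sentences 2 and 3.
toy = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
graph = hybrid_graph(toy, k=1, window=1)
```

In this toy case the edge between sentences 1 and 2 exists only because of document order: the two are not semantic neighbours, yet they sit at the point where the topic changes. A similarity-only graph would leave the two topics disconnected.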

3. Extract a topic skeleton through clustering

The method clusters sentence embeddings using MiniBatch K-Means. The number of clusters follows a square-root heuristic:

$$ K = \text{round}(\sqrt{N}) $$

where $N$ is the number of sentences.

Each cluster acts as a coarse topic region. A sentence receives a representativeness score based on how close it is to the centroid of its topic cluster. This helps prevent the compressed context from over-selecting scattered but individually relevant sentences while neglecting balanced topic coverage.
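The two ingredients of this step, the cluster count and the centroid-based score, are simple enough to sketch directly. The snippet below assumes cluster membership is already known (the MiniBatch K-Means step itself is not shown), and the helper names, the toy vectors, and the floor of one cluster are illustrative additions rather than details from the paper.

```python
import math

def num_clusters(n_sentences):
    """Square-root heuristic K = round(sqrt(N)); the floor of 1 is an added guard."""
    return max(1, round(math.sqrt(n_sentences)))

def centroid(vectors):
    """Component-wise mean of a non-empty list of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]

def representativeness(vector, center):
    """Cosine similarity between a sentence embedding and its cluster centroid."""
    dot = sum(a * b for a, b in zip(vector, center))
    norm = (math.sqrt(sum(a * a for a in vector))
            * math.sqrt(sum(b * b for b in center)))
    return dot / norm if norm else 0.0

# Hypothetical cluster of three 2-d sentence embeddings.
cluster = [[1.0, 0.0], [0.8, 0.2], [0.0, 1.0]]
center = centroid(cluster)
rep_scores = [representativeness(v, center) for v in cluster]
```

A 400-sentence report would get 20 topic clusters under this heuristic. In the toy cluster, the middle sentence scores highest because it lies closest to the centroid, which is the behaviour the representativeness term is meant to reward.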

That is important in enterprise documents. A compliance memo, annual report, legal filing, or technical specification may have several necessary sections. A compressor that selects only the loudest sentences is not efficient. It is reckless with a smaller bill.

4. Score sentences using relevance and structural priors

The scoring function combines four signals:

| Signal | Technical meaning | What it protects in practice |
|---|---|---|
| Task relevance | Similarity to the query, or to the document centroid if no query exists | Keeps content aligned with the user’s purpose |
| Topic representativeness | Similarity to cluster centroid | Maintains topic coverage |
| Bridge centrality | Betweenness centrality in the hybrid graph | Preserves transition sentences and cross-section links |
| Cycle coverage | Whether the sentence participates in local graph cycles | Protects local reasoning loops and mutual reinforcement |
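Of the four signals, bridge centrality is probably the least self-explanatory. As a rough sketch, the snippet below computes betweenness centrality with the standard Brandes algorithm on an unweighted, undirected graph. Treating the hybrid graph as unweighted is a simplifying assumption made here, as are the function name and the toy graph; the paper's exact weighting and normalization are not reproduced, and cycle coverage is not shown.

```python
from collections import deque

def betweenness(n, edges):
    """Unnormalised betweenness centrality (Brandes) for an undirected,
    unweighted graph with nodes 0..n-1 and an iterable of (i, j) edges."""
    adj = [[] for _ in range(n)]
    for i, j in edges:
        adj[i].append(j)
        adj[j].append(i)
    score = [0.0] * n
    for s in range(n):
        # Breadth-first search from s, counting shortest paths in sigma.
        order = []
        preds = [[] for _ in range(n)]
        sigma = [0] * n
        dist = [-1] * n
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate dependencies, starting from the nodes farthest from s.
        delta = [0.0] * n
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                score[w] += delta[w]
    # Every unordered pair was counted once from each endpoint.
    return [x / 2 for x in score]

# Two tight topic groups (0-1-2 and 3-4-5) joined by a single link 2-3.
toy_edges = [(0, 1), (0, 2), (1, 2), (2, 3), (3, 4), (3, 5), (4, 5)]
bridge_scores = betweenness(6, toy_edges)
```

Sentences 2 and 3 carry every shortest path between the two groups, so they receive all of the centrality while the other four nodes receive none. Those are exactly the sentences a similarity-only filter has no particular reason to keep.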

The composite score is:

$$ S(i) = \lambda_{task}S_{task}(i) + \lambda_{rep}S_{rep}(i) + \lambda_{bridge}S_{bridge}(i) + \lambda_{cycle}S_{cycle}(i) $$

The default weights are:

$$ (\lambda_{task}, \lambda_{rep}, \lambda_{bridge}, \lambda_{cycle}) = (0.45, 0.30, 0.20, 0.05) $$

This weighting is sensible. Relevance is the largest single term, but structure is not treated as decorative garnish. Representativeness and bridge centrality together carry a weight of 0.50, slightly more than the 0.45 given to task relevance, which is enough to prevent a pure query-similarity filter from amputating the document’s connective tissue.
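A small worked example shows how the default weights play out. The weights come from the formula above; the two sentences and their signal values are invented for illustration, and the sketch assumes each signal has already been scaled to the range 0 to 1.

```python
# Default weights from the composite score above.
WEIGHTS = {"task": 0.45, "rep": 0.30, "bridge": 0.20, "cycle": 0.05}

def composite_score(signals, weights=WEIGHTS):
    """Weighted sum of the four per-sentence signals."""
    return sum(weights[name] * signals[name] for name in weights)

# Hypothetical sentence that matches the query closely but connects nothing.
loud = {"task": 0.9, "rep": 0.2, "bridge": 0.0, "cycle": 0.0}
# Hypothetical transition sentence: moderately relevant, structurally central.
connector = {"task": 0.5, "rep": 0.6, "bridge": 0.9, "cycle": 1.0}

loud_score = composite_score(loud)            # 0.405 + 0.06
connector_score = composite_score(connector)  # 0.225 + 0.18 + 0.18 + 0.05
```

Under these made-up values the connector scores about 0.635 against 0.465 for the query-matching sentence, so it is ranked first even though its task relevance is much lower.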

5. Select sentences under a token budget with redundancy suppression

The method greedily selects high-scoring sentences while respecting a token budget. By default, the paper uses a compression ratio of $\rho = 0.30$, meaning the compressed context is about 30% of the original token count.

It also applies non-maximum suppression: if a candidate sentence is too similar to one already selected, it is discarded. The default cosine similarity threshold is $\tau = 0.92$.

This part is less glamorous, but operationally important. Many compressors waste budget by selecting near-duplicates. In a constrained context window, redundancy is not harmless; it crowds out evidence.

6. Return selected sentences in original order

The final selected sentences are sorted by their original position in the document. This preserves readability and reduces the “evidence confetti” problem — where individually relevant snippets are thrown together in an order no human would voluntarily read.

For enterprise AI systems, this matters because compressed context is often inspected during debugging, audit, or human review. If a compressed input cannot be audited, it is not a control layer. It is just another black box wearing a smaller hat.

Findings — Results with visualization

The authors evaluate the method on four summarization benchmarks: CNN/DailyMail, GovReport, arXiv, and PubMed. These cover short-to-medium news documents and long-form government, scientific, and biomedical texts.

The baselines include classic extractive methods, supervised extractors, abstractive models, long-context architectures, and recent LLM-oriented summarization or compression approaches.

Performance pattern

The paper’s most important empirical pattern is not that the method wins everywhere by theatrical margins. It is more useful than that: the method is especially strong on long-document benchmarks, where structure matters more.

| Dataset | Document type | Main reported result | Business reading |
|---|---|---|---|
| CNN/DailyMail | News articles | Competitive ROUGE; best BERTScore and QAFactEval among compared methods | On short/news-style text, positional and rank-fusion baselines remain hard to beat, but structure helps faithfulness |
| GovReport | Long government reports | Best results across reported metrics | Structural compression helps when evidence is distributed across sections |
| arXiv | Scientific papers | Best results across reported metrics | Topic coverage and bridge preservation matter in technical argument chains |
| PubMed | Biomedical papers | Best ROUGE-1, ROUGE-L, and QAFactEval | Strong fit for factual, long-form, high-precision domains |

A simplified view of how the compared method families trade off:

| Method family | Strength | Weakness exposed by the paper |
|---|---|---|
| Similarity-only extraction | Simple and transparent | Can miss connective or transitional evidence |
| Learned extractive models | Strong task performance | Require training and may be less plug-and-play |
| Abstractive models | Fluent summaries | Can introduce faithfulness and auditability risks |
| Long-context models | Accept larger inputs | Still expensive and not always reliable at using long context |
| Hybrid graph priors | Transparent structure-aware selection | Still depends on embedding quality and heuristic choices |

Ablation: the method is not one trick in a trench coat

The ablation studies remove individual components: sequential edges, topic representativeness, bridge centrality, cycle coverage, and redundancy suppression.

The pattern is clear: all components contribute, but the most important effects appear on long-document datasets.

| Removed component | What drops conceptually | Interpretation |
|---|---|---|
| Sequential edges | Local order and discourse flow | Semantic similarity alone misses how arguments unfold |
| Topic representativeness | Balanced coverage | The compressor becomes more vulnerable to over-focusing on narrow relevance |
| Bridge centrality | Cross-topic connectors | Transition sentences and evidence links are easier to lose |
| Cycle coverage | Local closed-loop reasoning | Smaller but consistent effect; useful for preserving local coherence |
| Redundancy suppression | Budget efficiency | Near-duplicates consume tokens without adding much evidence |

The strongest lesson: the gains are not coming from a mysterious neural component. They come from respecting the fact that documents have shape.

Fusion weight: neither pure similarity nor pure sequence wins

The paper also studies the balance between semantic similarity and sequential structure. The strongest performance appears at an intermediate hybrid setting, rather than at either extreme.

| Graph design | What happens | Practical implication |
|---|---|---|
| Semantic-only | Better topical matching, weaker discourse continuity | Useful but brittle for long structured documents |
| Sequence-only | Preserves local order, weaker global relevance | Too close to smart truncation |
| Hybrid graph | Best balance in reported experiments | Enterprise compressors should model both meaning and document flow |

This is the paper’s central business lesson: retrieval-style similarity is necessary, but not sufficient. If your AI workflow only asks “which chunks are closest to the query?”, it may retrieve the right ingredients and still lose the recipe.

Implications — Next steps and significance

The paper is technically about context compression, but the broader implications are larger. It points toward a more mature design philosophy for LLM systems: structure-aware preprocessing.

Many enterprise AI tools still treat documents as piles of chunks. They split PDFs into segments, embed them, retrieve the nearest matches, and pass them to an LLM. That pipeline is easy to build and easy to demo. It is also easy to break when documents are long, layered, or argumentative.

A structure-aware compressor can become an intermediate control layer between raw retrieval and generation.

| Enterprise use case | Why structure-aware compression matters |
|---|---|
| Legal case preparation | Preserves definitions, exceptions, evidence links, and issue transitions |
| Financial research | Keeps assumptions, risk factors, and cross-section dependencies together |
| Compliance review | Maintains policy hierarchy and exception logic |
| Scientific literature analysis | Protects method-result-claim chains across distant sections |
| Internal knowledge assistants | Reduces irrelevant context while keeping operational logic intact |
| Board and management reporting | Compresses long documents without flattening their reasoning structure |

The method is also attractive because it is training-free. That lowers adoption friction. A company does not need to collect labeled compression data, fine-tune a model, or maintain a specialized compressor for every department. It can insert a graph-based preprocessor into existing RAG or document-analysis workflows.

But there are caveats.

First, the method depends on embedding quality. If embeddings fail to capture domain-specific meaning, the graph inherits that weakness. This matters in legal, medical, financial, and engineering contexts where a seemingly small phrase can change everything.

Second, the method selects sentences, not rewritten summaries. That is good for auditability but may be less compact than abstractive compression. In workflows where every token is expensive, sentence-level extraction may still leave savings on the table.

Third, graph priors are interpretable, but they are still heuristics. The default weights performed robustly in the paper, yet enterprise deployments should evaluate them against domain-specific tasks. A procurement contract and a clinical trial report do not fail in exactly the same way. Sadly, reality continues to resist standardization.

Fourth, structural preservation is not the same as factual correctness in the final LLM output. Compression can improve the input; it cannot absolve the downstream model from hallucination, weak reasoning, or bad instruction design. This method should be part of an assurance pipeline, not mistaken for one.

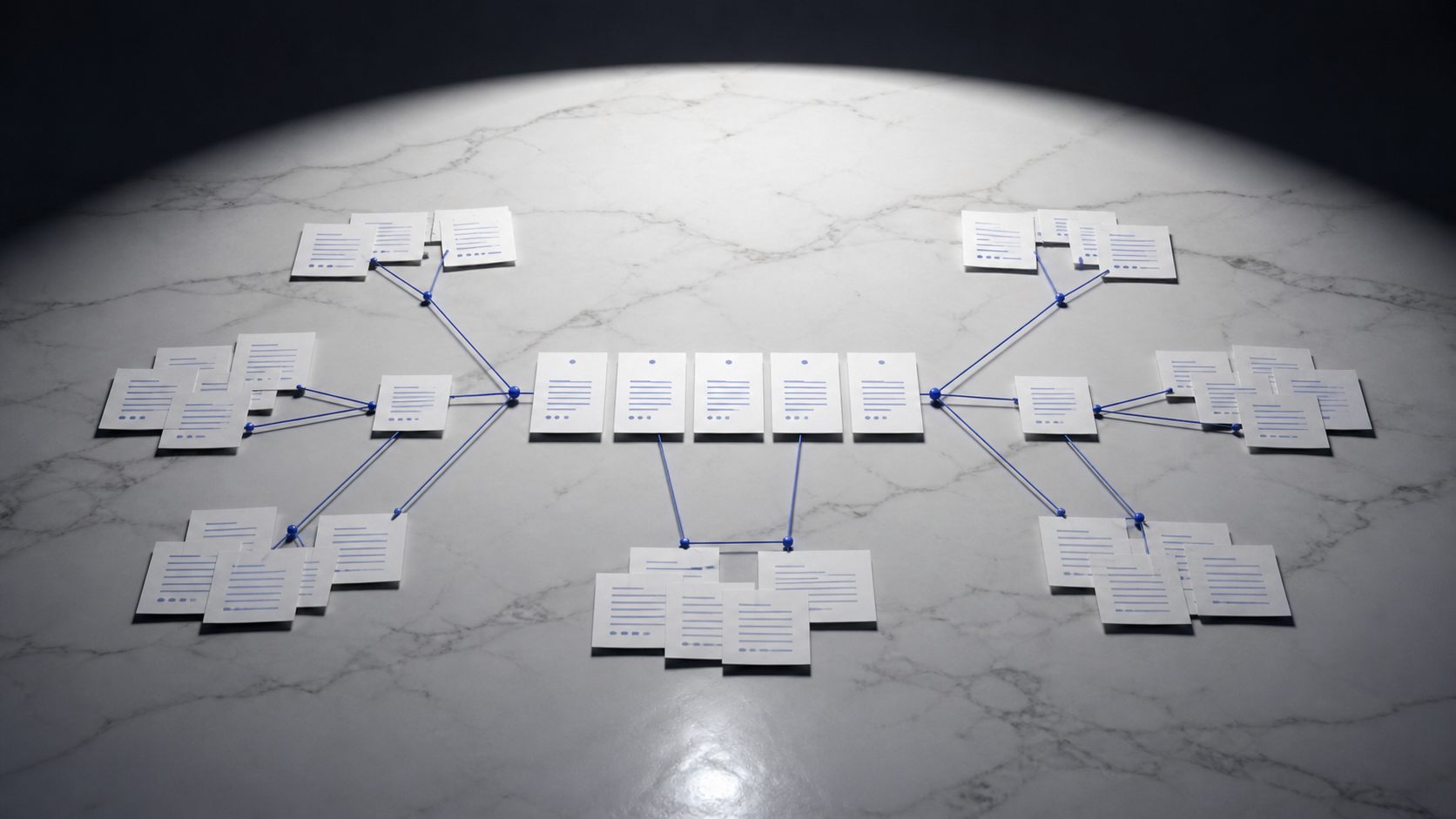

A practical deployment architecture might look like this:

| Layer | Role | Key control question |
|---|---|---|
| Document ingestion | Parse and clean source documents | Did we preserve section structure and metadata? |
| Sentence graph compression | Select relevant, representative, and structurally important sentences | Did we keep bridges, exceptions, and topic coverage? |
| LLM reasoning layer | Generate answer, memo, or decision support output | Does the output cite or rely on preserved evidence? |
| Human review layer | Validate high-risk conclusions | Are assumptions and missing evidence visible? |
| Monitoring layer | Track failures by domain and document type | Which structures are consistently lost? |

This is where the paper becomes useful for operators. It gives a concrete way to reduce context length without reducing the document into a bag of semantically similar snippets. For AI systems that support real work, that is not academic elegance. It is cost control, latency control, and failure control.

Conclusion — Wrap-up and tagline

The paper’s contribution is not that it invents graph-based summarization from nowhere. It does something more operationally relevant: it reframes context compression as a structural preservation problem.

That is the right framing. Enterprise AI does not merely need shorter prompts. It needs prompts that retain the right evidence, the right transitions, and the right shape of reasoning. The difference between similarity and structure is the difference between finding related sentences and preserving an argument.

The proposed hybrid graph approach is not a silver bullet. It will still depend on embeddings, sentence segmentation, domain evaluation, and careful integration into downstream workflows. But it is a useful antidote to a lazy assumption spreading through AI product design: that bigger context windows will save us from having to understand documents.

They will not. Bigger windows are storage. Structure is intelligence.

Cognaptus: Automate the Present, Incubate the Future.