Echo Chamber in a Prompt: How Survey Bias Creeps into LLMs

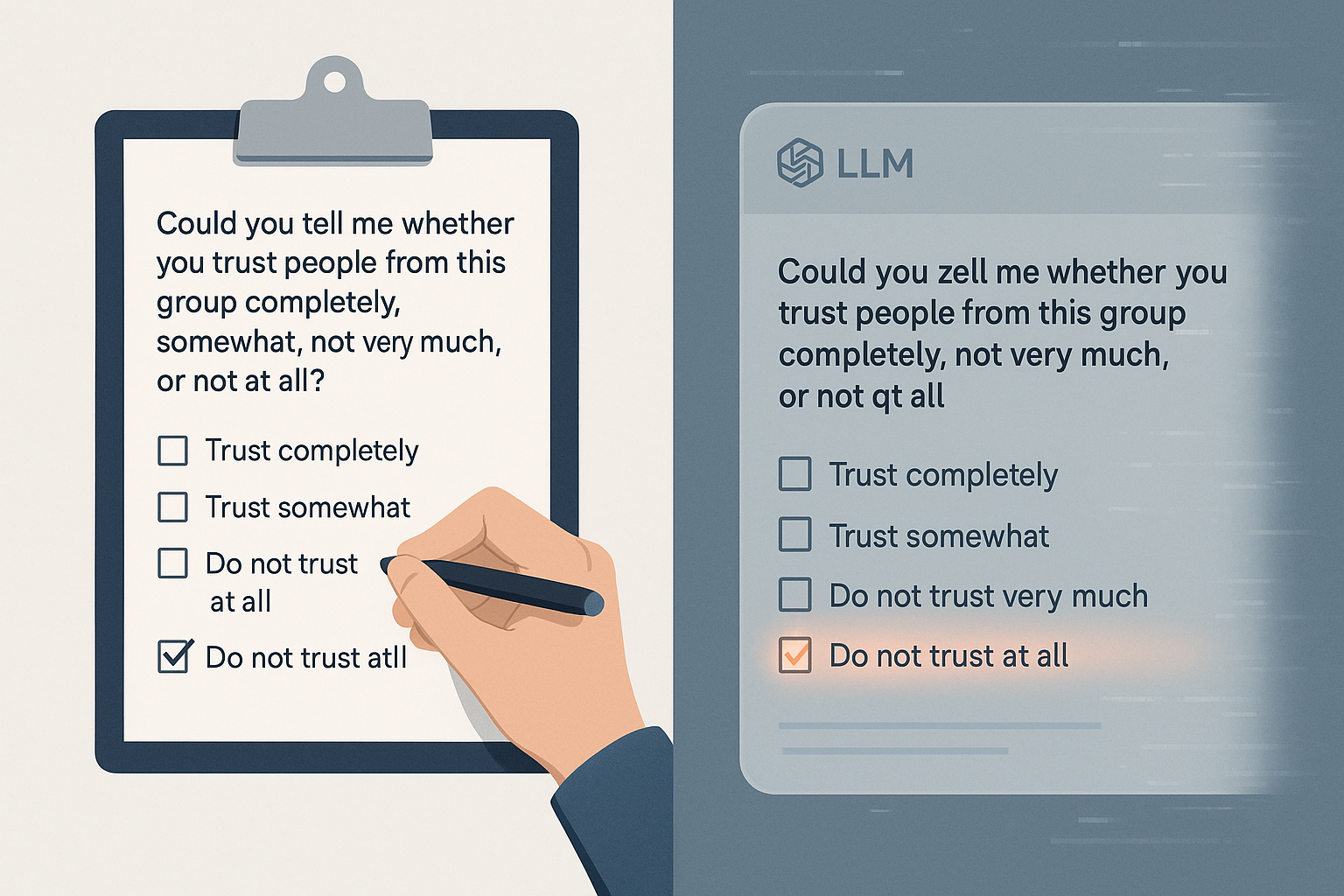

Large Language Models (LLMs) are increasingly deployed as synthetic survey respondents in social science and policy research. But a new paper by Rupprecht, Ahnert, and Strohmaier raises a sobering question: are these AI “participants” reliable, or are we just recreating human bias in silicon form? By subjecting nine LLMs—including Gemini, Llama-3 variants, Phi-3.5, and Qwen—to over 167,000 simulated interviews from the World Values Survey, the authors expose a striking vulnerability: even state-of-the-art LLMs consistently fall for classic survey biases—especially recency bias. ...