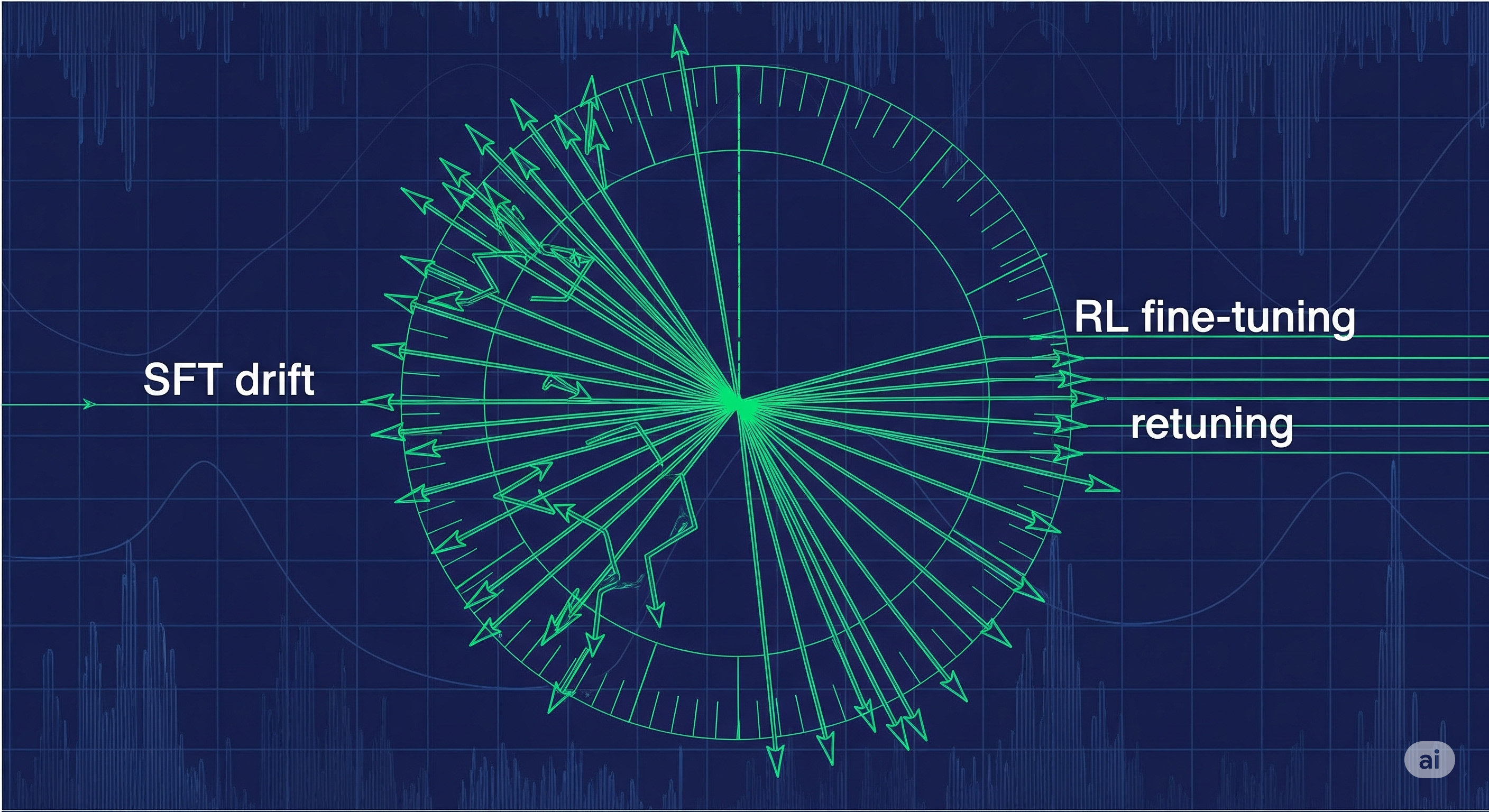

Spin Doctors: Why RL Fine‑Tuning Mostly Rotates, Not Reinvents

The short of it

Reinforcement‑learning fine‑tuning (RL‑FT) often looks like magic: you run supervised fine‑tuning (SFT) on a model until it aces your dataset, panic when it forgets math or coding edge cases, then run PPO and, voilà, generalization returns. A new paper argues the mechanism isn’t mystical at all: RL‑FT mostly rotates a model’s learned directions back toward broadly useful features rather than unlocking novel capabilities. In practical terms, cheap surgical resets of shallow layers or top‑rank components can recover much of that out‑of‑distribution (OOD) skill without running an expensive RL pipeline. ...
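To make the "surgical reset" idea concrete, here is a minimal sketch of the shallow‑layer variant. It rests on assumptions not spelled out above: that a reset means copying weights back from an earlier reference checkpoint, that parameters are named like `layers.<index>.<name>`, and that weights can be shown as plain lists of floats. The names `reset_shallow_layers`, `layer_index`, and the toy checkpoints are hypothetical, not the paper's code.

```python
import re
from typing import Dict, List

# Toy stand-in for a checkpoint: parameter name -> flat list of weights.
Weights = Dict[str, List[float]]


def layer_index(name: str) -> int:
    """Return the block index from a name like 'layers.3.w', or -1 if none."""
    match = re.search(r"layers\.(\d+)\.", name)
    return int(match.group(1)) if match else -1


def reset_shallow_layers(tuned: Weights, reference: Weights, num_shallow: int) -> Weights:
    """Return a copy of `tuned` whose first `num_shallow` blocks come from `reference`.

    Assumption: "reset" means restoring reference (pre-fine-tuning) weights.
    """
    restored: Weights = {}
    for name, values in tuned.items():
        idx = layer_index(name)
        if 0 <= idx < num_shallow:
            # Shallow block: take the reference weights.
            restored[name] = list(reference[name])
        else:
            # Deeper blocks and non-layer parameters keep their tuned weights.
            restored[name] = list(values)
    return restored


# Hypothetical two-block model plus an output head.
reference = {"layers.0.w": [1.0, 0.0], "layers.1.w": [0.0, 1.0], "head.w": [0.5, 0.5]}
tuned = {"layers.0.w": [0.0, 1.0], "layers.1.w": [0.6, 0.8], "head.w": [0.9, 0.1]}

restored = reset_shallow_layers(tuned, reference, num_shallow=1)
```

In this toy case only `layers.0.w` is rolled back; everything else keeps its fine‑tuned values, which is what makes the intervention cheap compared with a full RL run. With a real framework the same loop would run over the model's parameter dictionary rather than Python lists.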