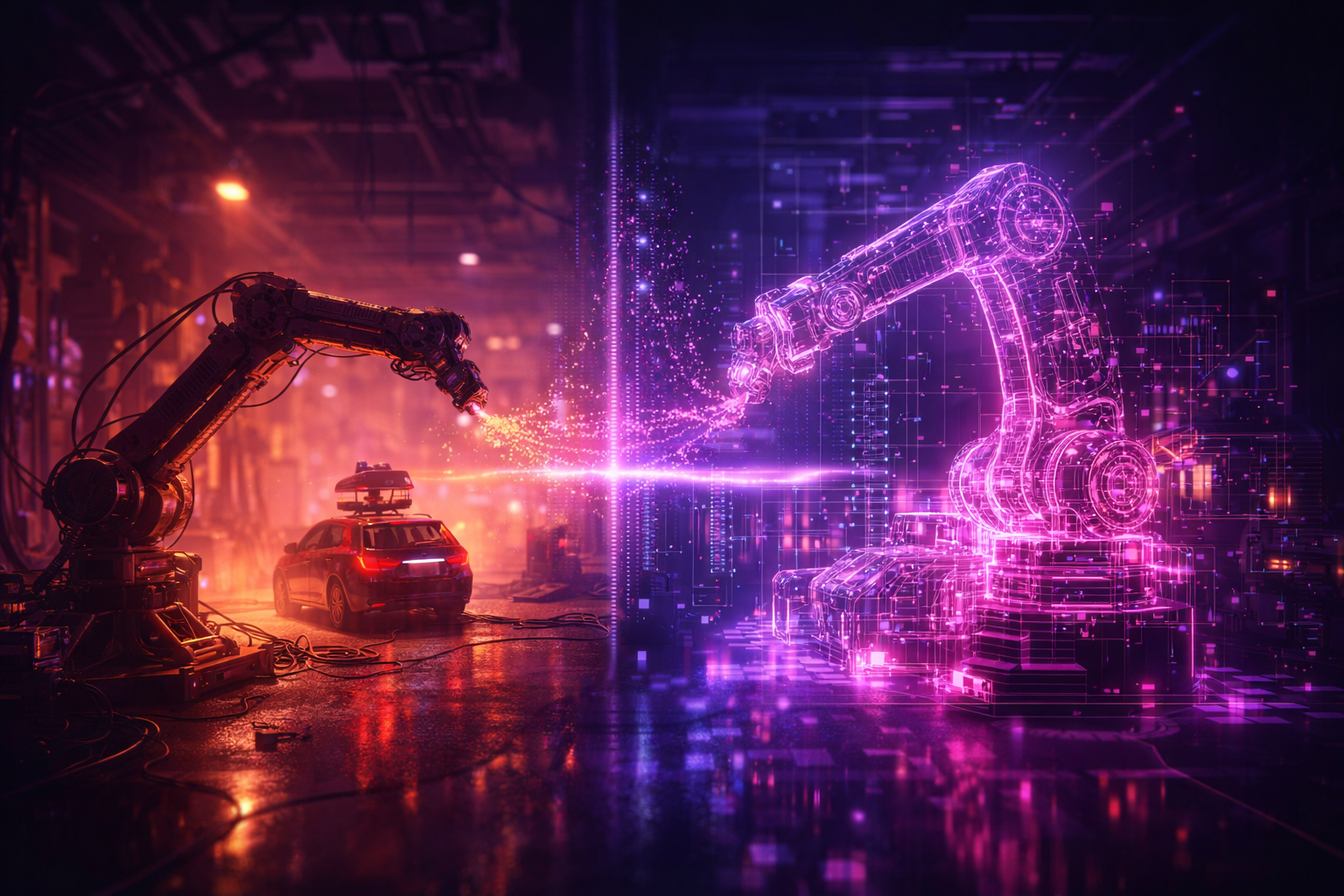

Small Models, Big Skills: When Agent Frameworks Meet Industrial Reality

Opening: Why this matters now

In the age of API-driven AI, it is easy to assume that intelligence is rented by the token. Call a proprietary model, route a few tools, and let the “agent” handle the rest.

Until compliance says no.

In regulated industries (finance, insurance, defense), data cannot casually traverse external APIs. Budgets cannot absorb unpredictable GPU-hours. And latency cannot spike because a model decided to “think harder.” ...