AI Evaluation, Monitoring, and Incident Response for Production Systems

How to evaluate, monitor, and respond to failures in production AI systems so quality, safety, and governance remain active after launch.

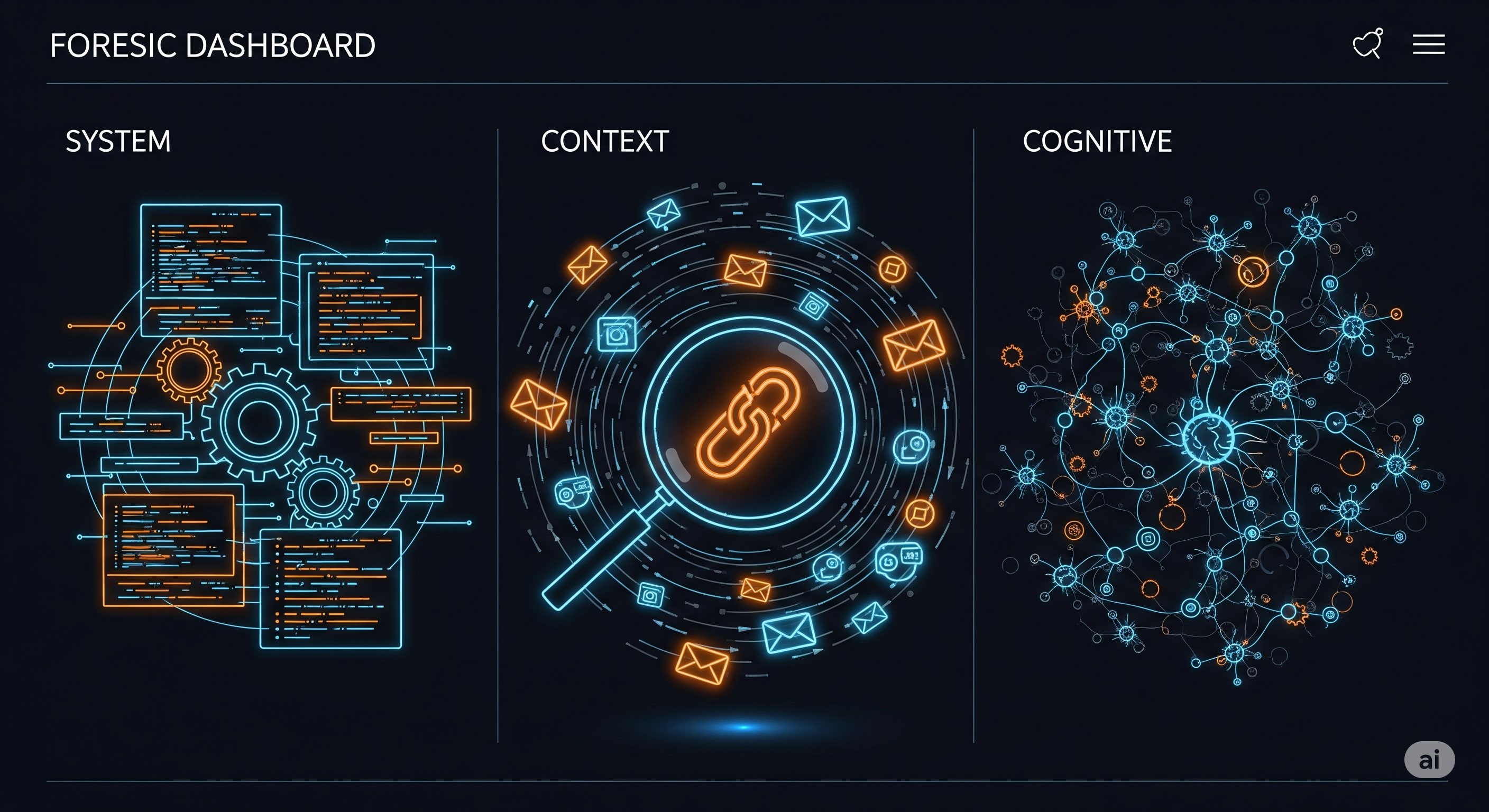

TL;DR As AI agents spread into real workflows, incidents are inevitable, from prompt-injected data leaks to misfired tool actions. A recent framework by Ezell, Roberts‑Gaal, and Chan offers a clean way to reason about why failures happen and what evidence you need to establish the cause. The trick is to stop treating incidents as one-off mysteries and start running a disciplined, forensic pipeline: capture the right artifacts, map causes across system, context, and cognition, then ship targeted fixes. ...
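To make the three pipeline steps concrete, here is a minimal sketch of an incident record in Python. It is an illustration under stated assumptions, not the framework's own schema: the class names, field names, and the rule that a cause must cite already-captured artifacts are all hypothetical choices made for this example. Only the three cause categories (system, context, cognition) come from the text.

```python
from dataclasses import dataclass, field
from enum import Enum


class CauseCategory(Enum):
    # The three categories named in the text; no finer taxonomy is assumed.
    SYSTEM = "system"
    CONTEXT = "context"
    COGNITION = "cognition"


@dataclass
class Incident:
    """Hypothetical incident record: artifacts first, then causes, then fixes."""
    incident_id: str
    summary: str
    artifacts: dict[str, str] = field(default_factory=dict)  # artifact name -> location
    causes: dict[CauseCategory, list[str]] = field(default_factory=dict)
    fixes: list[str] = field(default_factory=list)

    def capture(self, name: str, location: str) -> None:
        """Step 1: record where a piece of evidence is stored."""
        self.artifacts[name] = location

    def attribute(self, category: CauseCategory, finding: str, evidence: list[str]) -> None:
        """Step 2: map a finding to a category, but only if its evidence was captured."""
        missing = [name for name in evidence if name not in self.artifacts]
        if missing:
            raise ValueError(f"no captured artifact for: {missing}")
        self.causes.setdefault(category, []).append(finding)

    def unexamined(self) -> list[CauseCategory]:
        """Categories with no finding yet, so the review does not stop at the first cause."""
        return [c for c in CauseCategory if c not in self.causes]

    def add_fix(self, description: str) -> None:
        """Step 3: record a targeted fix."""
        self.fixes.append(description)


# Illustrative usage with made-up values.
incident = Incident("inc-001", "Agent sent an internal file to an external address")
incident.capture("tool_call_log", "logs/inc-001/tool_calls.jsonl")
incident.capture("retrieved_documents", "logs/inc-001/retrieval.json")
incident.attribute(
    CauseCategory.CONTEXT,
    "Retrieved page contained injected instructions",
    evidence=["retrieved_documents"],
)
incident.add_fix("Strip instructions from retrieved content before it reaches the agent")
print([c.value for c in incident.unexamined()])
```

Requiring evidence before a cause can be recorded is one way to keep the review forensic rather than speculative, and listing the unexamined categories is a reminder that a single incident can have contributing causes in more than one of them.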