The Butterfly Defect: Diagnosing LLM Failures in Tool-Agent Chains

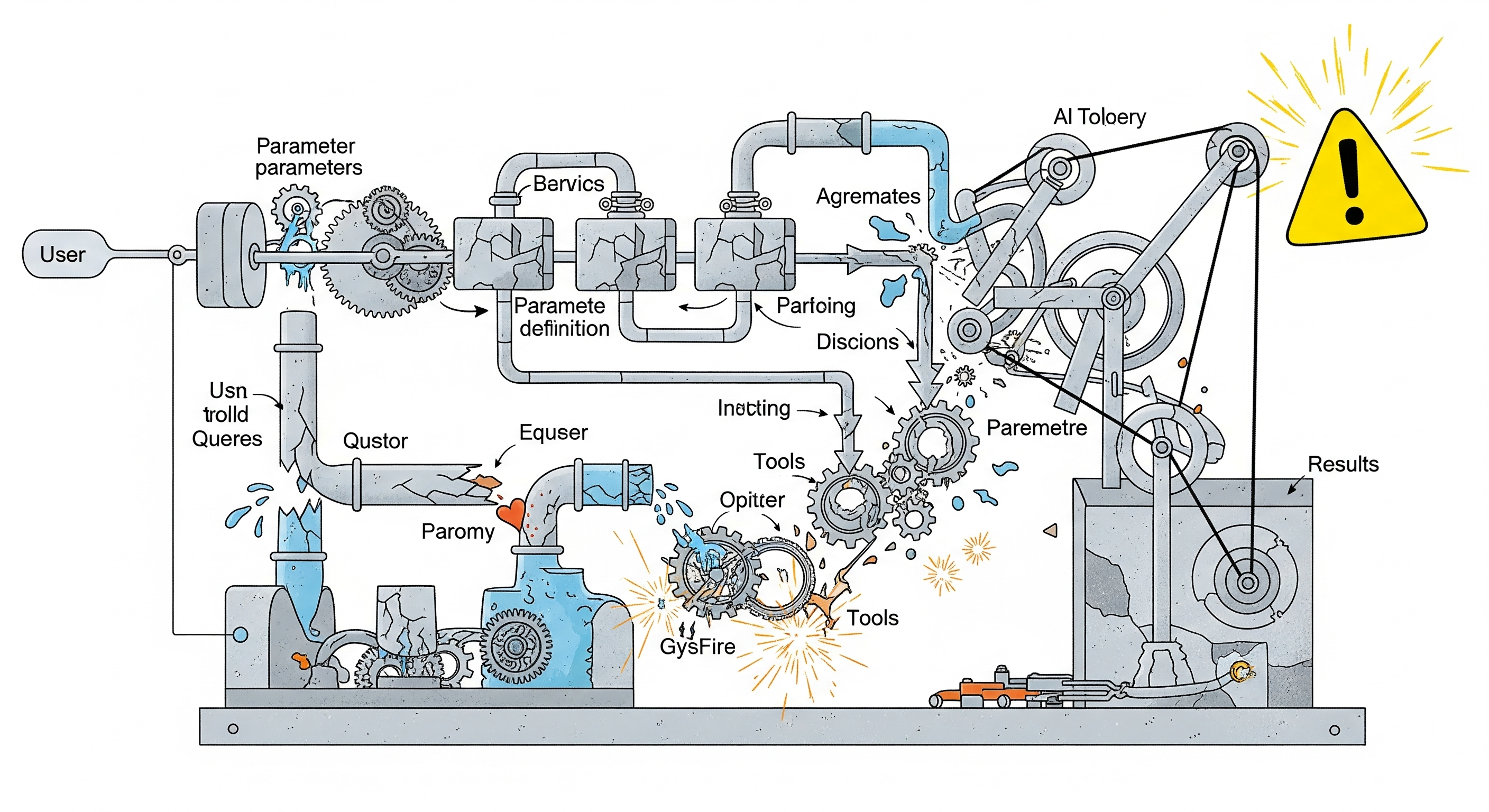

As LLM-powered agents become the backbone of many automation systems, their ability to reliably invoke external tools is under scrutiny. Despite impressive multi-step reasoning, many such agents crumble in practice, not because they can’t plan, but because they can’t parse. One wrong parameter or one mismatched data type, and the whole chain collapses. A new paper, “Butterfly Effects in Toolchains,” offers the first systematic taxonomy of these failures, exposing how parameter-filling errors propagate through tool-invoking agents. The findings aren’t just technical quirks: they point to deep flaws in how current LLM systems are evaluated, built, and safeguarded. ...