When AI Knows the Map but Gets Lost on the Journey

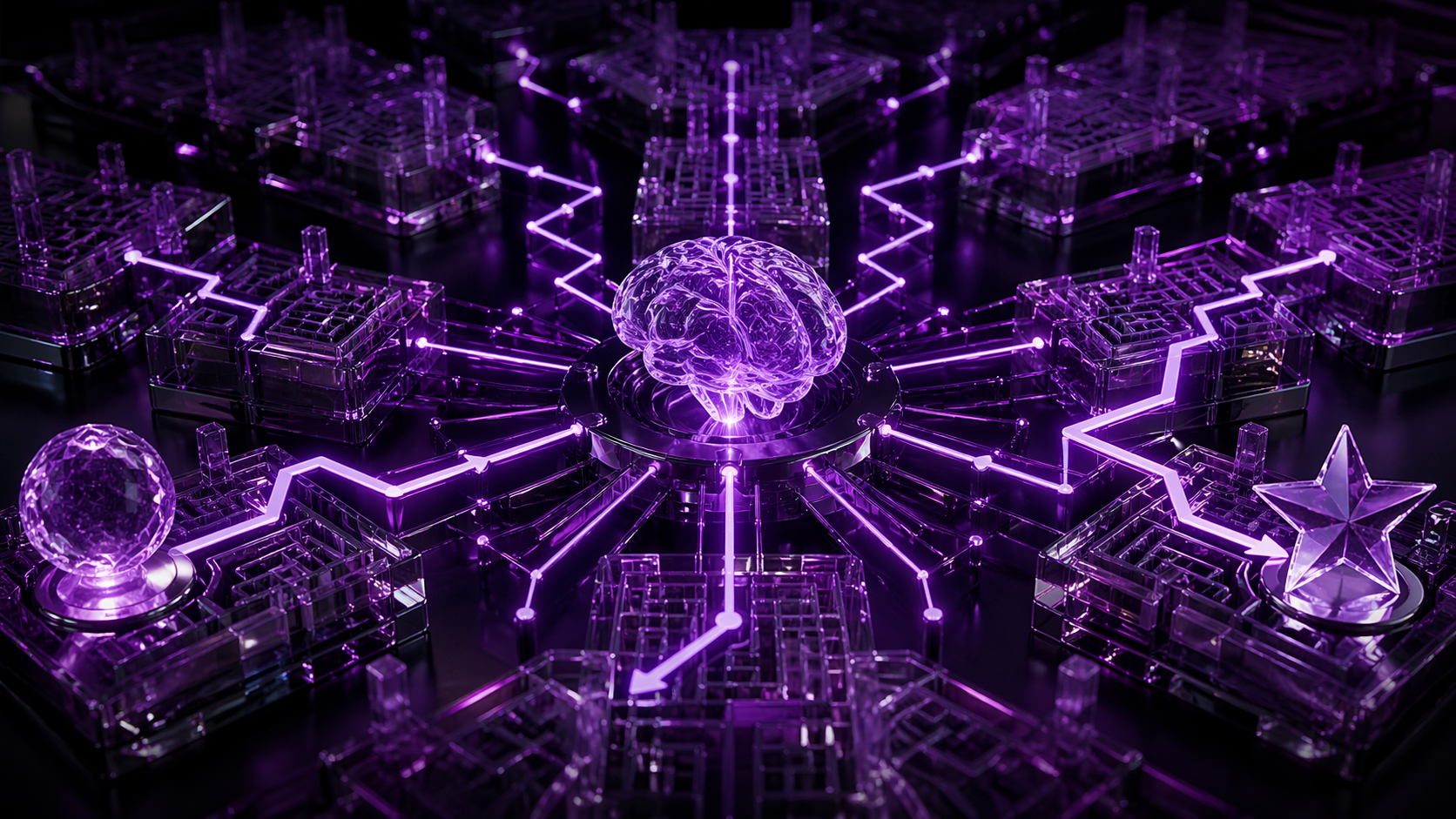

Opening: Why this matters now

Everyone wants AI agents that can plan, reason, and execute multi-step work. Fewer people ask the impolite question: can they keep doing it when the task gets longer? A new ICLR 2026 paper studies this with unusual discipline. Instead of another benchmark made of messy internet text and leaderboard optimism, the authors use shortest-path planning in synthetic maps to isolate one brutal truth: many models can transfer skills to new environments, yet still collapse when the sequence of decisions extends too far. ...