Longer Yet Dumber: Why LLMs Fail at Catching Their Own Coding Mistakes

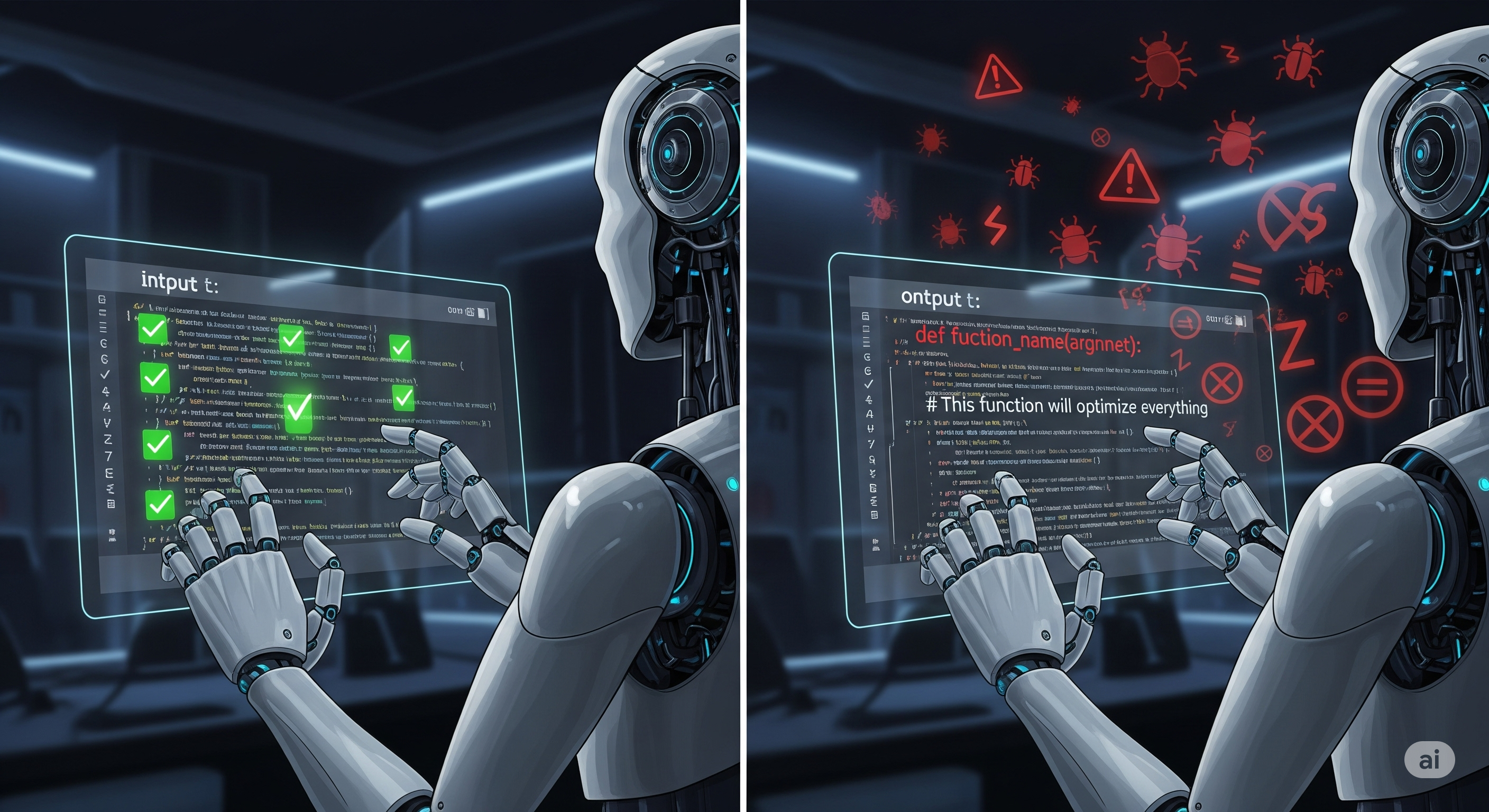

When a junior developer misunderstands your instructions, they might still write code that compiles and runs but does the wrong thing. Large language models (LLMs) do much the same when given a faulty premise. A recent paper, Refining Critical Thinking in LLM Code Generation, introduces FPBench, a benchmark that probes an overlooked blind spot: whether AI models can detect flawed assumptions before they generate a single line of code. Spoiler: they usually can’t. ...