The Clock Inside the Machine: How LLMs Construct Their Own Time

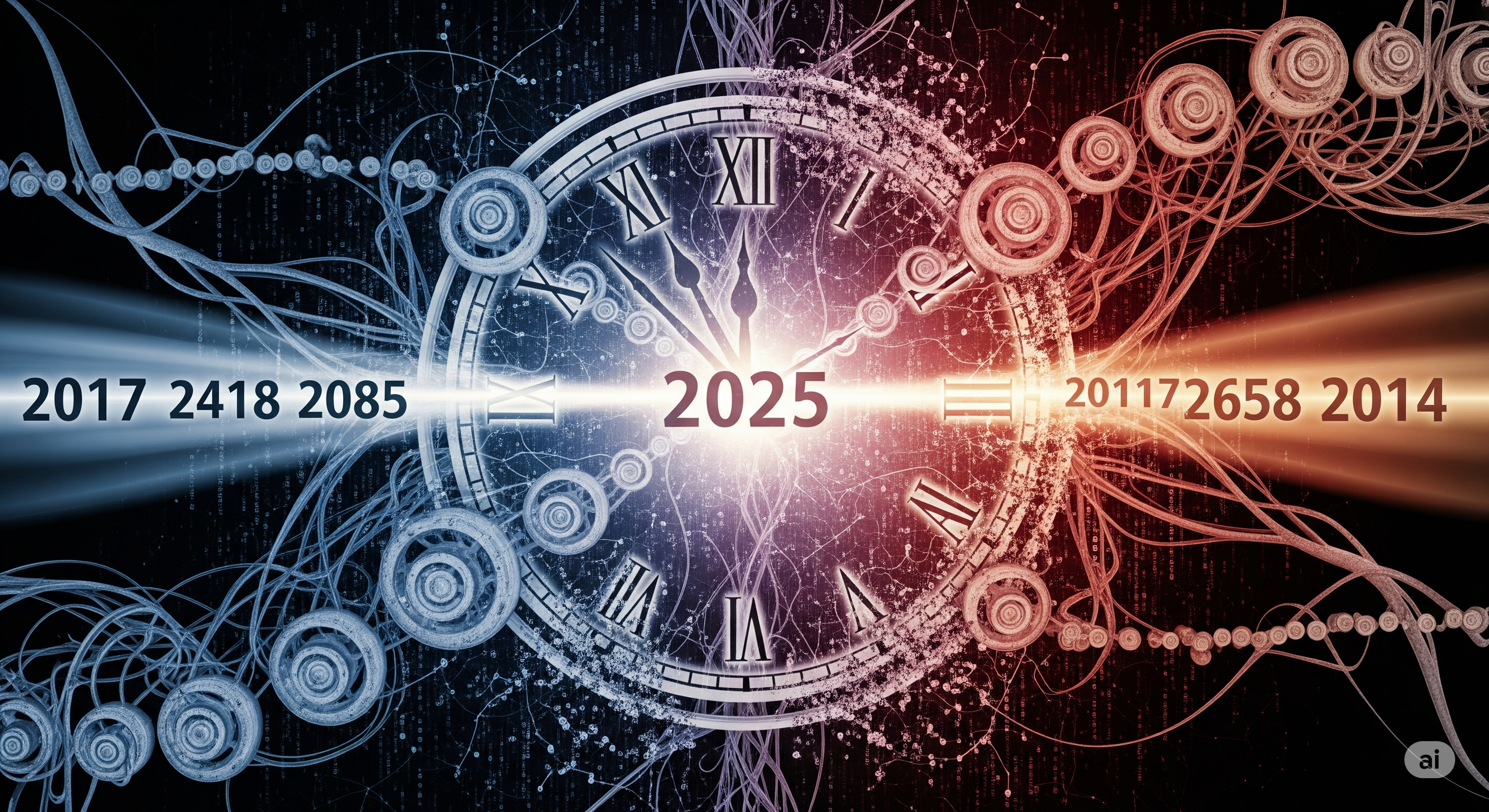

What if your AI model isn’t just answering questions, but living in its own version of time? A new paper titled *The Other Mind* makes a bold claim: large language models (LLMs) exhibit temporal cognition that mirrors how humans perceive time, not as raw numbers but as a subjective, compressed mental landscape. Using a cognitive science task known as similarity judgment, the researchers asked 12 LLMs, from GPT-4o to Qwen2.5-72B, to rate how similar two years (like 1972 and 1992) felt. The results were startling: instead of comparing years linearly, larger models spontaneously centered their judgments on a reference year, typically close to 2025, and perceived time logarithmically relative to it. In other words, just like us, they compress the distant past: 1520 and 1530 feel more alike than two years a decade apart near the present. ...
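To make the idea of reference-centered, logarithmic time concrete, here is a minimal Python sketch. It is not the paper's method: the function names, the fixed reference year of 2025, the `+1` inside the logarithm, and the `exp(-d)` similarity mapping are all assumptions chosen for illustration.

```python
import math

# Assumed reference year; the article only says "typically close to 2025".
REFERENCE_YEAR = 2025


def log_time_distance(year_a: int, year_b: int, reference: int = REFERENCE_YEAR) -> float:
    """Distance between two years after log-compressing each year's offset from the reference."""
    # log1p(x) = log(1 + x); the +1 is an illustrative choice so the reference year itself maps to 0.
    pos_a = math.log1p(abs(reference - year_a))
    pos_b = math.log1p(abs(reference - year_b))
    return abs(pos_a - pos_b)


def similarity(year_a: int, year_b: int, reference: int = REFERENCE_YEAR) -> float:
    """Map the distance into (0, 1]; exp(-d) is an arbitrary monotone choice, not taken from the paper."""
    return math.exp(-log_time_distance(year_a, year_b, reference))


# Two pairs, both ten years apart, at different distances from the reference year.
for a, b in [(1520, 1530), (2000, 2010)]:
    print(f"{a} vs {b}: distance={log_time_distance(a, b):.3f}, similarity={similarity(a, b):.3f}")
```

Under this assumed form, a ten-year gap shrinks the further it sits from the reference year, so the 1520/1530 pair scores as more similar than the 2000/2010 pair. One quirk of the toy formula is that two years mirrored around the reference (say, five years before and five years after) get a distance of zero, so it should be read as an intuition aid rather than a fitted model.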