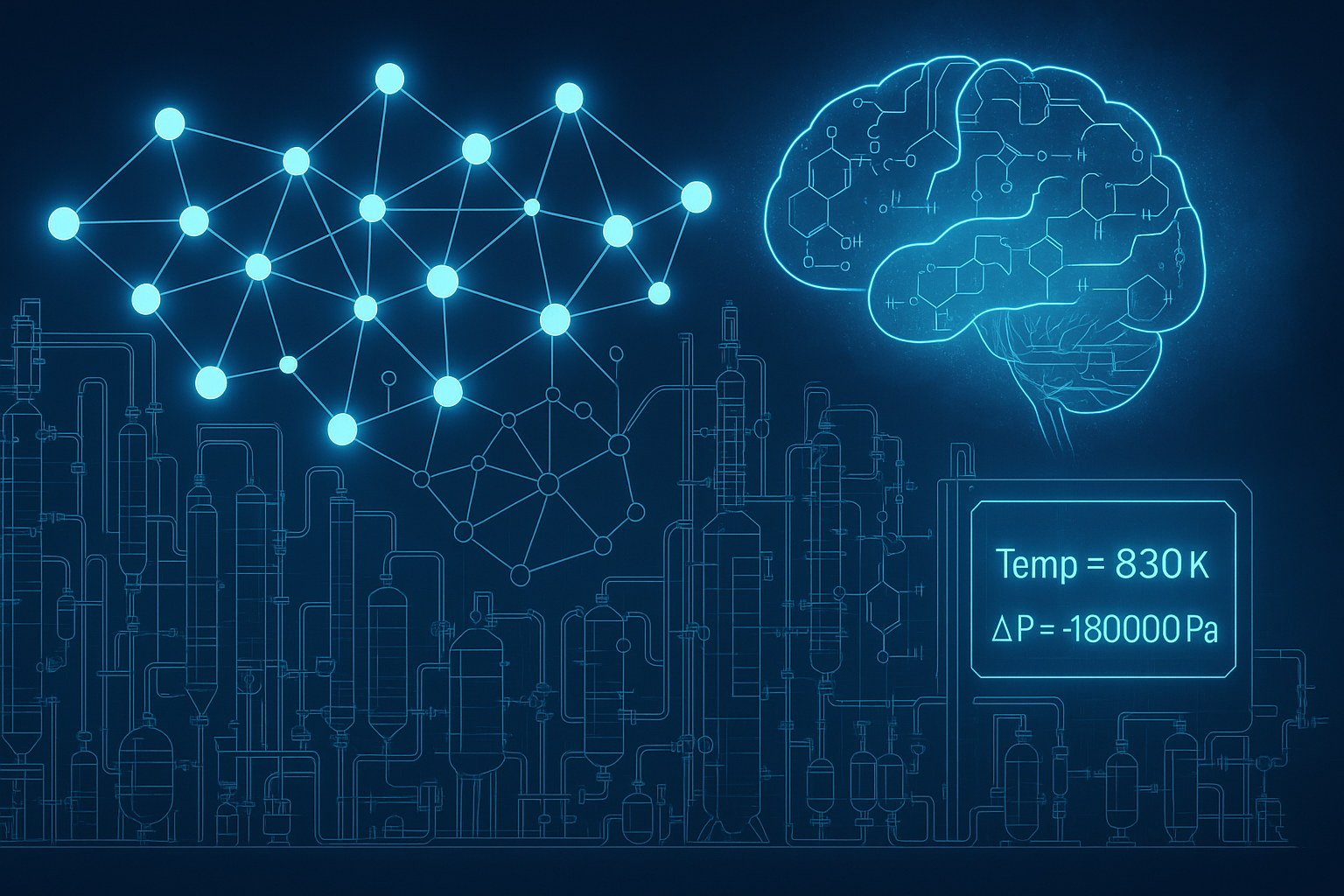

Catalysts of Thought: How LLM Agents are Reinventing Chemical Process Optimization

In chemical engineering, optimization is both a science and an art. But when operating conditions are ambiguous or constraints are missing, even the most robust solvers stumble. Enter the next-gen solution: a team of LLM agents that not only understand the problem but also define it.

When Optimization Meets Ambiguity

Traditional methods like IPOPT or grid search work well, provided you already know the bounds of the search space. In real-world industrial setups, however, engineers often have to guess the feasible ranges from heuristics and fragmented documentation. This paper from Carnegie Mellon University breaks the mold by deploying AutoGen-based multi-agent LLMs that generate constraints, propose solutions, validate them, and run simulations, all with minimal human input. ...
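To make the generate, propose, validate, simulate loop concrete, here is a minimal sketch in plain Python. It is not the paper's implementation and does not use the AutoGen API: every function is a hypothetical stand-in for an agent, the variable names and ranges are invented for illustration, the proposer draws random points where an LLM would suggest candidates, and the "simulator" is a toy cost function.

```python
import random

# All names, ranges, and the cost function below are illustrative assumptions.

def generate_constraints():
    """Stand-in for the constraint-generation agent: assumed feasible ranges."""
    return {"temperature_C": (100.0, 300.0), "pressure_bar": (1.0, 20.0)}

def propose(bounds, rng):
    """Stand-in for the proposal agent: a random point inside the ranges."""
    return {name: rng.uniform(lo, hi) for name, (lo, hi) in bounds.items()}

def validate(candidate, bounds):
    """Stand-in for the validation agent: check every variable is in range."""
    return all(bounds[k][0] <= v <= bounds[k][1] for k, v in candidate.items())

def simulate(candidate):
    """Toy cost standing in for a process simulator; lower is better."""
    t = candidate["temperature_C"]
    p = candidate["pressure_bar"]
    return (t - 220.0) ** 2 / 100.0 + (p - 8.0) ** 2

def optimize(n_iter=200, seed=0):
    """Run the loop and return the ranges, the best candidate, and its cost."""
    rng = random.Random(seed)
    bounds = generate_constraints()
    best, best_cost = None, float("inf")
    for _ in range(n_iter):
        candidate = propose(bounds, rng)
        if not validate(candidate, bounds):
            continue  # rejected candidates never reach the simulator
        cost = simulate(candidate)
        if cost < best_cost:
            best, best_cost = candidate, cost
    return bounds, best, best_cost

if __name__ == "__main__":
    bounds, best, best_cost = optimize()
    print(best, best_cost)
```

The point of the sketch is the ordering: the ranges are produced first, and only candidates that pass validation are sent to the (typically expensive) simulation step.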