Opening — Why this matters now

The first wave of enterprise AI adoption was obsessed with model choice. Which model is smarter? Which model writes better? Which model can reason, code, browse, call tools, summarize contracts, and politely pretend it enjoys quarterly planning?

That was the easy part. The less glamorous question is now becoming more expensive: how do we serve all these model calls reliably, cheaply, and at scale?

Zijie Zhou’s position paper, “LLM Serving Needs Mathematical Optimization and Algorithmic Foundations, Not Just Heuristics,” argues that LLM inference serving has outgrown the era of generic heuristics [1]. The paper’s core point is not that existing systems are badly engineered. Quite the opposite: systems such as vLLM and SGLang have delivered major serving improvements through continuous batching, paged attention, prefill-decode disaggregation, and other architectural innovations. The problem is that many of the control decisions inside these systems still rely on classical, general-purpose policies: round-robin routing, join-shortest-queue, first-come-first-served scheduling, and least-recently-used cache eviction.

Those policies are simple. They are deployable. They are also, in the paper’s view, structurally under-informed.

LLM serving is not ordinary web serving with a GPU costume. Requests have two distinct phases. The prefill phase is compute-heavy; the decode phase is memory-bandwidth-heavy. KV cache memory grows token by token. Output length is unknown at admission time. Continuous batching means requests enter and exit dynamically. In mixture-of-experts (MoE) models, expert routing can create straggler GPUs. In multimodal systems, cached objects differ wildly in size and recomputation cost.

A normal distributed-system heuristic sees a queue.

An LLM serving system sees a queue where the jobs grow while being processed, their future size is unknown, their migration is expensive, their memory footprint matters as much as compute, and synchronization barriers punish imbalance. Naturally, the industry’s first instinct was to use round-robin. We are a practical species, not always a wise one.

The paper is a position paper, so it does not present one grand new algorithm and a leaderboard victory lap. Instead, it maps the algorithmic landscape of LLM serving and argues that the next efficiency frontier will come from formal optimization, queueing theory, online algorithms, and scheduling theory adapted to LLM-specific structure.

For business readers, the message is blunt: AI cost control is moving upstream from invoice monitoring to workload mathematics.

Background — Context and prior art

LLM inference has become production infrastructure. When a user asks a chatbot a question, calls an AI coding assistant, uploads an image to a multimodal model, or runs an agentic workflow, the visible response is only the final surface. Underneath, the serving layer must allocate GPU compute, GPU memory, and KV cache space, and must manage network communication, request queues, worker pools, and embedding or prefix cache capacity.

The paper identifies several serving innovations that already changed the economics of inference:

| Serving innovation | What it improved | Why it created new control problems |
|---|---|---|
| Continuous batching | Higher GPU utilization by allowing requests to join and leave batches dynamically | The scheduler must decide which waiting requests to admit as capacity opens |
| Paged attention | Better KV cache memory management through block-based allocation | Cache pressure becomes a first-class operational constraint |
| Prefill-decode disaggregation | Specialized worker pools for compute-heavy prefill and memory-heavy decode | Operators must allocate capacity between phases under changing workloads |
| Mixture-of-experts models | Larger model capacity without proportional compute growth | Token routing and expert placement can create GPU stragglers |
| Multimodal serving | Support for images, video, audio, and text | Embedding caches must handle objects with different sizes and recomputation costs |

The prior art, as the paper describes it, is not empty. There are strong systems papers, production frameworks, and emerging optimization work. But the author argues that the algorithmic core of serving has lagged behind architectural progress. Many practical systems still make decisions using policies inherited from classical distributed computing.

That inheritance is understandable. Round-robin, FCFS, and LRU are not stupid policies. They are robust, simple, and easy to reason about operationally. They also require little information. A router does not need to understand output length uncertainty. A cache does not need to estimate recomputation cost. A scheduler does not need a formal model of growing memory demand.

The drawback is the flip side of that strength: these policies ignore information that matters.

The paper’s conceptual move is to reframe LLM serving as a family of structured optimization problems:

| Decision area | Common heuristic | LLM-specific structure being ignored | Optimization lens |
|---|---|---|---|
| MoE expert load balancing | Auxiliary losses, routing noise, capacity caps, reactive bias updates | Expert popularity creates straggler GPUs during synchronized communication | Constrained assignment / linear programming |
| Request routing across decode workers | Round-robin, random, power-of-two choices, cache-aware routing | KV cache grows, output length is unknown, assignment is sticky | Online integer optimization |
| Worker-level scheduling | First-come-first-served | Shorter requests and memory-light requests may improve average latency and throughput | Scheduling with memory constraints and uncertain job length |
| Capacity planning | Reactive autoscaling from queue depth or utilization | A system can be compute-stable but memory-unstable | Queueing theory with compute and KV cache constraints |
| Multimodal cache eviction | LRU | Cached objects have different sizes and miss costs | Cost-aware online caching |

This is the paper’s central claim: LLM serving has enough distinctive structure that generic policies leave money, latency, reliability, and energy efficiency on the table.

That does not mean every production system should run an LP solver in the hot path tomorrow morning. The paper is more subtle. Formal optimization can matter even when the final deployed policy is a fast heuristic. The point of theory is often to reveal which constraints bind, which variables matter, and which simplified rule is worth deploying.

This is where the paper uses airline revenue management as historical precedent. Airlines did not become profitable by solving a massive linear program for every passenger at the booking screen. The LP revealed bid prices: the marginal value of seats on flight legs. Those shadow prices became fast accept/reject rules. The mathematics did not replace operations. It disciplined operations.

LLM serving may need the same kind of discipline.

Analysis or Implementation — What the paper does

Because this is a conceptual position paper, “implementation” means the paper’s analytical architecture: it surveys the decision problems inside LLM serving, explains why existing heuristics are inadequate, reviews emerging optimization-based approaches, and answers common objections.

The paper’s argument has four layers.

1. LLM serving is structurally different from classical serving

The paper emphasizes two technical facts that matter for almost every downstream decision.

First, inference has phase asymmetry. Prefill processes the prompt and is compute-bound. Decode generates tokens sequentially and is memory-bandwidth-bound. Optimizing one phase does not automatically optimize the other.

Second, KV cache memory grows during generation. A request’s memory footprint is not fixed at arrival. It expands as new tokens are produced, and final output length is unknown until the request ends.

That single detail corrupts many comfortable assumptions from classical scheduling and load balancing. Jobs are not fixed-size objects. They are growing objects. The system must make admission and routing decisions before knowing the final memory burden.
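To make the "growing object" point concrete, here is a minimal sketch of how a request's KV cache footprint scales with tokens. It assumes a standard attention layout in which every layer stores one key and one value vector per KV head for each token. The `ModelShape` class and the numbers in it are hypothetical and chosen only for illustration; they do not come from the paper.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelShape:
    """Hypothetical attention shape; values used below are illustrative only."""
    num_layers: int
    num_kv_heads: int
    head_dim: int
    bytes_per_value: int  # 2 for fp16 or bf16


def kv_bytes_per_token(shape: ModelShape) -> int:
    # Each layer stores one key and one value vector per KV head for every token.
    return 2 * shape.num_layers * shape.num_kv_heads * shape.head_dim * shape.bytes_per_value


def kv_bytes(shape: ModelShape, prompt_tokens: int, generated_tokens: int) -> int:
    # The footprint covers the prompt plus every token generated so far.
    return (prompt_tokens + generated_tokens) * kv_bytes_per_token(shape)


shape = ModelShape(num_layers=32, num_kv_heads=8, head_dim=128, bytes_per_value=2)

at_admission = kv_bytes(shape, prompt_tokens=1000, generated_tokens=0)
after_decode = kv_bytes(shape, prompt_tokens=1000, generated_tokens=2000)
print(at_admission, after_decode)
```

Under these assumed numbers the request holds three times as much memory at completion as it did at admission, and the scheduler had no way of knowing the final figure when it admitted the request.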

A simplified way to state the serving problem is:

$$ \text{Good serving} \neq \max(\text{GPU utilization}) $$

A more realistic objective is closer to:

$$ \min \{\text{latency},\ \text{idle time},\ \text{cache misses},\ \text{energy cost},\ \text{SLA violations}\} $$

subject to compute, memory, synchronization, batching, and fairness constraints.

That is not a vibe. That is an optimization problem.

2. The paper maps where heuristics are currently doing too much work

The author walks through four major bottleneck areas.

MoE expert routing and load balancing. In expert-parallel MoE deployment, tokens are routed to expert networks distributed across GPUs. If too many tokens concentrate on experts hosted by a few GPUs, those GPUs become stragglers. Other GPUs wait at synchronization barriers. The paper argues that inference-time expert routing is a constrained assignment problem, yet it is often handled indirectly through training-time auxiliary losses or reactive balancing heuristics.
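One way to write the balancing problem as a linear program is sketched below. This is an illustrative formulation, not the one used in the paper or in any production system. All symbols are assumptions introduced here: $n_e$ is the number of tokens routed to expert $e$ in the current batch, $R(e)$ is the set of GPUs hosting a replica of expert $e$, $x_{e,g}$ is the number of those tokens sent to the replica on GPU $g$, and $z$ is the load on the busiest GPU.

$$
\begin{aligned}
\min_{x,\,z}\quad & z \\
\text{s.t.}\quad & \sum_{g \in R(e)} x_{e,g} = n_e && \forall e \\
& \sum_{e:\ g \in R(e)} x_{e,g} \le z && \forall g \\
& x_{e,g} \ge 0 && \forall e,\ g \in R(e)
\end{aligned}
$$

Minimizing $z$ minimizes the straggler, which is the GPU every other GPU waits for at the synchronization barrier. Token counts are integers in practice, so the continuous solution would need rounding.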

Request routing to decode workers. In data-parallel decoding with expert-parallel internals, each worker has its own KV cache state. Once a request is assigned, moving it is expensive because the KV cache must move too. Classical load balancing policies do not fully capture unknown output length, predictable KV growth, sticky assignment, and synchronization barriers.
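The gap between a state-blind and a state-aware router can be shown with a toy comparison. The sketch below contrasts round-robin with a simple "send to the worker holding the fewest KV tokens" rule. It is a stand-in for illustration, not the online integer optimization the paper discusses, and it only looks at prompt length at arrival, so it still ignores unknown output length. The `Worker` class, function names, and prompt lengths are all made up.

```python
from dataclasses import dataclass
from itertools import cycle


@dataclass
class Worker:
    name: str
    kv_tokens: int = 0  # tokens currently held in this worker's KV cache


def route_round_robin(workers, prompt_lengths):
    # Classical policy: ignore worker state and hand requests out in turn.
    for worker, tokens in zip(cycle(workers), prompt_lengths):
        worker.kv_tokens += tokens


def route_least_kv(workers, prompt_lengths):
    # State-aware policy: send each request to the worker holding the fewest KV tokens.
    for tokens in prompt_lengths:
        target = min(workers, key=lambda w: w.kv_tokens)
        target.kv_tokens += tokens


def imbalance(workers):
    # Gap between the heaviest and lightest worker.
    loads = [w.kv_tokens for w in workers]
    return max(loads) - min(loads)


prompts = [4000, 200, 3800, 300, 4200, 100]  # made-up prompt lengths

rr = [Worker("w0"), Worker("w1")]
route_round_robin(rr, prompts)

lk = [Worker("w0"), Worker("w1")]
route_least_kv(lk, prompts)

print(imbalance(rr), imbalance(lk))
```

With this alternating long/short arrival pattern, round-robin piles every long prompt onto one worker. Because assignment is sticky, that imbalance cannot be cheaply corrected later.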

Scheduling and capacity planning inside workers. Continuous batching lets requests enter and leave dynamically. That raises admission questions: when a slot opens, which request should enter? FCFS is simple but ignores request characteristics. The related capacity question is even more business-critical: how many workers are needed to keep the system stable under a workload distribution? The paper argues that queueing analysis can help operators plan capacity before discovering instability during production traffic.
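The admission question can be sketched as a small function: given a waiting queue and some free KV capacity, which requests enter? The example below compares strict FCFS against a memory-aware "smallest predicted footprint first" rule. It assumes a length predictor exists and supplies `predicted_output_tokens`; the source does not specify one, and real output lengths are unknown at admission. All names and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    rid: str
    prompt_tokens: int
    predicted_output_tokens: int  # assumed to come from some predictor

    @property
    def predicted_kv_tokens(self) -> int:
        return self.prompt_tokens + self.predicted_output_tokens


def admit_fcfs(waiting, free_kv_tokens):
    # Strict arrival order: stop at the first request that does not fit.
    admitted = []
    for req in waiting:
        if req.predicted_kv_tokens > free_kv_tokens:
            break
        admitted.append(req)
        free_kv_tokens -= req.predicted_kv_tokens
    return admitted


def admit_smallest_first(waiting, free_kv_tokens):
    # Memory-aware variant: consider the smallest predicted footprints first.
    admitted = []
    for req in sorted(waiting, key=lambda r: r.predicted_kv_tokens):
        if req.predicted_kv_tokens > free_kv_tokens:
            break
        admitted.append(req)
        free_kv_tokens -= req.predicted_kv_tokens
    return admitted


queue = [
    Request("a", 6000, 2000),
    Request("b", 300, 200),
    Request("c", 500, 500),
    Request("d", 200, 100),
]
fcfs = admit_fcfs(queue, free_kv_tokens=2000)
smallest = admit_smallest_first(queue, free_kv_tokens=2000)
print([r.rid for r in fcfs], [r.rid for r in smallest])
```

Here FCFS admits nothing because the large request at the head of the queue blocks three small ones that would fit. The smallest-first rule has its own failure mode, since it can starve large requests indefinitely, which is part of why a formal treatment with fairness constraints is useful.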

Caching and eviction. In multimodal inference, embeddings for images or video may be cached. LRU eviction ignores object size and recomputation cost. Evicting a large, expensive-to-recompute video embedding just because it was less recently used than a cheap thumbnail can be operationally silly, in the technical sense of “please enjoy your GPU bill.”

The caching example can be summarized with a simple score inspired by the paper’s discussion of Least Expected Cost:

$$ \text{keep\_score}_i = \frac{\text{miss cost}_i}{\text{cache size}_i} \times \widehat{P}(\text{reuse}_i) $$

A lower-scoring item is a better eviction candidate. This is not merely a hit-rate mindset. It is a cost-minimization mindset.
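A minimal sketch of that score in code, set against LRU, is below. The item names, sizes, miss costs, and reuse probabilities are invented for illustration, and the reuse probability is assumed to be supplied by some estimator that this sketch does not implement.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CachedItem:
    key: str
    size_mb: float       # memory occupied in the cache
    miss_cost_ms: float  # time to recompute the embedding on a miss
    reuse_prob: float    # estimated probability of reuse (assumed given)
    last_used: int       # logical timestamp, larger means more recent


def keep_score(item: CachedItem) -> float:
    # Expected recomputation cost saved per unit of cache space.
    return item.miss_cost_ms / item.size_mb * item.reuse_prob


def evict_lru(items):
    # LRU looks only at recency.
    return min(items, key=lambda i: i.last_used)


def evict_lowest_score(items):
    # Cost-aware rule: evict the item that is cheapest to lose.
    return min(items, key=keep_score)


cache = [
    CachedItem("video_embedding", size_mb=200.0, miss_cost_ms=9000.0, reuse_prob=0.5, last_used=1),
    CachedItem("thumbnail_embedding", size_mb=2.0, miss_cost_ms=20.0, reuse_prob=0.2, last_used=2),
]

print(evict_lru(cache).key, evict_lowest_score(cache).key)
```

With these made-up values LRU evicts the video embedding, while the score-based rule evicts the thumbnail. Because the score divides by size, a large object can still lose if its miss cost or reuse probability is low enough.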

3. The paper argues theory has practical functions, not decorative value

The author identifies four benefits of formal methods.

| Benefit of theory | Practical meaning for operators | What heuristics struggle to provide |
|---|---|---|
| Worst-case guarantees | More confidence under unusual workload spikes or distribution shifts | Benchmark-specific comfort |
| Fundamental limits | Better capacity planning before deployment | Reactive discovery of instability |
| Algorithmic structure | Clearer engineering priorities and approximations | Trial-and-error tuning |
| Optimality baselines | A way to know whether further optimization is worth it | Endless micro-optimization with no ceiling |

This is an important part of the paper because it prevents a common misunderstanding. The author is not saying every practical heuristic is useless. The claim is that heuristics should be informed by the problem’s structure, not inherited lazily from older systems.

In other words: keep heuristics if needed, but stop pretending all heuristics are equally innocent.

4. The paper reviews early examples of optimization already entering LLM serving

The paper highlights several examples from recent work:

| Example discussed in the paper | Optimization idea | Practical lesson |
|---|---|---|
| DeepSeek’s LP-based MoE load balancing | Use linear programming to redistribute token workload across redundant expert replicas | Direct optimization can make objectives and constraints explicit |
| Online load balancing for decode workers | Use short-horizon forecasts and integer optimization to reduce future imbalance | Accurate full output-length prediction may be unnecessary; short-term completion forecasts can be enough |
| Queueing and scheduling models for workers | Derive stability conditions and scheduling benchmarks under KV cache constraints | Capacity planning can become proactive rather than purely reactive |
| Cost-aware multimodal caching | Evict based on expected recomputation cost, size, and reuse probability | Hit rate alone is not the right objective when miss costs vary |

These examples matter because they move the paper beyond a generic “theory is good” sermon. The author is showing that the formulation-to-policy pipeline is already beginning.

Still, the distinction must be kept clean: the paper itself is mainly a synthesis and position argument. It points to emerging results; it does not itself prove all those results anew or run a comprehensive production benchmark across serving frameworks.

That distinction is important. Otherwise, we are just doing the usual AI-industry magic trick: turning a thoughtful paper into a vendor slide with three arrows and a fake metric.

Findings — Results with visualization

The paper’s “findings” are best understood as structured conclusions rather than experimental results. It does not claim, “our new system beats baseline X by Y% across benchmark Z.” Instead, it claims that the serving layer contains decision problems where formal optimization is increasingly necessary.

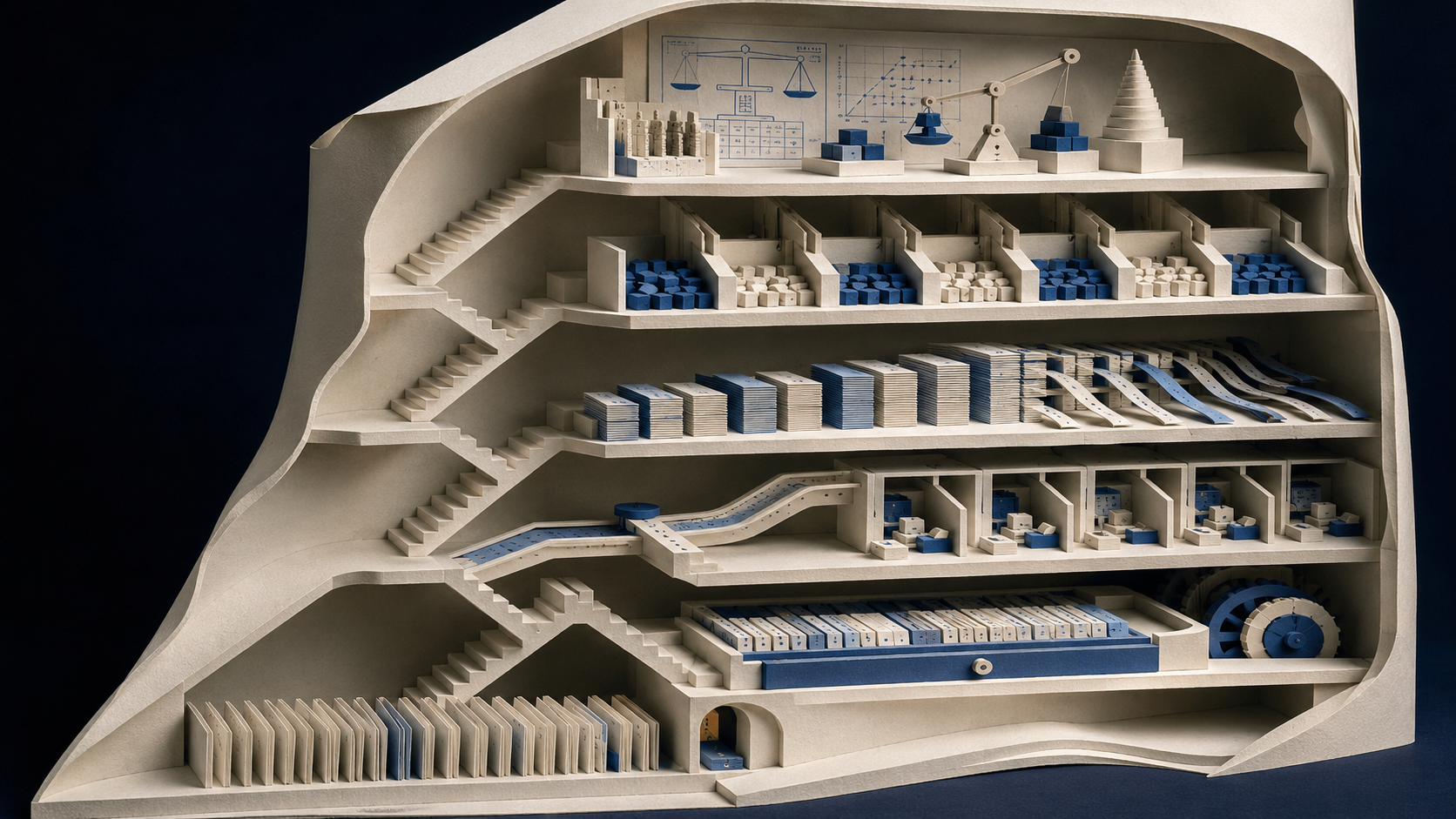

Finding 1: LLM serving is a portfolio of optimization problems

The paper’s most useful contribution for practitioners is the map of hidden decision points.

    Incoming AI workload
      |
      v
    Request routing ---> Which worker receives the request?
      |
      v
    Scheduling ---> Which request enters the active batch?
      |
      v
    KV cache control ---> How is growing memory handled?
      |
      v
    MoE routing ---> Which experts process which tokens?
      |
      v
    Caching ---> Which embeddings or prefixes stay in memory?
      |
      v
    Capacity planning ---> How many workers are needed before traffic arrives?

Each layer has a local policy. Local policies interact. A routing decision changes KV cache distribution. KV cache distribution affects decode load. Decode imbalance creates synchronization idle time. Cache eviction affects time to first token (TTFT). Capacity planning determines whether the whole system remains stable.

The practical finding is that serving performance is not one knob. It is a chain of coupled decisions.

Finding 2: “Good enough” heuristics can hide compounding costs

The paper fairly acknowledges the counterargument: existing heuristics do work in production. If they did not, the systems would not be serving millions or billions of requests.

But “works” is not the same as “efficient under workload shifts.” A heuristic can be acceptable under one traffic mix and fragile under another. A queue policy validated on chat traces may behave differently under agentic workloads where one user request triggers multiple tool calls, retrieval calls, model calls, pauses, branches, and resumptions.

The business translation is simple:

| Operational symptom | Possible algorithmic cause | Business consequence |
|---|---|---|
| GPUs appear utilized but latency is unstable | Synchronization barriers and load imbalance | SLA volatility |
| Autoscaling reacts too late | Capacity model ignores provisioning delay or KV memory pressure | Costly overprovisioning or degraded service |
| Cache hit rate looks fine but cost remains high | Cache policy ignores heterogeneous miss costs | Hidden inference waste |
| Short requests wait behind long generations | FCFS ignores job-length structure | Poor user experience for simple tasks |
| Agentic workflows become unpredictable | Existing models assume simple request lifecycles | Harder enterprise rollout and budgeting |

This is where the paper becomes ROI-relevant. Infrastructure inefficiency is not always visible as a dramatic outage. Often it appears as a slow leak: unnecessary GPU capacity, inflated latency buffers, conservative overprovisioning, and vague “AI is expensive” complaints from finance.

Very sophisticated, very modern, very spreadsheet-shaped pain.

Finding 3: Formal optimization can improve heuristics without replacing systems engineering

The strongest version of the paper’s argument is not “math beats engineering.” That would be silly, and also an excellent way to be ignored by engineers.

The stronger argument is:

    Mathematical formulation
      -> identifies objective and constraints
      -> reveals structural insight
      -> guides fast production policy
      -> provides baseline for whether tuning is worth it

The airline revenue management analogy is useful here. Optimization did not need to sit inside every transaction. It generated shadow-price logic that could be deployed cheaply. Likewise, LLM serving optimization may generate practical routing thresholds, batching rules, eviction scores, or capacity formulas.

This means business operators should not ask only, “Does your serving stack use advanced optimization?” That question is too vague. Better questions are:

| Due diligence question | Why it matters |
|---|---|
| How does the system handle unknown output length? | Output length drives memory and latency risk |
| Are routing decisions sticky, and how is cache imbalance controlled? | Bad early assignments can compound over time |
| Does autoscaling account for KV cache memory, not just GPU utilization? | Compute-stable systems can still become memory-unstable |
| Are multimodal cache decisions cost-aware? | Recomputing expensive embeddings can dominate latency and cost |
| Are there theoretical or empirical baselines for “near optimal”? | Without a ceiling, tuning becomes superstition with dashboards |
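The autoscaling question in the table can be illustrated with a back-of-envelope check. The sketch below uses Little's law (requests in flight equal arrival rate times time in system) on mean values only, so it ignores queueing delay and variance and is far cruder than the stability analysis the paper points to. Every parameter name and number is an assumption made up for this example.

```python
def compute_utilization(arrival_rate, mean_output_tokens, worker_tokens_per_s):
    # Offered decode work divided by decode capacity; below 1.0 means compute keeps up.
    return arrival_rate * mean_output_tokens / worker_tokens_per_s


def mean_resident_kv_tokens(arrival_rate, mean_prompt_tokens,
                            mean_output_tokens, tokens_per_s_per_request):
    # Little's law: requests in flight = arrival rate x time spent decoding.
    time_in_decode_s = mean_output_tokens / tokens_per_s_per_request
    in_flight = arrival_rate * time_in_decode_s
    # A request holds its prompt plus, on average, half of its eventual output.
    mean_tokens_held = mean_prompt_tokens + mean_output_tokens / 2
    return in_flight * mean_tokens_held


# Made-up workload and hardware figures.
utilization = compute_utilization(
    arrival_rate=4, mean_output_tokens=500, worker_tokens_per_s=4000)
resident = mean_resident_kv_tokens(
    arrival_rate=4, mean_prompt_tokens=3000,
    mean_output_tokens=500, tokens_per_s_per_request=20)
kv_capacity_tokens = 300_000

print(utilization, resident, resident > kv_capacity_tokens)
```

In this toy case compute utilization is 50%, which a utilization-based autoscaler would read as healthy, yet the average KV demand already exceeds the assumed cache capacity. That is the "compute-stable but memory-unstable" situation in miniature.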

Finding 4: Agentic inference will make the problem harder

The paper closes by identifying agentic inference as a future research frontier. This is especially relevant for business automation.

Traditional chat inference is already variable. Agentic workflows are worse. A single business request might trigger planning, retrieval, tool calls, database lookups, sub-agent delegation, code execution, validation, revision, and final synthesis. The workload is branching and dependency-heavy. Requests can pause while waiting for tools. Some branches may terminate quickly; others may spawn more work.

For a normal application team, this means agentic AI cost and latency cannot be managed by assuming “one user prompt equals one model call.” That accounting model is dead. It died quietly in a tool-calling loop.

A more realistic view:

| Workload type | Serving pattern | Planning problem |
|---|---|---|
| Simple chatbot | One prompt, one response | Estimate average token usage and latency |
| RAG assistant | Retrieval plus model generation | Coordinate database latency, context size, and model call cost |
| Multimodal assistant | Encoding plus text generation | Manage preprocessing, embedding cache, and TTFT |
| Agentic workflow | Branching calls, tools, pauses, retries | Schedule dependent sub-requests under uncertain duration |
| Multi-agent automation | Multiple agents exchanging intermediate outputs | Control queue priority, state persistence, and cascading demand |

The paper does not solve agentic serving. It says the foundations are missing. That is a useful warning.
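Even without a serving theory for agents, the accounting problem is easy to demonstrate. The sketch below models one user request as a tree of sub-calls and counts the model calls and tokens underneath it. The `Call` structure, step names, and token counts are hypothetical and are not drawn from the paper.

```python
from dataclasses import dataclass, field


@dataclass
class Call:
    name: str
    tokens: int = 0             # tokens consumed by this step; 0 for non-model tool calls
    is_model_call: bool = True
    children: list["Call"] = field(default_factory=list)


def count_model_calls(call: Call) -> int:
    # Count this node if it hits the model, then recurse into its sub-calls.
    own = 1 if call.is_model_call else 0
    return own + sum(count_model_calls(c) for c in call.children)


def total_tokens(call: Call) -> int:
    # Sum tokens over the whole sub-call tree.
    return call.tokens + sum(total_tokens(c) for c in call.children)


# One user request, expressed as a made-up call tree.
workflow = Call("plan", tokens=800, children=[
    Call("retrieval_tool", is_model_call=False),
    Call("sub_agent", tokens=1500, children=[
        Call("code_execution_tool", is_model_call=False),
        Call("validate", tokens=600),
    ]),
    Call("final_synthesis", tokens=1200),
])

print(count_model_calls(workflow), total_tokens(workflow))
```

A single prompt here fans out into four model calls, and that is before retries or additional branches. Logging trees like this one is a precondition for budgeting them.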

Implications — What changes in practice

The most practical way to read this paper is not as a call for every company to hire an optimization PhD immediately. Some should. Many should not. The implication depends on where the company sits in the AI stack.

For model-serving providers

If you operate inference infrastructure, the paper is directly relevant. The serving layer is becoming a competitive frontier. Model quality is visible; serving efficiency is monetizable. A provider that can deliver lower latency, better tail reliability, and lower cost per useful task has a margin advantage.

The optimization agenda should focus on measurable operational levers:

| Lever | Metric affected | Optimization question |
|---|---|---|
| Routing | Throughput, tail latency, idle time | Which worker should receive each request under sticky KV assignment? |
| Scheduling | Mean latency, fairness, batch efficiency | Which request should be admitted next? |
| KV cache management | Memory stability, request completion, cost | When does memory pressure become unsafe? |
| Multimodal caching | TTFT, GPU cost | Which cached objects are worth keeping? |
| Capacity planning | SLA reliability, capex/opex | What fleet size is stable under expected and stressed workloads? |

This is not academic decoration. It is the difference between “we scaled by adding GPUs” and “we scaled by understanding why GPUs were idle, blocked, or memory-constrained.” One of those sounds better in a board meeting because it is better.

For enterprise AI buyers

Most businesses will not build their own LLM serving stack. They will buy API access, use managed inference, deploy vendor platforms, or run smaller private models through managed infrastructure. Even then, the paper matters.

Enterprise buyers should treat serving architecture as part of vendor risk and cost evaluation. The cheapest model on a simple per-token table may not be cheapest under long-context, multimodal, or agentic workloads. The most impressive benchmark score may not translate into stable production latency.

A practical vendor evaluation checklist:

| Question | Buyer-side interpretation |
|---|---|
| How predictable is latency under bursty workloads? | Determines whether AI can support customer-facing processes |
| How are long-context and long-output requests priced or throttled? | Reveals hidden cost exposure |
| Are multimodal workloads cached intelligently? | Matters for document, image, video, and inspection workflows |
| Are agentic workflows billed and scheduled transparently? | Prevents surprise costs from tool-call cascades |
| Are SLAs based on average latency or tail latency? | Average latency is where bad reliability goes to hide |

The business interpretation here is an extrapolation from the paper, not a direct result of it. The paper focuses on serving foundations. Cognaptus’s extension is that procurement, workflow design, and ROI evaluation should incorporate those foundations.
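The average-versus-tail question in the checklist is worth seeing in numbers. The sketch below computes the mean and a nearest-rank percentile over a made-up latency sample in which 2% of requests are very slow. The `percentile` helper is a simple illustration written for this example, not a reference implementation.

```python
import statistics


def percentile(samples, pct: int):
    # Nearest-rank percentile using integer arithmetic; pct is a whole number from 1 to 100.
    ordered = sorted(samples)
    rank = (pct * len(ordered) + 99) // 100  # ceiling of pct/100 * n
    return ordered[max(rank, 1) - 1]


# Made-up sample: 98 fast responses and 2 very slow ones, in milliseconds.
latencies_ms = [200] * 98 + [5000] * 2

mean_ms = statistics.mean(latencies_ms)
p50_ms = percentile(latencies_ms, 50)
p99_ms = percentile(latencies_ms, 99)

print(mean_ms, p50_ms, p99_ms)
```

The mean lands just under 300 ms and looks respectable, while the 99th percentile sits at 5 seconds. An SLA written against the mean would pass a service that regularly makes some users wait 25 times longer than the median.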

For AI automation teams

Teams building business automation should stop treating inference as a black-box utility with a fixed unit cost. The cost of an AI workflow depends on prompt length, output length, concurrency, retrieval design, cache reuse, tool-call branching, retry logic, and queue priority.

A document-processing workflow with repeated templates may benefit from prefix or embedding cache reuse. A customer-service agent may need strict tail-latency controls. A research automation agent may tolerate slower execution but require cost caps. A compliance workflow may prioritize auditability and deterministic scheduling over maximum throughput.

That suggests a design framework:

| Workflow characteristic | Serving implication | Design response |
|---|---|---|
| Repeated documents or forms | High cache-reuse opportunity | Standardize prompts and input structures |
| Long outputs | Greater decode memory pressure | Cap generation length and split tasks |
| Bursty demand | Queue instability risk | Use admission control and workload shaping |
| Tool-heavy agents | Branching latency and cost | Log sub-call trees and set retry budgets |
| Multimodal inputs | Expensive preprocessing and embeddings | Cache by content hash where appropriate |
| Mixed priority tasks | Fairness and SLA tradeoffs | Separate queues by business criticality |

This is where the paper’s technical argument becomes operational advice: workflow architecture and serving architecture should be designed together.

The prompt is not just text. It is a resource allocation event.
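As one concrete instance of the design responses above, "cache by content hash" can be sketched in a few lines. The example keys a cache on the SHA-256 digest of the input bytes, so identical uploads reuse the stored embedding. The `EmbeddingCache` class is hypothetical and `fake_embed` is a placeholder for a real, expensive encoder; the sketch also has no eviction policy, which a real cache would need.

```python
import hashlib


class EmbeddingCache:
    def __init__(self, embed_fn):
        self._embed_fn = embed_fn  # hypothetical encoder: bytes -> embedding
        self._store = {}
        self.misses = 0

    def get(self, content: bytes):
        # Identical bytes always hash to the same key, regardless of filename or uploader.
        key = hashlib.sha256(content).hexdigest()
        if key not in self._store:
            self.misses += 1
            self._store[key] = self._embed_fn(content)
        return self._store[key]


def fake_embed(content: bytes):
    # Placeholder standing in for an expensive image or document encoder.
    return [len(content), sum(content) % 251]


cache = EmbeddingCache(fake_embed)
first = cache.get(b"invoice-template-v1")
second = cache.get(b"invoice-template-v1")  # identical bytes, served from cache
third = cache.get(b"invoice-template-v2")   # different bytes, recomputed

print(cache.misses)
```

Hashing the content only pays off when inputs are byte-identical, which is one reason standardizing templates and input structures matters upstream.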

Direct paper claims vs business extrapolation

To keep the boundary clean, here is the separation:

| Category | What the paper directly argues | Cognaptus business interpretation |
|---|---|---|
| Technical diagnosis | LLM serving has distinctive structures: phase asymmetry, KV growth, unknown output lengths, continuous batching constraints | AI cost control requires workload-aware design, not just token-count budgeting |
| Algorithmic opportunity | Routing, scheduling, caching, and capacity planning are amenable to formal optimization | Vendor evaluation should include serving efficiency and tail-latency discipline |
| Evidence base | Emerging work shows principled methods can match or exceed heuristics while providing guarantees | Buyers should expect infrastructure vendors to explain their scheduling and cache strategies over time |
| Future frontier | Agentic inference introduces branching, pauses, dependencies, and sub-requests | Agentic automation ROI must include orchestration overhead, retries, and serving unpredictability |
| Limitation | The paper is a position/synthesis paper, not a comprehensive new benchmark suite | Businesses should treat it as a strategic lens, not a ready-made procurement scorecard |

This distinction matters. The paper gives a strong conceptual and technical case. It does not hand every operator a finished operating manual.

Conclusion

The paper’s message is timely because the AI industry is entering a less theatrical phase. Model demos are still useful, but production economics are beginning to matter more. Once AI moves from pilot to process, latency and cost stop being engineering details and become business constraints.

Zhou’s argument is that LLM serving is no longer well-described by generic distributed-system heuristics. The serving layer has its own structure: prefill-decode asymmetry, KV cache growth, unknown output lengths, sticky routing, synchronization barriers, heterogeneous cache objects, and increasingly agentic workloads. That structure should be modeled, not waved away.

The practical lesson is not “replace every heuristic with a solver.” It is better than that: use mathematical optimization to understand the system, expose the constraints, define baselines, and design production policies that are fast because they are informed—not fast because they are blind.

For businesses, this means AI ROI will increasingly depend on the boring machinery beneath the model. The company that understands serving behavior can design better workflows, negotiate better vendor terms, avoid naive cost projections, and build automation systems that survive contact with real usage.

The next AI advantage may not be the flashiest model.

It may be the queue discipline behind it.

Cognaptus: Automate the Present, Incubate the Future.

[1] Zijie Zhou, “Position: LLM Serving Needs Mathematical Optimization and Algorithmic Foundations, Not Just Heuristics,” arXiv:2605.01280v1, May 2, 2026, https://arxiv.org/abs/2605.01280.